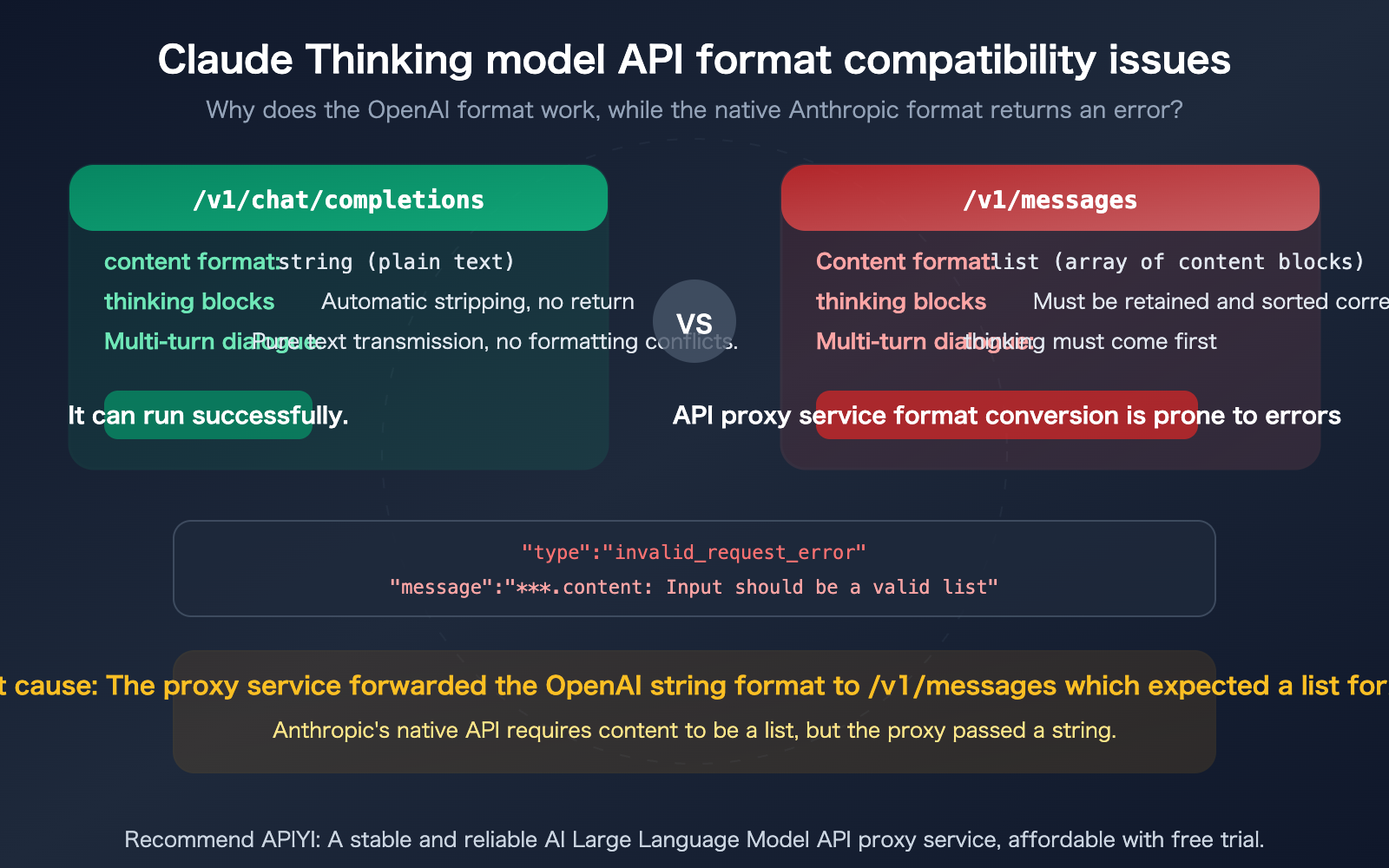

Ever run into this situation: you're using the claude-opus-4-6-thinking model, and calls via /v1/chat/completions (OpenAI format) work fine, but as soon as you switch to /v1/messages (Anthropic native format) you get the error "content: Input should be a valid list"? This seemingly counterintuitive behavior points to a compatibility issue in how the two API formats handle the Thinking model. This article starts from the underlying API formats and explains the root cause of the error and the correct way to make the call.

Core Value: After reading this article, you'll understand how the Thinking model behaves differently under the two API formats, be able to fix the "content: Input should be a valid list" error, and know how to handle thinking blocks correctly in multi-turn conversations.

Core Points of Claude Thinking Model API Compatibility

Let's get straight to the point about this "counter-intuitive" phenomenon.

| Key Point | Explanation | Impact |
|---|---|---|
| Root Cause of Error | The proxy passes content: "string" to /v1/messages, which expects content: [list] | Format mismatch causes 400 error |
| Why OpenAI Format Works | /v1/chat/completions allows content to be a string, automatically stripping thinking blocks | Simple format, better compatibility |
| Why Anthropic Format Fails | /v1/messages strictly requires content to be a list of content blocks, with thinking first | Proxy format conversion is incomplete |
| Model Name Difference | claude-opus-4-6-thinking is a proxy platform alias; official model name is claude-opus-4-6 | Thinking is enabled via parameters, not model name |
| Correct Approach | Use OpenAI format for calls, or ensure proxy correctly handles content format conversion | Choose the right endpoint + pass correct parameters |

The Technical Essence of Claude Thinking Model API Errors

The error message content: Input should be a valid list reveals a crucial format difference:

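The Anthropic native API (/v1/messages) represents message content as an array (list) of content blocks. The official API also accepts a plain string as shorthand for a single text block, but an assistant turn that carries thinking blocks can only be expressed in the list form: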

    {
      "role": "assistant",
      "content": [
        {"type": "thinking", "thinking": "Let me analyze this problem...", "signature": "CpcH..."},
        {"type": "text", "text": "Here's my answer..."}
      ]
    }

OpenAI Compatible Format (/v1/chat/completions) allows content to be a plain string:

    {
      "role": "assistant",
      "content": "Here's my answer..."
    }

When an API proxy platform (such as APIYI's backend) has its channel configured for the /v1/messages format and an upstream client sends OpenAI-format string content, the proxy has to convert "string" into [{"type": "text", "text": "string"}]. If that conversion is incomplete, especially when a Thinking model's response is passed back in the next round of the conversation, the request fails with the Input should be a valid list error.
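The string-to-list conversion described above can be sketched in a few lines of Python. This is only an illustration of the idea, not the code of any particular proxy; the function names normalize_content and normalize_messages are hypothetical.

```python
from typing import Any


def normalize_content(content: Any) -> list:
    """Return message content as a list of Anthropic-style content blocks."""
    if isinstance(content, str):
        # A plain string becomes a single text block.
        return [{"type": "text", "text": content}]
    if isinstance(content, list):
        # Already a list: keep block dicts untouched, wrap any bare strings.
        return [
            {"type": "text", "text": item} if isinstance(item, str) else item
            for item in content
        ]
    # None, dicts, numbers, etc. cannot be converted safely.
    raise TypeError(f"unsupported content type: {type(content).__name__}")


def normalize_messages(messages: list) -> list:
    """Apply normalize_content to every message without mutating the input."""
    return [{**m, "content": normalize_content(m["content"])} for m in messages]
```

Calling normalize_messages on the full history before forwarding, rather than only on the newest user message, matters here: the failure described above tends to show up on earlier assistant turns that were passed back as strings.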

Detailed Comparison of Claude Thinking Model API's Two Endpoint Formats

This is key to understanding the issue: the two endpoints have fundamentally different requirements for the content field.

Claude Thinking Model API Format Differences

| Comparison Dimension | /v1/chat/completions (OpenAI) | /v1/messages (Anthropic) |
|---|---|---|
| content Type | string or array | Must be array (list of content blocks) |
| thinking Return | Doesn't return detailed thinking process | Returns thinking type content blocks |
| signature Passing | Placed in provider_specific_fields | Directly in the signature field of the thinking block |
| Multi-turn Conversation | Plain text passing, no need to worry about thinking order | Assistant messages must start with a thinking block |
| thinking Enable Method | Model name suffix or parameters | thinking: {"type": "adaptive"} parameter |
| prompt caching | Not supported | Supported |
| Thinking Process Visibility | Not visible | Visible (summarized thinking) |

Claude Thinking Model API Request Format Comparison

OpenAI Format Call (Recommended for Proxy Scenarios):

    import openai

    client = openai.OpenAI(
        api_key="YOUR_API_KEY",
        base_url="https://vip.apiyi.com/v1"
    )

    response = client.chat.completions.create(
        model="claude-opus-4-6-thinking",  # Proxy platform alias
        messages=[
            {"role": "user", "content": "Analyze the business prospects of quantum computing"}
        ],
        max_tokens=16000
    )

    print(response.choices[0].message.content)

Anthropic Native Format Call:

    import anthropic

    client = anthropic.Anthropic(api_key="YOUR_API_KEY")

    response = client.messages.create(
        model="claude-opus-4-6",  # Official model name, no -thinking suffix
        max_tokens=16000,
        thinking={
            "type": "adaptive"  # Enable thinking via parameter
        },
        messages=[
            {"role": "user", "content": "Analyze the business prospects of quantum computing"}
        ]
    )

    # Response content is a list containing thinking blocks and text blocks
    for block in response.content:
        if block.type == "thinking":
            print(f"[Thinking Process] {block.thinking[:100]}...")
        elif block.type == "text":
            print(f"[Answer] {block.text}")

Key Differences:

- Model name is claude-opus-4-6 (no -thinking suffix)
- thinking is enabled via the thinking={"type": "adaptive"} parameter
- Response content is a list of content blocks, not a string
- In multi-turn conversations, the complete content list (including thinking blocks) must be passed back
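As a rough sketch of the last point, the helper below builds the message list for the next turn by appending the previous assistant content list unchanged. The name append_turn is hypothetical, and the sketch assumes the assistant blocks are already plain dicts (for example, after serializing the SDK's block objects).

```python
import copy


def append_turn(history: list, assistant_content: list, next_user_text: str) -> list:
    """Return a new message list with the assistant turn passed back unchanged."""
    new_history = list(history)
    # Pass back the full block list, thinking blocks and signatures included.
    new_history.append(
        {"role": "assistant", "content": copy.deepcopy(assistant_content)}
    )
    # The follow-up question goes in as a regular user turn.
    new_history.append(
        {"role": "user", "content": [{"type": "text", "text": next_user_text}]}
    )
    return new_history


history = [{"role": "user", "content": [{"type": "text", "text": "First question"}]}]
blocks = [
    {"type": "thinking", "thinking": "Let me analyze...", "signature": "CpcH..."},
    {"type": "text", "text": "Here's my answer..."},
]
next_messages = append_turn(history, blocks, "Follow-up question")
```

The resulting next_messages list is what would go into the messages argument of the next request; nothing in the assistant turn is rewritten, reordered, or dropped.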

🎯 Calling Advice: If you're calling Claude Thinking models through a proxy platform, prefer /v1/chat/completions (OpenAI format) for the best compatibility.

The OpenAI-compatible endpoint on the APIYI (apiyi.com) platform has been adapted for Thinking models and automatically handles thinking block conversions.

Why the OpenAI Format Works Better for Claude Thinking Model API

This is the most counterintuitive part: using the "non-native" OpenAI format to call the Claude Thinking model actually has better compatibility. There are three reasons:

Reason 1: Content Format Flexibility Differs

The OpenAI format allows content to be either a plain string "hello" or a content block array [{"type":"text","text":"hello"}]. The native Anthropic format models content as a content block array. The official API tolerates a plain string as shorthand for a single text block, but a proxy channel that validates content strictly as a list rejects a string with an error.

When client code passes content as a string (the usual pattern with the OpenAI SDK) and the proxy uses an OpenAI-format channel, the client and upstream formats match and no conversion is needed. If the request goes through an Anthropic-format channel instead, the string has to be converted first, and an incomplete conversion gets rejected.

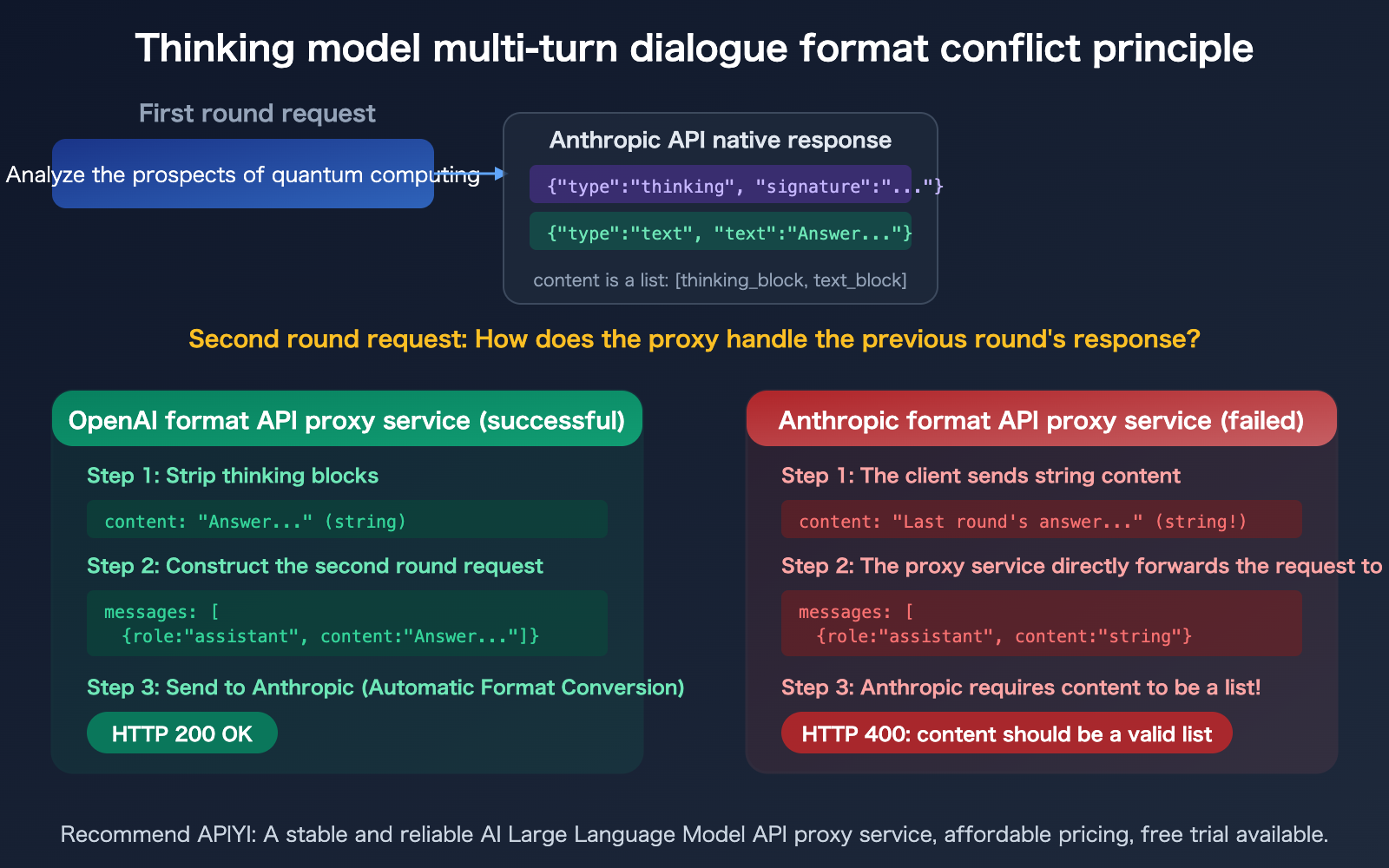

Reason 2: Automatic Stripping of Thinking Blocks

OpenAI-compatible mode automatically strips thinking blocks from Claude's response, returning only the final text. This means:

- Clients don't receive thinking blocks

- Thinking blocks don't need to be passed back in the next round of conversation

- There's no thinking block ordering issue

The native Anthropic format requires thinking blocks to be fully preserved in multi-turn conversations, and assistant messages must start with a thinking block. If the proxy doesn't handle this ordering requirement correctly, it will cause errors.
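To make the stripping step concrete, here is a minimal sketch of how a compatibility layer could collapse an Anthropic-style content list into the plain string an OpenAI-format client expects. It illustrates the idea only and is not the actual implementation of any platform; strip_thinking is a hypothetical name.

```python
def strip_thinking(content: list) -> str:
    """Join the text blocks of a content list, dropping thinking blocks."""
    return "".join(
        block["text"] for block in content if block.get("type") == "text"
    )


anthropic_style = [
    {"type": "thinking", "thinking": "Let me analyze...", "signature": "CpcH..."},
    {"type": "text", "text": "Here's my answer..."},
]
openai_style_message = {
    "role": "assistant",
    "content": strip_thinking(anthropic_style),
}
```

Once the message is reduced to a string, there is no block order left to get wrong, which is why the three points above hold for the OpenAI-format path.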

Reason 3: The signature Passing Problem

As the JSON example earlier shows, thinking blocks in the Anthropic format contain an encrypted signature (the signature field) that must be passed back exactly as received. The plain-string OpenAI flow sidesteps this entirely: the client never reads a signature from the message content and never has to pass one back.
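Before sending a multi-turn request on the native format, a small pre-flight check can catch the two problems discussed above: an assistant turn that does not start with a thinking block, and a thinking block whose signature went missing. The sketch below is a local sanity check under those assumptions, not a reproduction of the server-side validation; check_assistant_turn is a hypothetical name, and only the thinking block type shown in this article is considered.

```python
def check_assistant_turn(content) -> list:
    """Return a list of human-readable problems found in an assistant turn."""
    if not isinstance(content, list):
        return ["content is not a list"]
    problems = []
    # Ordering: the turn is expected to open with a thinking block.
    if not content or content[0].get("type") != "thinking":
        problems.append("first block is not a thinking block")
    # Signature: every thinking block should still carry its signature.
    for i, block in enumerate(content):
        if block.get("type") == "thinking" and not block.get("signature"):
            problems.append(f"thinking block {i} has no signature")
    return problems
```

An empty result means the turn passed both checks; anything else is worth logging before the request leaves the proxy, since the upstream error message alone rarely says which turn was malformed.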

🎯 Recommendation: When calling the Claude Thinking model through an API proxy, prefer the /v1/chat/completions format to avoid thinking block format compatibility issues.

APIYI's OpenAI-compatible endpoints at apiyi.com have been fully adapted for Thinking models.

Claude Thinking Model API Calling Solutions Comparison

Three Calling Solutions for Claude Thinking Model API

| Solution | Endpoint | Format Compatibility | Thinking Visible | Prompt Caching |
|---|---|---|---|---|
| OpenAI Format Proxy | /v1/chat/completions | Best (string content) | Not visible | Not supported |
| Anthropic Native Direct | /v1/messages | Requires strict format adherence | Visible | Supported |
| Anthropic Format Proxy | /v1/messages (proxy) | Depends on proxy implementation | Depends on proxy | Partially supported |

Claude Thinking Model API Model Name Differences

Different platforms use different naming conventions for Thinking models, which is a common source of confusion:

| Platform | Model Name | Thinking Enablement Method |
|---|---|---|
| Anthropic Official | claude-opus-4-6 | thinking: {"type": "adaptive"} parameter |
| API Proxy (e.g., APIYI) | claude-opus-4-6-thinking | Implicitly enabled via model name suffix |
| OpenRouter | anthropic/claude-opus-4.6 | Parameter-enabled |
| AWS Bedrock | anthropic.claude-opus-4-6-v1 | Parameter-enabled |

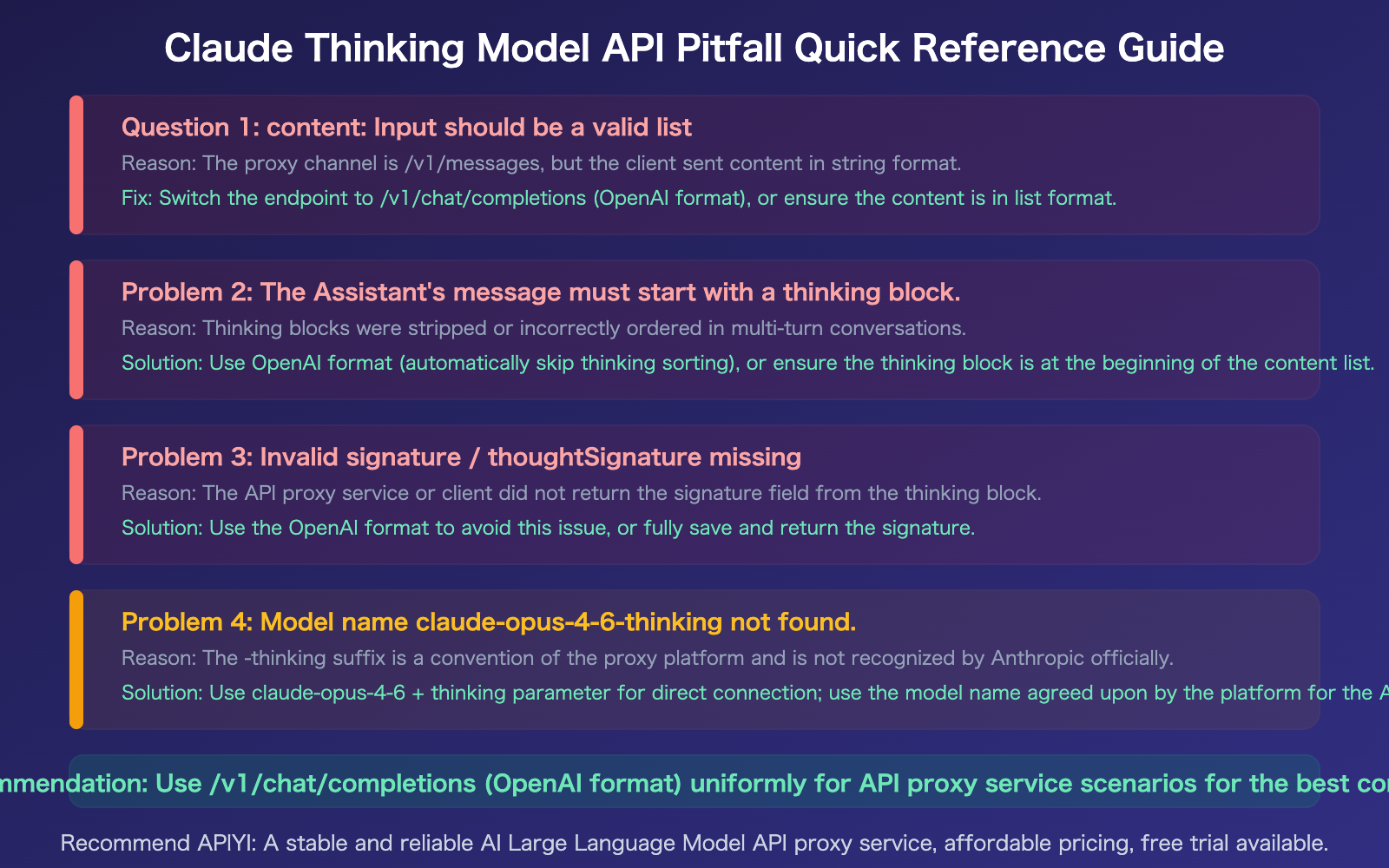

In the official Anthropic API, there's no claude-opus-4-6-thinking model name. The -thinking suffix is a naming convention used by proxy platforms, allowing users to directly enable thinking functionality through the model name without manually setting parameters.

Tip: If you use the claude-opus-4-6-thinking model name on APIYI at apiyi.com, the platform automatically adds the thinking: {"type": "adaptive"} parameter to your request. This means you can get thinking capabilities directly with the OpenAI SDK without modifying your code.
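The alias behavior described in this tip can be pictured with the sketch below: a request that names a model with the -thinking suffix is rewritten to the base model name plus a thinking parameter. How a given platform actually implements this is not documented here, so the function resolve_thinking_alias and its details are assumptions for illustration.

```python
THINKING_SUFFIX = "-thinking"


def resolve_thinking_alias(request: dict) -> dict:
    """Map a '-thinking' model alias to the base model plus a thinking parameter."""
    resolved = dict(request)  # shallow copy; the caller's dict is left untouched
    model = resolved["model"]
    if model.endswith(THINKING_SUFFIX):
        # Drop the suffix to recover the official model name.
        resolved["model"] = model[: -len(THINKING_SUFFIX)]
        # Only inject the parameter if the client did not set one explicitly.
        resolved.setdefault("thinking", {"type": "adaptive"})
    return resolved


upstream_request = resolve_thinking_alias(
    {"model": "claude-opus-4-6-thinking", "max_tokens": 16000}
)
```

Using setdefault keeps an explicit thinking setting from the client intact, which seems the least surprising choice if both the alias and the parameter are present.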

Common Pitfalls and Solutions for Claude Thinking Model API

Frequently Asked Questions

Q1: If I use the OpenAI format to call the Thinking model, will it lose its thinking ability?

No. The model's thinking process happens on Anthropic's servers and isn't affected by the calling endpoint format. When you use the OpenAI format, the model still performs its full reasoning process—you just don't get the text summary of that thinking process returned to your client. The quality and depth of the final answer remain the same—you're getting the "thought-out answer," just not the "text transcript of the thinking."

Q2: When must I use the native /v1/messages format?

There are two scenarios where you need the native format: 1) You need to see the model's thinking process (summarized thinking) for debugging, educational purposes, or to showcase the reasoning chain; 2) You need to use prompt caching to reduce costs—this feature is only available on the /v1/messages endpoint. If you don't have either of these requirements, using the OpenAI format is simpler. The easiest way is to call it through APIYI's apiyi.com OpenAI-compatible endpoint.

Q3: How do I resolve compatibility issues when the APIYI backend channel is configured as /v1/messages?

Two solutions: 1) Switch the channel to OpenAI type (/v1/chat/completions) to completely avoid format conversion issues; 2) If you must use the /v1/messages channel, ensure your proxy layer correctly converts the client's string content to list format and properly handles the ordering of thinking blocks and signature passing in multi-turn conversations. Option 1 is simpler and more reliable.

Q4: What’s the difference between adaptive thinking and the old extended thinking?

For Opus 4.6, it's recommended to use thinking: {"type": "adaptive"} (adaptive thinking), where the model automatically decides whether to think and how deeply based on the problem's complexity. The old thinking: {"type": "enabled", "budget_tokens": N} is deprecated for Opus 4.6 and Sonnet 4.6. The new version also adds an effort parameter (low/medium/high/max) to control thinking depth, with high being the default.

Summary

The key points about Claude Thinking model API compatibility issues:

- Root cause is a content format mismatch: a /v1/messages channel expects content as a list of content blocks, while the OpenAI format allows strings. If an API proxy service uses /v1/messages and forwards the client's string without converting it, the request fails with Input should be a valid list
- OpenAI format has better compatibility: it automatically strips thinking blocks, doesn't require passing back the signature, and allows content to be a string, which makes it the preferred choice for proxy scenarios
- The -thinking suffix is a proxy convention, not an official model name: the official model name is claude-opus-4-6, and thinking is enabled via parameters

When calling Claude Thinking models through an API proxy, the simplest solution is to consistently use the OpenAI-compatible format.

We recommend calling through APIYI at apiyi.com—the platform has already optimized format compatibility for Thinking models, offering free credits and a unified interface for multiple models.


Author: APIYI Technical Team
