The comparison between GPT-5.5 and Claude Opus 4.7 is one of the most significant topics for developers in the first half of 2026.

Neither of these is just a simple chat model.

GPT-5.5 leans heavily into agentic coding, computer use, knowledge work, and scientific analysis.

Claude Opus 4.7, on the other hand, prioritizes complex reasoning, long-term agentic tasks, high-resolution vision, memory capabilities, and stricter instruction following.

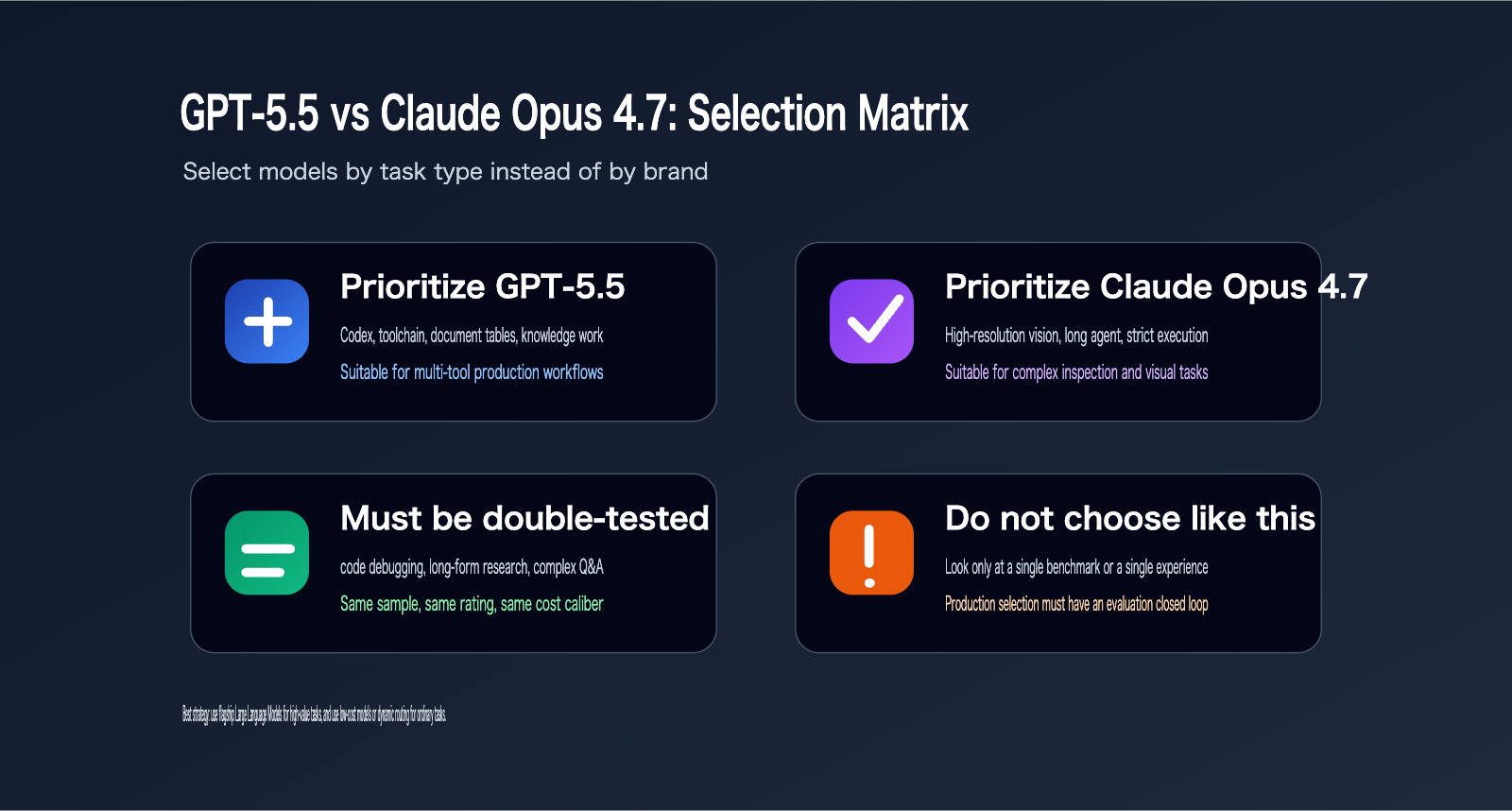

Asking "which one is better" is too simplistic.

A more practical approach is to ask: Is your task focused on code repair, knowledge base Q&A, long-context analysis, visual understanding, automated agents, or high-cost production model invocation?

Your choice between GPT-5.5 and Claude Opus 4.7 will vary significantly depending on the task.

When OpenAI officially released GPT-5.5, they included Claude Opus 4.7 directly in their comparative evaluation tables.

Anthropic has also positioned Claude Opus 4.7 as its most powerful generally available model, highlighting its improvements in agentic coding, knowledge work, visual tasks, and memory.

This article is based on official English-language documentation and does not rely on secondary Chinese sources.

It is important to note that "Claude 4.7" in this article refers specifically to Claude Opus 4.7. As of the time of writing, official Anthropic documentation does not indicate that a Claude Sonnet 4.7 has been released.

Core Conclusions: GPT-5.5 vs Claude Opus 4.7

The primary difference between GPT-5.5 and Claude Opus 4.7 lies in their positioning.

OpenAI defines GPT-5.5 as a model better suited for real-world workflows. It emphasizes coding, debugging, online research, data analysis, document and spreadsheet generation, and cross-tool task completion.

Anthropic defines Claude Opus 4.7 as its most powerful generally available model. It emphasizes complex reasoning, agentic coding, long-range tasks, visual understanding, memory, and self-correction.

If your tasks involve complex engineering projects in Codex, cross-file modifications, tool invocation, and knowledge work, GPT-5.5 is often worth prioritizing for testing.

If your tasks involve long-running Claude Code agentic sessions, visual screenshot understanding, document layout verification, file system memory, and strict instruction following, Claude Opus 4.7 is worth prioritizing.

If you need to integrate both types of models, we recommend using an API proxy service like APIYI (apiyi.com) for multi-model routing and evaluation, which helps avoid hardcoding model choices into your business logic.

Quick Comparison: GPT-5.5 vs Claude Opus 4.7

| Dimension | GPT-5.5 | Claude Opus 4.7 | Recommendation |

|---|---|---|---|

| Official Positioning | Real-world workflows & agentic AI | Most powerful general Claude model | Choose based on task type |

| Coding Ability | Strong performance in Terminal-Bench 2.0 | Significant improvement in agentic coding | Both should be tested |

| Long Context | 1M context via API | 1M context window | Both are suitable |

| Visual Ability | Multimodal & tool collaboration | High-resolution image support | Prefer Claude for heavy visual tasks |

| Reasoning Control | reasoning_effort | effort / adaptive thinking | Different parameter systems |

| API Cost | $5 input / $30 output per million tokens | $5 input / $25 output per million tokens | Claude has lower output costs |

| Ecosystem Entry | ChatGPT, Codex, API | Claude, Claude Code, API | Depends on workflow |

Recommendation: If you're unsure which model is better for your needs, we suggest preparing 30-50 real-world business samples and running them through both models using APIYI (apiyi.com). Compare them based on success rate, response time, cost, and human evaluation.

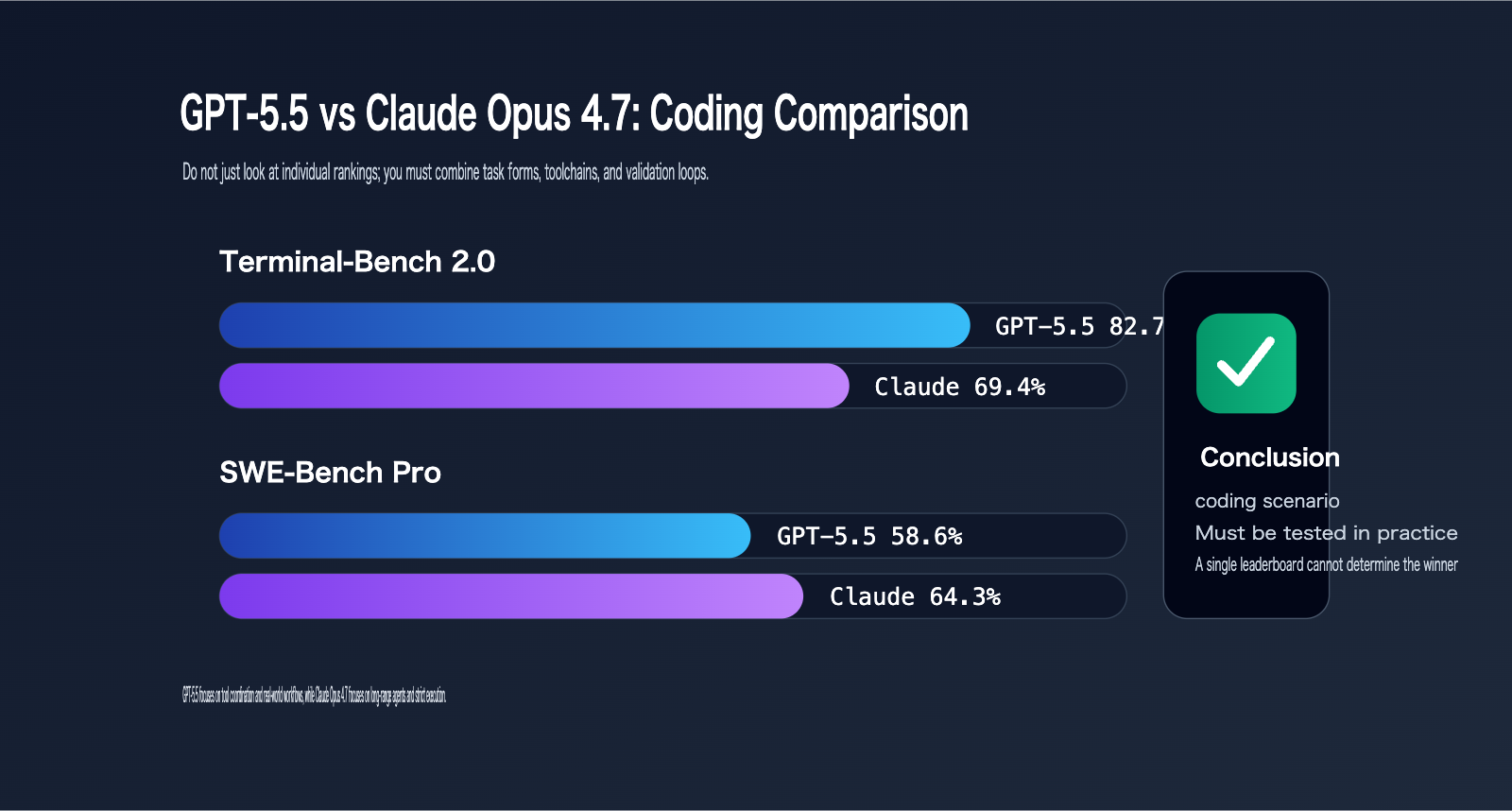

GPT-5.5 vs. Claude Opus 4.7 Coding Capability Comparison

Coding is the core scenario for comparing GPT-5.5 and Claude Opus 4.7.

According to official OpenAI data, GPT-5.5 achieves 82.7% on Terminal-Bench 2.0. In the same benchmark, Claude Opus 4.7 scores 69.4%. However, on the public SWE-Bench Pro evaluation, GPT-5.5 scores 58.6%, while Claude Opus 4.7 reaches 64.3%.

This shows that neither model is a unilateral winner.

GPT-5.5 excels in complex command-line workflows, planning, iteration, and tool coordination. Claude Opus 4.7, on the other hand, is highly competitive in tasks involving GitHub issue resolution. Anthropic’s official materials also highlight that Claude Opus 4.7 shows a 13% improvement over Opus 4.6 on their 93-task coding benchmark, confirming a clear generational leap in coding performance.

However, when comparing GPT-5.5 and Claude Opus 4.7, you shouldn't rely on a single benchmark as the final verdict. Real-world coding involves reading legacy code, identifying risks, controlling the scope of changes, completing tests, running commands, handling failures, explaining changes, and generating review notes.

GPT-5.5 emphasizes cross-tool execution and completing tasks with fewer tokens in Codex scenarios. Claude Opus 4.7 focuses on long-range agentic workflows, "high effort" processing, and stricter instruction following in Claude Code scenarios.

Coding Scenario Recommendations: GPT-5.5 vs. Claude Opus 4.7

| Coding Task | Recommended Priority | Reason |

|---|---|---|

| Complex CLI Workflows | GPT-5.5 | Higher official Terminal-Bench 2.0 score |

| GitHub Issue Fixing | Test both | Claude leads on SWE-Bench Pro; GPT-5.5 has a stronger ecosystem |

| Large Codebase Understanding | GPT-5.5 | Codex scenarios emphasize cross-system context |

| Long-running Agentic Tasks | Claude Opus 4.7 | Better alignment with "high effort" and task budgets |

| Code Review & Validation | Both suitable | Focus on the test feedback loop |

| Cost-sensitive Batch Fixing | Needs testing | Significant differences in token usage patterns |

Selection Tip: Don't just look at the leaderboards. We recommend taking your real issues, failed tests, PR reviews, and refactoring tasks to APIYI for comparative evaluation. Track whether each model actually runs the tests, avoids modifying unrelated files, and correctly identifies potential risks.

GPT-5.5 vs. Claude Opus 4.7 Knowledge Work and Research

Comparing GPT-5.5 and Claude Opus 4.7 for knowledge work is also critical.

OpenAI’s official materials show GPT-5.5 reaching 84.9% on GDPval, while Claude Opus 4.7 scores 80.3% in the same table. GPT-5.5 Pro scores 82.3%. This indicates that GPT-5.5 is exceptionally strong in the professional knowledge tasks evaluated by OpenAI. OpenAI also highlights significant improvements in GPT-5.5 regarding document generation, spreadsheets, presentations, and handling operational research and business inputs.

On the Anthropic side, official documentation for Claude Opus 4.7 emphasizes its strengths in knowledge work, memory, vision, and long-horizon agentic work. A key feature of Claude Opus 4.7 is its superior "data discipline." Anthropic cites feedback from Hex, noting that the model is more willing to acknowledge missing data rather than providing plausible-sounding but incorrect alternatives. This is crucial for financial analysis, research reports, compliance reviews, and data table processing.

If your knowledge work requires the model to produce polished, complete, and well-structured business documents, GPT-5.5 is definitely worth testing. If your tasks require the model to remain cautious in the face of missing or conflicting data and long context windows, Claude Opus 4.7 is highly competitive.

Knowledge Work Selection: GPT-5.5 vs. Claude Opus 4.7

| Scenario | GPT-5.5 Advantage | Claude Opus 4.7 Advantage | Recommendation |

|---|---|---|---|

| Business Reports | Strong structured generation | Strong data discipline | Compare both |

| Table Analysis | Strong Codex document/table capability | Strong visual verification/chart analysis | Depends on input format |

| Financial Research | Strong GDPval performance | Improved General Finance module | Test with real samples |

| Compliance Review | Strong comprehensive capability | Cautious handling of missing data | Prioritize Claude |

| Multi-doc Summarization | Strong long context | Strong memory and strict instruction following | Choose based on citation quality |

Selection Tip: The biggest risk in knowledge work is "looking complete but containing hallucinations." When comparing GPT-5.5 and Claude Opus 4.7 on APIYI, we recommend breaking down human evaluations into five dimensions: factual accuracy, citation consistency, omission rate, structural quality, and actionability.

GPT-5.5 vs. Claude Opus 4.7: Vision and Long Context Capabilities

Both GPT-5.5 and Claude Opus 4.7 support long context, but they handle the details differently.

According to official OpenAI materials, the GPT-5.5 API features a 1M context window.

Anthropic’s model overview shows that Claude Opus 4.7 also supports a 1M token context window, along with a 128k max output.

In long-context tasks, both models have entered the range where they can effectively process large documents, codebases, and complex data packages.

However, when it comes to vision tasks, the changes in Claude Opus 4.7 are more pronounced.

Anthropic’s documentation indicates that Claude Opus 4.7 is the first Claude model to support high-resolution images, with the maximum image resolution increased to 2576px / 3.75MP.

This is a big deal for screenshot understanding, document imaging, slide verification, chart analysis, and computer use.

Anthropic also mentioned that image coordinates now map 1:1 to real pixels, which reduces the need for coordinate scaling conversions.

GPT-5.5 also boasts strong multimodal and computer use capabilities, but if your input primarily consists of high-resolution screenshots, charts, document layouts, or UI coordinates, Claude Opus 4.7 is worth prioritizing for testing.

If your input is primarily long text, codebases, business documents, structured data, or toolchain outputs, you should evaluate both GPT-5.5 and Claude Opus 4.7 using the same set of samples.

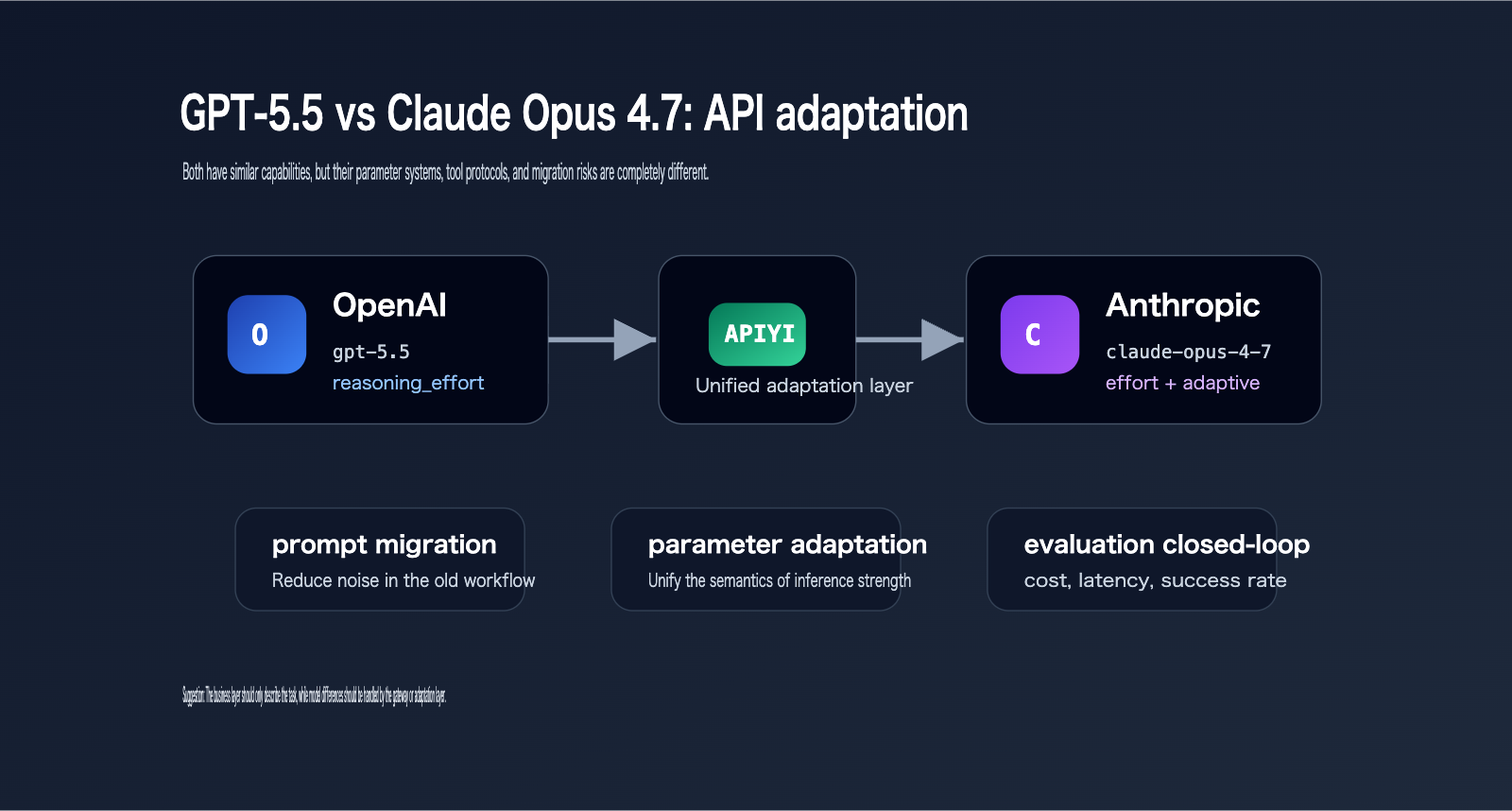

GPT-5.5 vs. Claude Opus 4.7: API Parameters and Migration Differences

The API migration differences between GPT-5.5 and Claude Opus 4.7 are significant.

GPT-5.5 belongs to the OpenAI model ecosystem, where key parameters include model, reasoning_effort, Responses API tool calls, and output format control.

Claude Opus 4.7 belongs to the Anthropic Messages API ecosystem, where key parameters include adaptive thinking, effort, task budget, max_tokens, and tool calls.

Anthropic’s official documentation shows that Claude Opus 4.7 has removed extended thinking budgets.

The old syntax thinking: {"type": "enabled", "budget_tokens": N} will now return a 400 error.

The new syntax should use thinking: {"type": "adaptive"}, and you should set the effort via output_config.

Anthropic also clarified that starting with Claude Opus 4.7, setting non-default temperature, top_p, or top_k values will return a 400 error.

This is a critical migration point for many legacy projects.

If you previously relied on temperature=0 for deterministic output, you'll need to recalibrate your expectations: temperature=0 never guaranteed perfect consistency anyway.

In contrast, the migration focus for GPT-5.5 is more centered on prompt reconstruction, reasoning_effort evaluation, tool workflows, and result-oriented prompts.

GPT-5.5 vs. Claude Opus 4.7 API Migration Highlights

| Migration Item | GPT-5.5 | Claude Opus 4.7 |

|---|---|---|

| Model ID | gpt-5.5 |

claude-opus-4-7 |

| Reasoning Control | reasoning_effort |

effort + adaptive thinking |

| Long Context | 1M context window | 1M context window |

| Output Limit | Per OpenAI API specs | 128k max output |

| Temperature | Configured per OpenAI API | Non-default temperature/top_p/top_k will error |

| Tool Workflow | Responses API tool system | Messages API tool system |

| Migration Risk | Over-specified legacy prompts | Thinking budget and legacy sampling params |

Recommendation: If you need to integrate both GPT-5.5 and Claude Opus 4.7, it's not recommended to write two separate, fragmented logic flows in your business code. You can use APIYI (apiyi.com) as a unified OpenAI-compatible entry point, and then manage model differences, parameter variations, and error handling at the gateway or adapter layer.

GPT-5.5 vs. Claude Opus 4.7: Cost and Performance Trade-offs

When comparing the costs of GPT-5.5 and Claude Opus 4.7, you can't just look at the unit price.

According to official OpenAI documentation, the GPT-5.5 API is priced at $5 per million input tokens and $30 per million output tokens.

Anthropic's model overview shows that Claude Opus 4.7 is priced at $5 per million input tokens and $25 per million output tokens.

Looking strictly at output pricing, Claude Opus 4.7 is cheaper.

However, OpenAI emphasizes that GPT-5.5 is more token efficient in Codex than GPT-5.4.

Anthropic also highlights that Claude Opus 4.7 manages costs through effort, task budget, and adaptive thinking.

Therefore, the actual cost depends on the nature of the task.

If GPT-5.5 completes a task in fewer rounds, its total cost might not be higher.

Conversely, if Claude Opus 4.7 consumes a large number of output tokens under xhigh or max effort settings, its total cost could also rise.

Cost evaluation should focus on the "total cost to complete a qualified task," rather than just the price per million tokens.

GPT-5.5 vs. Claude Opus 4.7 Cost Evaluation Dimensions

| Cost Dimension | What to Track | Why It Matters |

|---|---|---|

| Input tokens | Prompts, context, tool results | Significant cost differences in long-context tasks |

| Output tokens | Final answers, tool parameters, reasoning output | Output pricing is usually more expensive |

| Rounds | How many turns to complete a task | Multiple rounds amplify costs |

| Success rate | First-try success vs. iterative correction | Failed retries are hidden costs |

| Latency | User wait time | High effort settings increase wait times |

| Human review | Need for human correction | Poor quality shifts costs to human labor |

Recommendation: For enterprise applications, model cost optimization isn't just about picking the cheapest model. We recommend using APIYI (apiyi.com) to log the input, output, latency, model, parameters, and human ratings for every model invocation, using "qualified task cost" as your ultimate metric.

GPT-5.5 vs. Claude Opus 4.7: Scenario-Based Decision Making

If you're an individual developer, you can choose between GPT-5.5 and Claude Opus 4.7 based on your tool ecosystem.

If you frequently use Codex, test GPT-5.5 first.

If you frequently use Claude Code, test Claude Opus 4.7 first.

If you're an enterprise technical lead, don't make decisions based on personal experience alone.

You should build a task set and compare both models using the same inputs, outputs, scoring, and cost tracking.

For content teams, GPT-5.5 is worth prioritizing for structured content, research synthesis, tables, and multi-tool workflows.

Claude Opus 4.7 is worth prioritizing for cautious expression, long-context tasks, visual materials, and document verification.

If you're building an API platform or SaaS product, we recommend implementing model routing.

For example, route simple Q&A to a lower-cost model, and escalate to GPT-5.5 or Claude Opus 4.7 only for complex coding or long-running agent tasks.

This prevents every single request from hitting your flagship models.

Migration Checklist: GPT-5.5 vs. Claude Opus 4.7

Don't just rely on a single subjective test before going live.

I recommend preparing at least five categories of samples:

- Success Samples: Cases that already work well.

- Edge Cases: Scenarios prone to misinterpretation.

- Long-Context Samples: Tests for large context window handling.

- Tool-Calling Samples: Tests for function/tool invocation accuracy.

- Failure Recovery Samples: Tests for how the model handles errors or corrections.

For every sample, make sure to log the model, parameters, input tokens, output tokens, latency, success status, and human ratings.

Also, be sure to test both low-cost and high-capability tiers.

For GPT-5.5, you can test different reasoning_effort settings.

For Claude Opus 4.7, you can test medium, high, xhigh, and max effort levels.

Don't just default to the highest configuration for both models. The highest setting only shows you the performance ceiling, not the production cost-effectiveness.

How to Interpret GPT-5.5 vs. Claude Opus 4.7 Evaluation Data?

Public benchmarks for GPT-5.5 and Claude Opus 4.7 are useful references, but they aren't a direct substitute for your own business results.

The reason is simple: public evaluations usually feature fixed task sets, fixed prompts, fixed runtime environments, and fixed scoring rules.

Your business system, however, will encounter dirty data, missing context, inconsistent user expressions, tool failures, permission restrictions, and legacy prompt baggage.

Therefore, seeing GPT-5.5 lead on a specific benchmark doesn't mean you should switch all tasks to GPT-5.5.

Similarly, seeing Claude Opus 4.7 lead on another benchmark doesn't mean you should switch everything to Claude.

A safer approach is to treat official benchmarks as indicators of a model's capability direction.

For example, Terminal-Bench 2.0 is a better indicator of complex command-line workflow capabilities.

SWE-Bench Pro is closer to real-world GitHub issue resolution.

GDPval is closer to professional knowledge delivery capabilities.

Vision benchmarks and high-resolution image support are better for judging tasks related to screenshots, charts, UI, and document layout.

When deploying, you need to map these dimensions to your own product scenarios.

- If your product is an IDE coding assistant, prioritize code fix success rates, test pass rates, irrelevant change rates, and explanation quality.

- If your product is an enterprise knowledge base, prioritize citation accuracy, factual omission rates, conflict handling, and refusal boundaries.

- If your product is an automation agent, prioritize tool invocation counts, failure recovery, task completion rates, and total costs.

- If your product is for visual document processing, prioritize coordinate recognition, chart transcription, layout understanding, and human correction costs.

The value of APIYI (apiyi.com) lies in its ability to execute these model tests under a unified interface.

With the same inputs, the same scoring dimensions, and the same log fields, you can finally make your conclusions about GPT-5.5 vs. Claude Opus 4.7 truly reusable.

GPT-5.5 vs Claude Opus 4.7 FAQ

Which is better for coding: GPT-5.5 or Claude Opus 4.7?

Both are excellent choices.

GPT-5.5 performs better on Terminal-Bench 2.0, making it well-suited for complex command-line processes and Codex workflows.

Claude Opus 4.7 shows strong performance on SWE-Bench Pro and is also a great fit for long-running agentic tasks via Claude Code.

For real-world projects, we recommend running A/B tests using the same set of issues and test commands.

Which is better for knowledge base Q&A: GPT-5.5 or Claude Opus 4.7?

If your focus is on structured generation and multi-tool organization, prioritize testing GPT-5.5.

If your focus is on identifying missing data, cautious expression, and maintaining strict discipline over a long context window, prioritize testing Claude Opus 4.7.

Ultimately, your choice should depend on citation accuracy and the cost of human review.

Which is better for visual tasks: GPT-5.5 or Claude Opus 4.7?

Claude Opus 4.7 explicitly includes high-resolution image support in its official documentation.

If your task involves screenshots, coordinate detection, document layout analysis, or visual verification, Claude Opus 4.7 is worth testing first.

GPT-5.5 is also suitable for multimodal workflows, but heavy visual tasks should be evaluated separately.

Which is more cost-effective: GPT-5.5 or Claude Opus 4.7?

Based on official pricing, both models cost $5 per million tokens for input.

For output, Claude Opus 4.7 costs $25 per million tokens, while GPT-5.5 costs $30 per million tokens.

However, the actual cost depends on the number of turns required to complete a task, output length, failure rates, and the cost of human intervention.

GPT-5.5 vs Claude Opus 4.7 Summary

There is no one-size-fits-all answer when choosing between GPT-5.5 and Claude Opus 4.7.

GPT-5.5 is better suited for multi-tool production workflows, Codex coding, document/table generation, knowledge work, and complex task execution.

Claude Opus 4.7 is better suited for high-resolution visual tasks, long-range agentic tasks, strict instruction following, file memory, and cautious data processing.

If you're an individual user, choose based on the ecosystem of tools you already use.

If you're an enterprise user, you must evaluate them using real-world samples.

If you're an API developer, we recommend managing model differences within an abstraction layer rather than hard-coding GPT-5.5 or Claude Opus 4.7 into your business logic.

APIYI (apiyi.com) is perfect for serving as a unified model gateway, handling model invocation logs, cost monitoring, and seamless model switching.

Our final recommendation: Use GPT-5.5 for high-complexity, multi-tool tasks; use Claude Opus 4.7 for high-precision visual and long-agentic tasks; use lower-cost models for standard requests; and continuously adjust your routing based on evaluation data.

References:

- OpenAI Introducing GPT-5.5: openai.com/index/introducing-gpt-5-5

- Anthropic Introducing Claude Opus 4.7: anthropic.com/news/claude-opus-4-7

- Anthropic Claude Opus 4.7 API docs: platform.claude.com/docs/en/about-claude/models/whats-new-claude-4-7

- Anthropic Models overview: platform.claude.com/docs/en/about-claude/models/overview

- Anthropic Effort docs: platform.claude.com/docs/en/build-with-claude/effort