On April 21, 2026, Google launched two next-generation autonomous research agents in public preview on the Gemini API: Deep Research and Deep Research Max. Both are powered by Gemini 3.1 Pro, which was released in February 2026. The launch marks a critical step in Google's strategy to move "long-horizon autonomous research" from consumer-facing products to developer APIs. Notably, Deep Research Max scores 93.3% on DeepSearchQA, and its underlying model, Gemini 3.1 Pro, reaches 77.1% on ARC-AGI-2, more than double the score of Gemini 3 Pro.

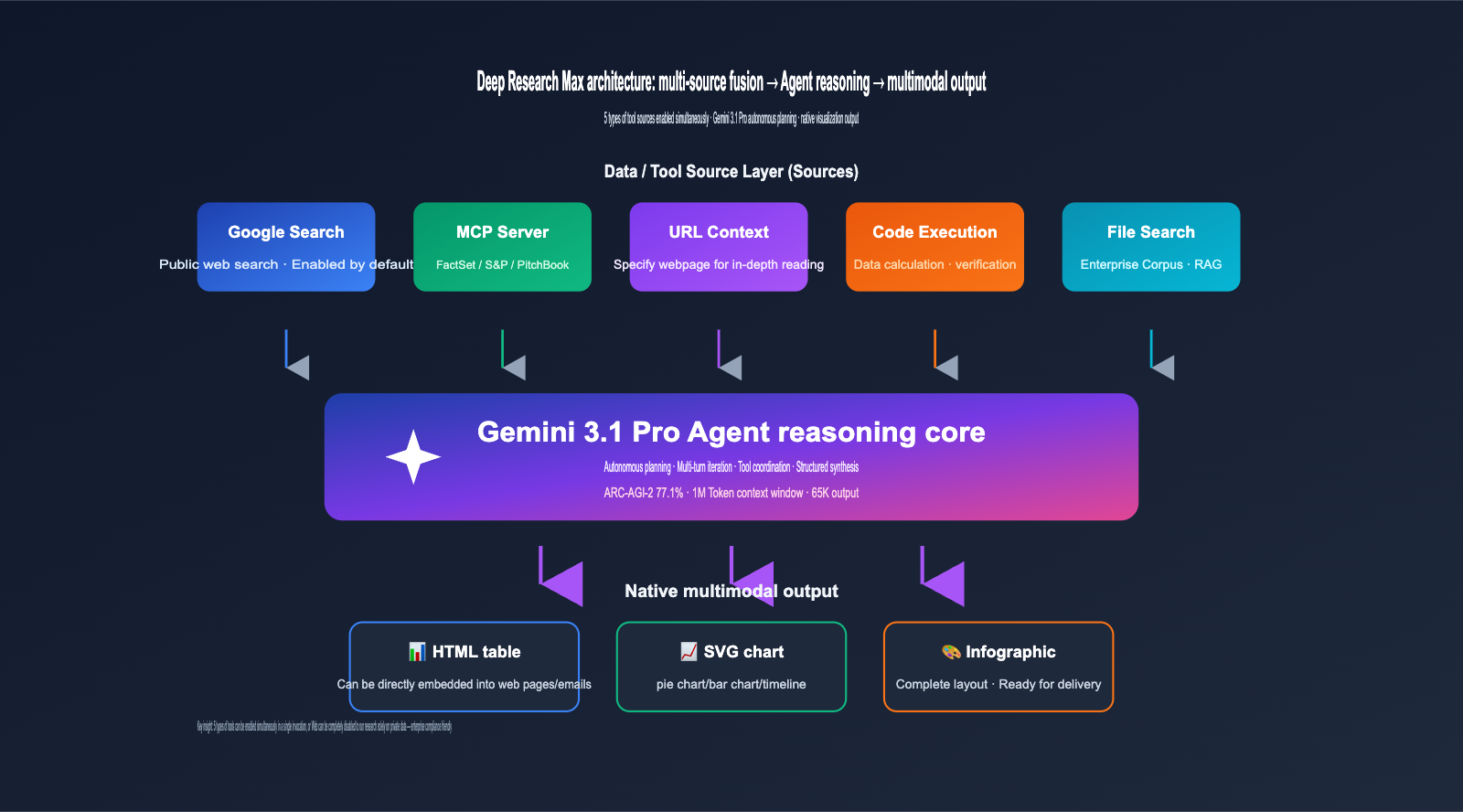

More importantly, this generation of Deep Research introduces three engineering-grade features: MCP (Model Context Protocol) integration for any private data source, native visual output (HTML tables / SVG charts / infographics), and cross-source fusion of web and private data. This means that for the first time, developers can use a single API call to have an agent simultaneously retrieve information from the public web, internal corporate networks, and third-party professional databases, ultimately generating visual reports ready for dashboard embedding.

This article, based on official Google releases and Gemini API documentation, breaks down the four core breakthroughs of Deep Research Max, the differences between it and the standard version, the real-world significance of the 77.1% ARC-AGI-2 score, and how developers in mainland China can integrate these capabilities.

1. What is Deep Research Max: An Autonomous Research Agent Powered by Gemini 3.1 Pro

Deep Research isn't a new concept; Google opened a basic version to consumers in the Gemini app back in late 2024, allowing AI to run web searches and write reports with citations for you. However, the consumer version had limited functionality, no public API access, and no way to connect to private data, which limited its value for industrial applications.

This release of Deep Research / Deep Research Max is an architectural rewrite. Google defines it as a "next-generation autonomous research agent"—capable of autonomously planning, executing, and synthesizing multi-step research tasks across various tool sources like the web, MCP servers, URL context, code execution, and file retrieval, ultimately producing structured reports with citations. Both versions were launched simultaneously to cater to different engineering needs.

| Comparison Dimension | Deep Research (Standard) | Deep Research Max |
|---|---|---|
| Optimization Goal | Speed and Latency | Comprehensiveness and Depth |
| Reasoning Duration | Short (Seconds to Minutes) | Long (Minutes to Hours) |
| Test-time Compute | Standard | Extended |
| Multi-turn Iteration | 1-2 turns | Multi-turn deep reasoning |
| Invocation Mode | Synchronous / Real-time | Asynchronous / Background |
| Cost | Lower | Higher |
| Typical Use Case | Conversational research assistant, customer service | Investment research, industry analysis, due diligence |

Google explicitly stated in its official blog: Deep Research Max is designed for workflows that can accept asynchronous waiting and prioritize the highest-quality, most comprehensive output. If you're handling corporate due diligence, deep industry research, or automated long-form report generation, Max is the better choice. If you're building an AI assistant for real-time, consumer-facing interaction, the lower latency of the standard version is the better fit.
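For the asynchronous pattern described above, the caller typically submits a task and then polls until it finishes. The sketch below shows only the polling loop with capped exponential backoff. `fetch_status` is a hypothetical callable standing in for whatever status check your client or proxy exposes, and the status strings are assumptions for illustration, not documented Gemini API values.

```python
import time
from typing import Callable


def wait_for_report(
    fetch_status: Callable[[], dict],
    initial_delay: float = 1.0,
    max_delay: float = 60.0,
    timeout: float = 3600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll a long-running research task until it completes.

    fetch_status is a hypothetical callable returning a dict such as
    {"status": "running"} or {"status": "done", "report": "..."}.
    """
    waited = 0.0
    delay = initial_delay
    while waited <= timeout:
        state = fetch_status()
        if state["status"] == "done":
            return state["report"]
        if state["status"] == "failed":
            raise RuntimeError(state.get("error", "research task failed"))
        sleep(delay)
        waited += delay
        delay = min(delay * 2, max_delay)  # exponential backoff, capped
    raise TimeoutError(f"no result after {timeout:.0f}s")


# Usage with a fake status source that finishes on the third poll.
_responses = iter([
    {"status": "running"},
    {"status": "running"},
    {"status": "done", "report": "<h1>Report</h1>"},
])
report = wait_for_report(lambda: next(_responses), sleep=lambda s: None)
```

Injecting `sleep` keeps the loop testable without real waiting; in production you would leave the default in place.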

💡 Integration Advice: Both Deep Research and Max are available through the paid tier of the Gemini API. Developers in mainland China can access the Gemini 3.1 Pro series interfaces directly via APIYI (apiyi.com). The platform has unified these into an OpenAI-compatible protocol, helping you bypass cross-border network issues and account registration hurdles.

2. The 4 Core Breakthroughs of Deep Research Max

The most noteworthy aspect of this release is the four leapfrog upgrades in engineering capabilities. Together, they transform Deep Research Max into a true "enterprise-grade autonomous research agent."

2.1 First Breakthrough: Native MCP Protocol Support for Third-Party Data Integration

The Model Context Protocol (MCP) is an open standard introduced by Anthropic, designed to let AI agents connect to arbitrary external tools and data sources in a unified way. Deep Research Max is the first product in the Google ecosystem to integrate MCP as a first-class citizen. Developers simply need to wrap private or third-party data in an MCP server, and the agent can query it just like a native tool.

Google also disclosed its first batch of MCP partners at the launch: financial data giants FactSet, S&P Global, and PitchBook are all working with Google to design MCP servers, allowing mutual customers to plug these professional financial data streams directly into their Deep Research workflows. This means research agents in fields like finance, law, and healthcare finally have a standardized access path, eliminating the need to rewrite adaptation layers for every single data source.
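To make "wrapping data in an MCP server" more concrete, here is a minimal sketch of the message shapes involved. MCP is built on JSON-RPC 2.0, and tools are discovered with `tools/list` and invoked with `tools/call`. The `lookup_account` tool and the in-memory `ACCOUNTS` data are hypothetical, and the sketch omits the initialization handshake and the transport layer, so a real server should be built on an official MCP SDK rather than on this dispatcher.

```python
import json

# Hypothetical in-memory data standing in for a CRM or internal database.
ACCOUNTS = {"acme": {"segment": "enterprise", "churned": False}}

TOOLS = [{
    "name": "lookup_account",  # hypothetical tool name
    "description": "Return CRM fields for an account id.",
    "inputSchema": {
        "type": "object",
        "properties": {"account_id": {"type": "string"}},
        "required": ["account_id"],
    },
}]


def handle(request: dict) -> dict:
    """Answer one MCP-style JSON-RPC 2.0 request (tools/list or tools/call)."""
    method, req_id = request.get("method"), request.get("id")
    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call" and request["params"]["name"] == "lookup_account":
        account_id = request["params"]["arguments"]["account_id"]
        record = ACCOUNTS.get(account_id)
        result = {
            "content": [{"type": "text", "text": json.dumps(record)}],
            "isError": record is None,
        }
    else:
        # Standard JSON-RPC error code for an unknown method.
        return {"jsonrpc": "2.0", "id": req_id,
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": req_id, "result": result}


reply = handle({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "lookup_account", "arguments": {"account_id": "acme"}},
})
```

The agent only sees the tool's name, description, and input schema, which is why one server can front many different back-end systems.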

2.2 Second Breakthrough: Native Visual Output, Beyond Plain Text Reports

Traditional LLM outputs are generally limited to markdown text; adding charts usually requires an extra call to a plotting API or a Code Interpreter. Deep Research Max, however, natively generates HTML tables, SVG charts, and infographics during the reasoning process. These visual artifacts are an organic part of the agent's reasoning flow, not an afterthought.

Actual output formats include: structured HTML tables (embeddable directly into web pages), scalable SVG charts (pie charts, bar charts, timelines, etc.), and fully laid-out infographics (perfect for emails or Slack). If a project integrates high-quality image models like Nano Banana, Deep Research can even call them to generate more complex visual assets. This shift upgrades Deep Research's output from "long markdown with citations" to "multimodal reports ready for dashboards."
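If the report arrives as text with inline SVG, embedding it in a dashboard mostly comes down to separating the charts from the prose. The sketch below assumes the charts appear as literal `<svg>...</svg>` blocks inside the response text, which is an assumption about the output shape rather than documented behavior. The function names are hypothetical.

```python
import html
import re

# Matches each inline <svg>...</svg> block, including multi-line ones.
SVG_BLOCK = re.compile(r"<svg\b.*?</svg>", re.DOTALL | re.IGNORECASE)


def split_report(report: str) -> tuple[str, list[str]]:
    """Separate inline <svg> charts from the rest of a report string."""
    charts = SVG_BLOCK.findall(report)
    text = SVG_BLOCK.sub("", report).strip()
    return text, charts


def to_dashboard_card(chart_svg: str, title: str) -> str:
    """Wrap one SVG chart in a minimal HTML card; the title is escaped."""
    return f'<section class="card"><h2>{html.escape(title)}</h2>{chart_svg}</section>'


sample = 'Market share by vendor.\n<svg width="10" height="10"><rect/></svg>'
body, charts = split_report(sample)
card = to_dashboard_card(charts[0], "Share & growth")
```

Model-generated markup should still be sanitized before it is served inside a page that carries user sessions.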

2.3 Third Breakthrough: Cross-Source Integration of Web and Private Data

Previous versions of Deep Research were limited to public web searches, failing to address the biggest pain point for enterprise users: integrating private information like SaaS documents, CRM data, and ERP reports into their research. The new version allows you to simultaneously enable Google Search, remote MCP servers, URL Context, Code Execution, and File Search in a single API call, with the agent autonomously deciding which tool to use.

More importantly, developers can completely disable Web access, forcing the agent to conduct research only within specified private data sources. For industries highly sensitive to data compliance—such as finance, law, and healthcare—this switch is a true game-changer, ensuring that the agent doesn't inadvertently leak internal information into public search queries. It's a critical, yet long-overlooked, detail in enterprise AI adoption.
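Because the compliance guarantee depends on never enabling web search by accident, it can help to enforce the rule in code rather than by convention. The sketch below is a guard over a plain-dict tool list. The tool names mirror the ones used in this article, the dict shape is illustrative rather than the SDK's actual schema, and treating `google_search` as the only public-web tool is an assumption.

```python
# Assumption: google_search is the only tool that sends queries to the open web.
PUBLIC_WEB_TOOLS = {"google_search"}


def build_tools(enabled: list[str], private_only: bool) -> list[dict]:
    """Return a plain-dict tool list, refusing web tools in private-only mode."""
    leaked = PUBLIC_WEB_TOOLS.intersection(enabled)
    if private_only and leaked:
        raise ValueError(f"private-only mode forbids: {sorted(leaked)}")
    return [{name: {}} for name in enabled]


tools = build_tools(["mcp_servers", "file_search"], private_only=True)
```

Failing loudly at configuration time is cheaper than discovering after the fact that an internal query reached a public search engine.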

2.4 Fourth Breakthrough: Performance Leap – Comprehensive Gains Across Three Benchmarks

Official benchmark comparisons released by Google show significant performance improvements for Deep Research Max over the previous Deep Research agent, released in December 2025:

| Benchmark | Previous Version | Deep Research Max (2026/04) | Improvement |
|---|---|---|---|
| DeepSearchQA | 66.1% | 93.3% | +27.2 percentage points |
| Humanity's Last Exam | 46.4% | 54.6% | +8.2 percentage points |
| ARC-AGI-2 (Base Model) | 31.1% (Gemini 3 Pro) | 77.1% (Gemini 3.1 Pro) | > 2× Improvement |

The DeepSearchQA benchmark evaluates autonomous web retrieval and multi-step reasoning. At 93.3%, the remaining error rate is 6.7%, so headroom on this benchmark is limited. For the core task of "autonomously researching materials and writing accurate answers," Deep Research Max sets a high bar for competing products.
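The improvement figures mix two kinds of comparison: absolute gains in percentage points and relative gains as a multiple. The short calculation below reproduces both from the scores quoted in this section, which makes it easier to see that "more than 2×" applies only to the ARC-AGI-2 row.

```python
scores = {
    # benchmark: (previous score %, new score %)
    "DeepSearchQA": (66.1, 93.3),
    "Humanity's Last Exam": (46.4, 54.6),
    "ARC-AGI-2 (base model)": (31.1, 77.1),
}

gains = {}
for name, (before, after) in scores.items():
    gain_pp = round(after - before, 1)  # absolute gain in percentage points
    ratio = round(after / before, 2)    # relative gain as a multiple
    gains[name] = (gain_pp, ratio)
    print(f"{name}: +{gain_pp} pp, {ratio}x")
```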

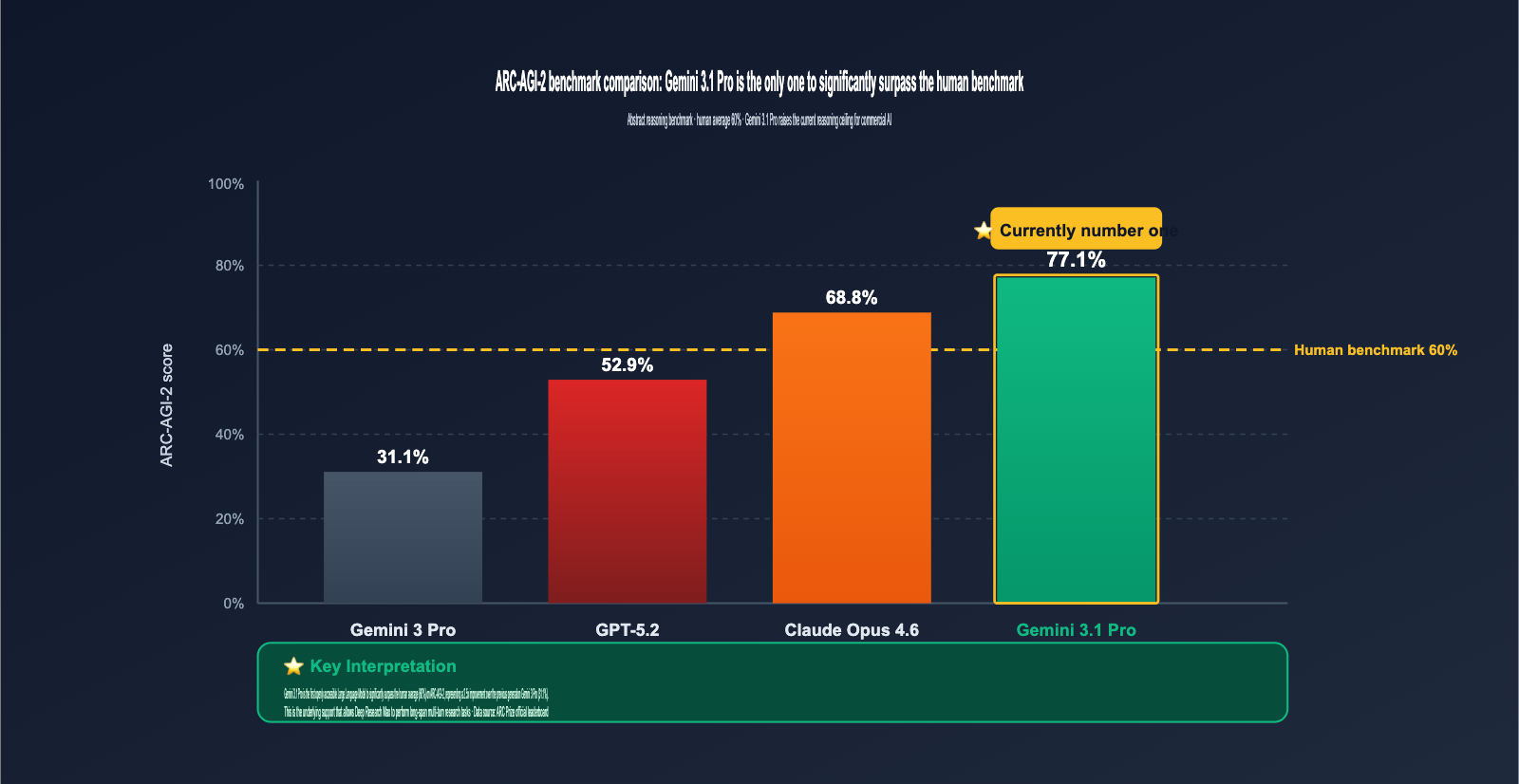

3. The Real Significance of the 77.1% ARC-AGI-2 Score

Many developers see the "77.1%" figure and might instinctively think "that's not bad," but you have to understand the difficulty of the ARC-AGI-2 benchmark to truly appreciate the weight of this score.

3.1 What is ARC-AGI-2?

Maintained by the ARC Prize organization, ARC-AGI-2 is specifically designed to test an AI's abstract reasoning capabilities on entirely new logical patterns that have never been seen in training data. It provides a few examples (input → output pairs) for the model to infer implicit rules, which it must then apply to solve unseen inputs. The human benchmark is 60%, so 77.1% is already well above the human average.

The core challenge of this benchmark is that models cannot "game" the score through memorization. Every pattern is newly generated and has no relation to the training corpus. This is precisely why ARC-AGI-2 is considered one of the gold standards in the industry for measuring "true abstract reasoning ability."
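The task format is easier to grasp with a toy version. The sketch below mimics the "infer the rule from a few input → output pairs, then apply it" loop using small integer grids and a fixed set of three candidate transformations. The candidate set is hypothetical and far simpler than anything in the real benchmark, where the space of possible rules is open-ended.

```python
Grid = list[list[int]]


def flip_horizontal(g: Grid) -> Grid:
    return [row[::-1] for row in g]


def flip_vertical(g: Grid) -> Grid:
    return g[::-1]


def transpose(g: Grid) -> Grid:
    return [list(col) for col in zip(*g)]


# Hypothetical, deliberately tiny hypothesis space.
CANDIDATES = {
    "flip_horizontal": flip_horizontal,
    "flip_vertical": flip_vertical,
    "transpose": transpose,
}


def infer_rule(examples: list[tuple[Grid, Grid]]) -> str:
    """Return the first candidate rule consistent with every example pair."""
    for name, fn in CANDIDATES.items():
        if all(fn(inp) == out for inp, out in examples):
            return name
    raise LookupError("no candidate rule explains the examples")


train = [
    ([[1, 0], [2, 0]], [[0, 1], [0, 2]]),
    ([[3, 4, 5]], [[5, 4, 3]]),
]
rule = infer_rule(train)
prediction = CANDIDATES[rule]([[7, 8], [9, 0]])
```

A solver like this only works because the rule is already in its candidate list; the benchmark is hard precisely because no such list is given.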

3.2 Benchmarking: Gemini 3.1 Pro is Currently the Strongest

| Model | ARC-AGI-2 Score | vs Human Benchmark (60%) | Notes |
|---|---|---|---|
| Gemini 3.1 Pro | 77.1% | +17.1 pp | Largest margin over the human benchmark |
| Claude Opus 4.6 | 68.8% | +8.8 pp | Anthropic Flagship |
| Human Benchmark | 60.0% | – | Average level |
| GPT-5.2 | 52.9% | -7.1 pp | OpenAI |
| Gemini 3 Pro | 31.1% | -28.9 pp | Previous Generation |

As you can see, Gemini 3.1 Pro leads the commercial Large Language Models listed here and exceeds the human benchmark by the widest margin: 17.1 percentage points, compared with 8.8 for Claude Opus 4.6. Commercial models are now pulling ahead of the human average on a rigorous "novel logical reasoning" benchmark. Deep Research Max directly inherits this reasoning capability, which underpins its ability to execute long-horizon, multi-turn iterative research tasks.

🎯 Capability Suggestion: If your product is geared toward high-intensity reasoning scenarios like research, consulting, investment analysis, or legal analysis, the combination of Gemini 3.1 Pro and Deep Research Max should be immediately included in your technical assessment. You can quickly integrate and test it via the APIYI (apiyi.com) platform, which already supports OpenAI-compatible invocations for multiple flagship models, including Gemini 3.1 Pro.

4. Deep Research Max API Quick Start

Now that we've covered the theory, here is the most concise code to get you up and running. Deep Research Max uses the standard Gemini API and is currently available in the paid tier preview.

4.1 Basic Invocation: Running a Web Research Agent

    from google import genai
    from google.genai import types

    # Connect via the APIYI unified proxy to avoid cross-border network issues
    client = genai.Client(
        api_key="your-apiyi-key",
        http_options={"base_url": "https://vip.apiyi.com"},
    )

    response = client.models.generate_content(
        model="deep-research-max-preview-04-2026",
        contents="Analyze the global embedding model market landscape for the first half of 2026, listing the Top 5 vendors and their differentiated advantages",
        config=types.GenerateContentConfig(
            tools=[types.Tool(google_search={})],  # Enable Google Search
            thinking_config=types.ThinkingConfig(thinking_level="max"),  # Max-level thinking budget
        ),
    )

    # Outputs the complete research report (including native HTML tables / SVG charts)
    print(response.text)

This code does three things: selects the Deep Research Max model, enables the Google Search tool, and sets the thinking level to the maximum. The Agent will autonomously plan its search path, iterate through multiple rounds of analysis, and finally produce a complete report with citations and visualizations.

4.2 Advanced Invocation: Connecting to an MCP Server for Private Data Research

If you want to use Deep Research Max to run research on internal company data (e.g., CRM, internal wikis), you'll need to encapsulate your data sources into an MCP server and declare them during the model invocation:

    # Reuses the `client` and `types` import from section 4.1
    response = client.models.generate_content(
        model="deep-research-max-preview-04-2026",
        contents="Analyze the customer types with the highest churn rate in the company's Q1 sales pipeline",
        config=types.GenerateContentConfig(
            tools=[
                # Private data exposed through your own MCP server
                types.Tool(mcp_servers=[
                    {"url": "https://your-internal-mcp.company.com", "auth": "..."}
                ]),
                # Documents indexed in a File Search corpus
                types.Tool(file_search={"corpora": ["sales-docs-corpus"]}),
            ],
            thinking_config=types.ThinkingConfig(thinking_level="max"),
        ),
    )

Note that google_search is not enabled here, meaning the Agent performs research entirely within your private data scope and will not send any external queries to Google. This is a critical capability for enterprise compliance scenarios.

4.3 Switching Between Standard and Max Versions

If your scenario involves real-time, consumer-facing chat where speed is more important than depth, simply change the model name to deep-research-preview-04-2026. The interfaces are fully compatible; the only differences are the internal compute budget and the number of iteration rounds.
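Since the two versions differ only in the model name, the choice can be isolated in one place. The sketch below is one way to do that. The two identifiers are the ones quoted in this article and should be verified against the current model list, and `ResearchRequest` is a hypothetical helper type, not part of any SDK.

```python
from dataclasses import dataclass

# Model identifiers as quoted in this article; verify before relying on them.
STANDARD_MODEL = "deep-research-preview-04-2026"
MAX_MODEL = "deep-research-max-preview-04-2026"


@dataclass(frozen=True)
class ResearchRequest:
    prompt: str
    realtime: bool  # True when a user is actively waiting on the answer

    @property
    def model(self) -> str:
        """Pick the low-latency model for live chats, Max otherwise."""
        return STANDARD_MODEL if self.realtime else MAX_MODEL


chat = ResearchRequest("Summarize today's GPU pricing news", realtime=True)
batch = ResearchRequest("Full due-diligence report on a target company", realtime=False)
```

Passing `request.model` into the invocation keeps the rest of the calling code identical for both versions.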

💡 Quick Trial Tip: When connecting for the first time, we recommend using the standard Deep Research version to run a few demos and get familiar with the Agent's output style before upgrading to Max for actual business tasks. We suggest connecting directly via the APIYI (apiyi.com) platform, which supports OpenAI-compatible calls for multiple models, including Gemini 3.1 Pro, Deep Research, and Deep Research Max, making it easy to switch and compare.

5. Impact Analysis of Deep Research Max: Which Workflows Will Be Reshaped

The release of a new tool is just the beginning; the real value lies in which existing workflows it changes. Based on release materials and early community feedback, the following four areas will be most heavily impacted.

5.1 Investment Research and Industry Analysis

This is the scenario Google explicitly highlighted at the launch. Financial data providers FactSet, S&P Global, and PitchBook have teamed up to create MCP servers, aiming to allow buy-side analysts to call financial reports, industry research, and M&A databases simultaneously using natural language instructions, automatically generating visualized research reports. A draft that used to take two days to write might now be generated in 30 minutes. This isn't about replacing analysts; it's about liberating them from mechanical data retrieval.

5.2 Corporate Due Diligence and Compliance Reviews

The biggest pain point for legal and compliance teams during due diligence is the need to "check both public information and internal archives." The "private data only" mode of Deep Research Max lets lawyers feed client data to the Agent for analysis without that data being sent to public search engines as part of a query. Combined with native visualization output, the final due diligence report can be embedded directly into Notion or Confluence.

5.3 Academic Reviews and Literature Research

The most time-consuming part of writing a review paper for scholars is quickly digesting 200+ documents into a coherent argument framework. Deep Research Max's multi-round deep reasoning can read dozens of PDFs in a single call and generate a structured outline. Combined with the 1M token context window, a single call can digest the core literature of an entire research direction.

5.4 AI Assistant Upgrades in SaaS Products

Many SaaS products are already stuffed with AI Copilots, but most current implementations are just "GPT-4 + RAG wrappers." The standard Deep Research version (with its lower latency) provides an upgrade path for these products: replacing the Copilot with a true autonomous Agent capable of answering questions by synthesizing web data, product-internal data, and user-private data, rather than just flipping through keywords in documents.

6. Comparing Deep Research Max with Similar Products

Let's place Deep Research Max within the industry landscape. The table below compares it with the main "research/deep reasoning" alternatives across four capability dimensions.

| Product | Vendor | Autonomous Research | MCP Support | Native Visualization | Private Data | Overall Rating |
|---|---|---|---|---|---|---|
| Deep Research Max | Google | ✅ Multi-turn deep | ✅ First-class citizen | ✅ Native HTML/SVG | ✅ Web off mode | ⭐⭐⭐⭐⭐ |
| OpenAI Deep Research | OpenAI | ✅ Multi-turn | Partial | Partial | Partial | ⭐⭐⭐⭐ |
| Anthropic Claude Research | Anthropic | ✅ | ✅ Native MCP | ❌ Text-focused | ✅ | ⭐⭐⭐⭐ |
| Perplexity Deep Research | Perplexity | ✅ Web-focused | ❌ | Partial | ❌ | ⭐⭐⭐ |
| Custom RAG + Agent | Various | Depends on impl. | Depends on impl. | Custom dev req. | ✅ | ⭐⭐ |

As you can see, Deep Research Max achieves the most complete implementation across four core dimensions: multi-turn deep reasoning + first-class MCP support + native visualization + cross-source private data integration. It is currently the most mature engineering-grade research Agent solution among commercial products.

📌 Selection Advice: If your application requires deep reasoning, private data compliance, and visual output, Deep Research Max is the current optimal choice. If you only need a lightweight web search assistant, Perplexity or the standard Deep Research version will suffice. You can use APIYI (apiyi.com) to access and compare these models in one place, avoiding the hassle of configuring authentication and interfaces for multiple providers.

7. Deep Research Max FAQ

Q1: What is the difference between Deep Research Max and the standard Gemini 3.1 Pro?

Gemini 3.1 Pro is the underlying base model that provides reasoning capabilities. Deep Research Max is an autonomous research Agent built on top of 3.1 Pro, encapsulating Agent capabilities like multi-tool invocation, multi-turn iteration, and native visualization. Simply put, 3.1 Pro is the "brain," while Deep Research Max is the "researcher equipped with hands, feet, and tools."

Q2: How can developers in China access Deep Research Max?

Deep Research Max is a paid-tier feature of the Gemini API. Accessing it directly from China requires overcoming cross-border network and payment hurdles. The easiest path is through a unified API proxy service like APIYI (apiyi.com), which allows you to pay in RMB, provides an interface fully compatible with the official one, and supports one-stop access to multiple models, including the Gemini 3.1 Pro series.

Q3: How much more expensive is Deep Research Max than the standard version?

Google hasn't disclosed the exact multiplier. Given the descriptions of "extended test-time compute and multi-turn deep iteration," the cost per call for Max will be significantly higher than for the standard version; a rough estimate is 3-10x, which is not an official figure. I recommend using the standard version for lower-value tasks and switching to Max when top-tier depth is required.
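To see what a mixed strategy might cost, you can blend the two tiers. The sketch below computes the average cost per call relative to an all-standard baseline of 1.0, given the share of calls routed to Max. The 3x and 10x multipliers are this article's unofficial estimate, and the 20% share is an arbitrary example.

```python
def blended_cost(max_share: float, max_multiplier: float) -> float:
    """Average cost per call relative to an all-standard baseline of 1.0."""
    if not 0.0 <= max_share <= 1.0:
        raise ValueError("max_share must be between 0 and 1")
    return (1.0 - max_share) + max_share * max_multiplier


low = blended_cost(0.2, 3.0)    # 20% of calls on Max at an assumed 3x
high = blended_cost(0.2, 10.0)  # 20% of calls on Max at an assumed 10x
print(f"{low:.1f}x to {high:.1f}x the all-standard spend")
```

Under these assumptions, sending one call in five to Max raises total spend to roughly 1.4x to 2.8x of the baseline.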

Q4: Can I write my own MCP server to connect to Deep Research Max?

Yes. MCP is an open protocol. Any team can implement their own MCP server according to the specifications, encapsulating data from ERP, CRM, internal knowledge bases, etc., into standard interfaces for the Agent. Google has also explicitly stated that it welcomes community contributions to MCP server implementations.

Q5: Can the output of Deep Research Max be embedded directly into web pages?

Yes. The native output includes HTML tables, SVG charts, and structured layouts, which can be embedded directly into web pages, dashboards, or emails. This is one of the core differentiating advantages of Deep Research Max compared to traditional LLM output.

Q6: Can the Agent still work if Web access is completely disabled?

Yes. The Agent will conduct research solely using the private data sources you specify, such as your MCP servers, File Search corpora, and URL Context. This is the core usage pattern for enterprise compliance scenarios—ensuring data never leaves the enterprise boundary.

Q7: What is the context window of Deep Research Max?

Inherited from Gemini 3.1 Pro, it supports an input context of 1,048,576 tokens (approx. 1M) and a maximum output of 65,536 tokens (approx. 65K). This means a single call can digest dozens of long research papers or an entire product documentation library.
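Before sending a large batch of documents, a quick budget check can tell you whether they are likely to fit. The sketch below uses the input limit quoted above together with a rough four-characters-per-token heuristic, which is an assumption that holds only loosely for English and tends to undercount for Chinese text. For exact numbers, use the API's own token counting.

```python
INPUT_LIMIT = 1_048_576  # input tokens, as stated above
CHARS_PER_TOKEN = 4      # rough heuristic for English text; an assumption


def estimate_tokens(text: str) -> int:
    """Estimate the token count with ceiling division over character length."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], reserve: int = 50_000) -> bool:
    """Check whether documents fit, leaving `reserve` tokens for instructions."""
    total = sum(estimate_tokens(d) for d in documents)
    return total + reserve <= INPUT_LIMIT


paper = "x" * 120_000  # stands in for one paper of about 30K estimated tokens
thirty_fit = fits_in_context([paper] * 30)
thirty_four_fit = fits_in_context([paper] * 34)
```

With these numbers, thirty such papers fit with room to spare, while thirty-four exceed the limit once the reserve is counted.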

Q8: Does the 77.1% score on ARC-AGI-2 mean Gemini 3.1 Pro has the strongest general capabilities?

You can't infer that directly. ARC-AGI-2 measures abstract reasoning; the 77.1% indicates that Gemini 3.1 Pro leads in this specific dimension. However, other dimensions like coding, multimodal performance, and Chinese language understanding depend on their respective benchmarks. Overall, Gemini 3.1 Pro is one of the top-tier flagship models currently available.

Q9: Will Deep Research Max replace RAG systems?

Not in the short term; they are more likely to be complementary. RAG still holds irreplaceable cost and latency advantages for "precise retrieval of specific enterprise data." Deep Research Max is better suited for high-value tasks involving "multi-source integration, deep reasoning, and visual output." The best practice is to use RAG for frontline Q&A and upgrade to Deep Research Max when deep research needs arise.

Q10: How does Deep Research Max perform in Chinese-language scenarios?

Gemini 3.1 Pro's multilingual capabilities include Chinese, and Deep Research Max inherits this foundation. However, keep in mind that the Google Search tool defaults to English-first. For Chinese research tasks, I recommend enabling both the Chinese domain for Google Search and a Chinese-language MCP server to significantly improve information coverage.

8. Summary: Core Takeaways for Implementing Deep Research Max

Looking back at this guide, there are a few key points that developers should keep in mind regarding Google Deep Research Max:

First, Deep Research Max is the most noteworthy autonomous research agent of 2026. With its four core breakthroughs (MCP support, native visualization, cross-source integration, and a major performance leap), it has effectively pushed enterprise-grade research agents into a truly deployable phase.

Second, the two versions serve distinct purposes: the standard version is optimized for speed and low latency, making it well suited to real-time interaction, while the Max version is optimized for depth and comprehensiveness, ideal for asynchronous, deep-dive tasks. Choose based on your specific use case.

Third, the 77.1% score on ARC-AGI-2 isn't just a vanity metric. It signifies that the underlying Gemini 3.1 Pro has clearly surpassed the human average in core abstract reasoning. Combined with Deep Research Max's tool invocation framework, we finally have a commercially viable solution for long-horizon, complex research tasks.

Fourth, the MCP protocol is set to become the de facto standard for the next generation of agents. Google treating it as a first-class citizen is a clear signal. Anthropic is also a major proponent of MCP, and with support already available in tools like Cursor and Claude Desktop, the entire ecosystem is coalescing around it. For developers, investing time in learning and implementing MCP servers is a high-ROI decision.

Fifth, the integration path for developers in mainland China is straightforward. Deep Research and Deep Research Max are available through the Gemini API paid preview tier, and you can complete the entire process, from registration and payment to model invocation, via unified API proxy services like APIYI (apiyi.com). This saves you from having to deal with cross-border networking or international credit card issues.

🎯 Final Recommendation: If you are currently building AI products for research, consulting, analysis, education, or legal sectors, immediately include Deep Research Max in your technical evaluation. It represents the current gold standard of commercial AI agent engineering; those who start early will reap the greatest dividends in product differentiation. You can quickly integrate and test via the APIYI (apiyi.com) platform. Combined with Gemini 3.1 Pro's 1M context window and multimodal capabilities, you can elevate your traditional RAG, intelligent customer service, or content generation workflows into next-generation autonomous agent systems.

The release of Deep Research Max is just the beginning. Google has explicitly stated in its blog that this represents "a step change for autonomous research agents." Whether you can seize this window of tool iteration will directly determine your AI product's competitive position in the second half of 2026.

Author: APIYI Technical Team | Focusing on the practical implementation of Large Language Models. For more technical content, please visit APIYI at apiyi.com.