By the second quarter of 2026, the AI image generation market had settled into an unprecedented "twin star" landscape:

- Nano Banana 2 (Gemini 3.1 Flash Image) was released on February 26th, challenging Pro-level quality with Flash-level speed, capable of generating images in just 1-2 seconds.

- GPT-Image-2 debuted on April 21st, setting a new industry benchmark with an Arena Elo score of 1512 and over 99% text accuracy.

Both models have their own strengths in the two core capabilities of text-to-image and image editing. Many developers and designers are finding themselves torn when choosing between them: "Which one, GPT-Image-2 or Nano Banana 2, is actually better for my business?"

This article breaks down the performance differences between the two models in text-to-image and image editing across 8 dimensions, based on official documentation, LMArena Elo rankings, and real-world business scenarios, to help you find the answer quickly.

GPT-Image-2 vs. Nano Banana 2: Core Capabilities at a Glance

Let's start with a summary table to clarify the key parameter differences between the two models.

| Comparison Dimension | GPT-Image-2 (OpenAI) | Nano Banana 2 (Google) |

|---|---|---|

| Release Date | 2026-04-21 | 2026-02-26 |

| Base Model | GPT-5 + O-Series Reasoning | Gemini 3.1 Flash Image |

| Arena Text-to-Image Elo | 1512 (#1) | ~1080 |

| Arena Single-Image Edit Elo | 1513 (#1) | ~1065 |

| Arena Multi-Image Edit Elo | 1464 (#1) | ~1050 |

| Text Accuracy | 99%+ | ~93% |

| Generation Speed | 3 seconds (Instant) | 1-2 seconds (Official) / 4-6 seconds (Tested) |

| Max Resolution | 2K Native / 4K Beta | 2K Native / 4K Pro |

| Supports Inpainting | ✅ Localized editing | ✅ Localized editing |

| Supports Outpainting | ✅ | ✅ |

| Aspect Ratio Limits | 3:1 / 1:3 | 4:1 / 1:4 / 8:1 |

| Images per Request | Up to 8 | 1 |

| Standard API Unit Price | ~$0.04 (Standard tier) | $0.067 (1K) |

| Batch API Discount | No explicit discount | 50% discount |

🎯 Quick Conclusion: GPT-Image-2 leads across the board in text rendering, localized editing, and structural reasoning, holding the #1 spot on all three Arena leaderboards. Nano Banana 2 shines in generation speed, widescreen formats, and batch production costs, making it ideal for high-frequency iteration and large-scale production. For teams looking to integrate both for testing, we recommend using an API proxy service like APIYI (apiyi.com) to call both models through a single gateway, saving you from maintaining separate OpenAI and Google SDKs.

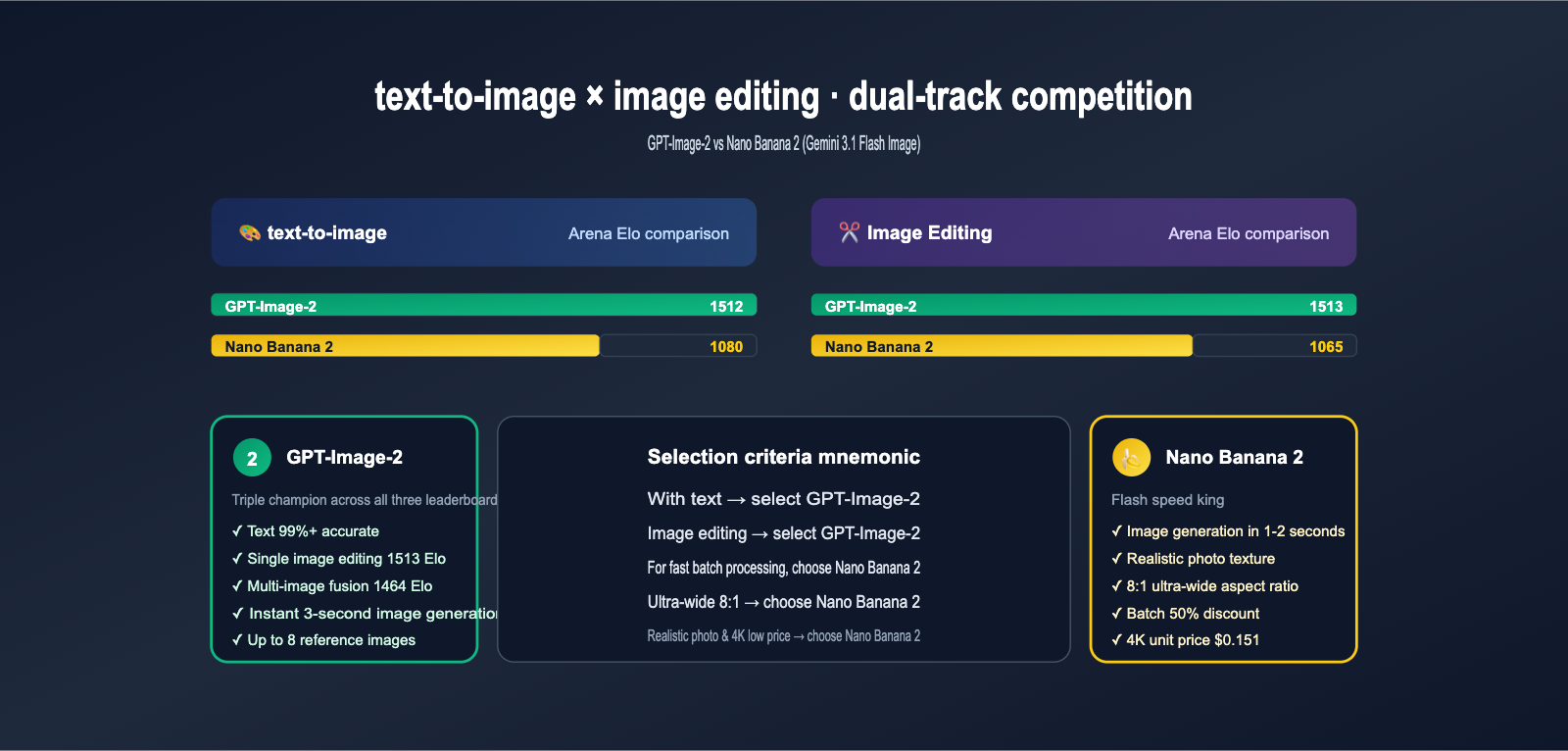

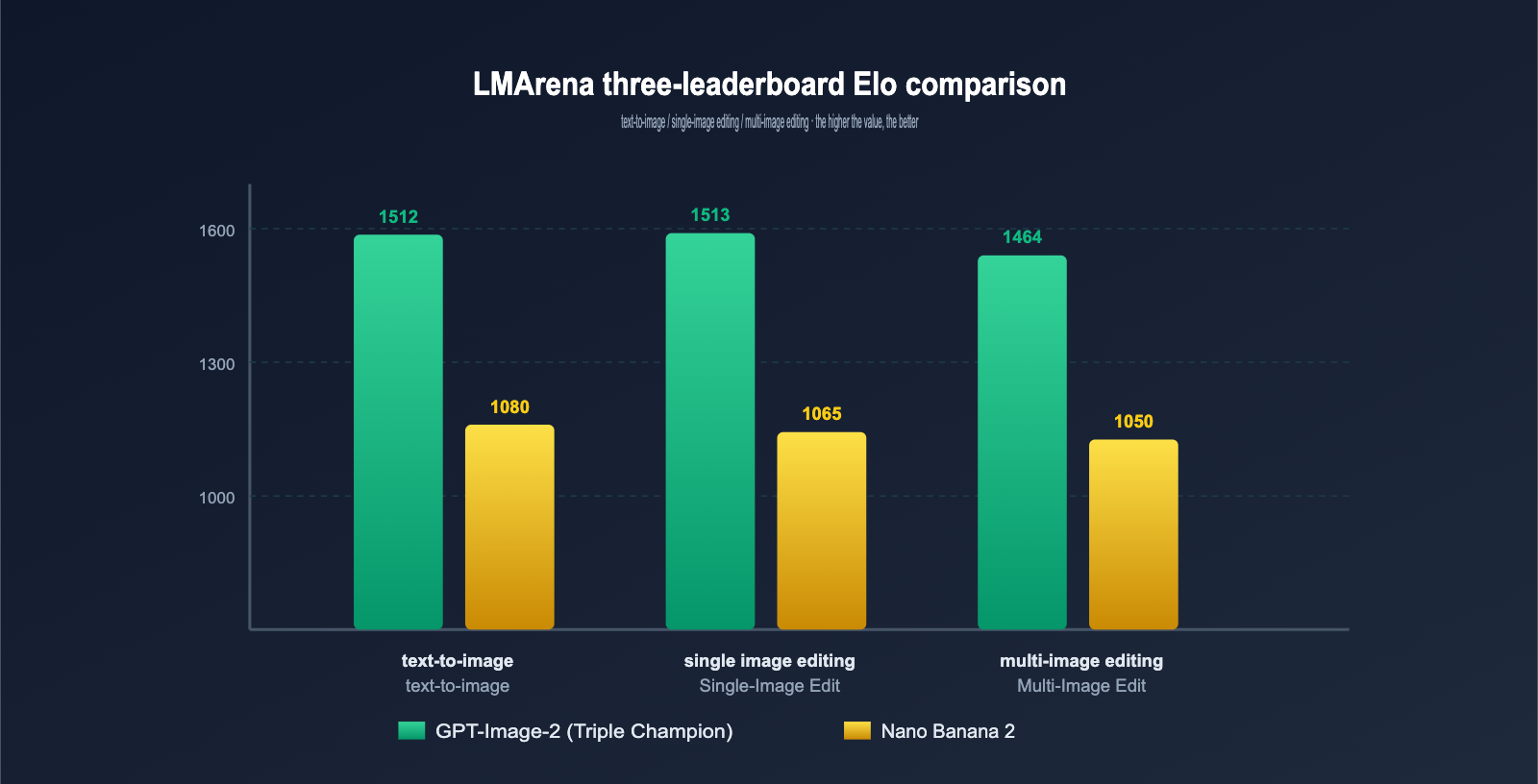

Dimension 1: Arena Text-to-Image Leaderboard—The "1512 Miracle" of GPT-Image-2

LMArena is a widely cited blind-test arena in which users worldwide cast anonymous votes that are aggregated into Elo scores. The two models are far apart on its text-to-image leaderboard.

LMArena Text-to-Image Elo Comparison

| Model | Elo Score | Rank | Gap from #1 |

|---|---|---|---|

| GPT-Image-2 | 1512 | #1 | 0 |

| Nano Banana Pro (Gemini 3 Pro Image) | 1360 | #2 | -152 |

| Nano Banana 2 (Gemini 3.1 Flash Image) | ~1080 | #5+ | -432 |

| Midjourney V8 | ~1250 | #3 | -262 |

| FLUX Pro 1.1 | ~1180 | #4 | -332 |

Key Observations:

- The text-to-image advantage of GPT-Image-2 over Nano Banana 2 (the Flash version) is 432 Elo, which is close to the largest gap in Arena history.

- The Flash version (Nano Banana 2) is positioned for "speed and cost efficiency" rather than competing for flagship image quality.

- If you're purely comparing the ceiling of image quality, GPT-Image-2 wins hands down; however, when it comes to cost-effectiveness, Nano Banana 2 has unique advantages.
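
To put the Elo gaps above into more intuitive terms, the following is a minimal sketch that converts a rating difference into an expected head-to-head preference rate. It assumes the leaderboard scores behave like standard Elo ratings on the usual 400-point logistic scale; the article does not confirm how LMArena computes its scores, so treat the percentages as rough illustrations rather than measured vote shares. The function name `expected_win_rate` is ours.

```python
def expected_win_rate(elo_a: float, elo_b: float) -> float:
    """Expected share of blind votes for model A over model B.

    Uses the standard Elo logistic curve (400-point scale). Whether the
    leaderboard follows exactly this curve is an assumption.
    """
    return 1.0 / (1.0 + 10 ** ((elo_b - elo_a) / 400.0))


# Scores quoted in the leaderboard table above
print(round(expected_win_rate(1512, 1080), 3))  # GPT-Image-2 vs Nano Banana 2   -> 0.923
print(round(expected_win_rate(1512, 1360), 3))  # GPT-Image-2 vs Nano Banana Pro -> 0.706
```

Under that assumption, a 432-point gap means GPT-Image-2 would be preferred in roughly 92% of pairings against Nano Banana 2, while the 152-point gap to Nano Banana Pro corresponds to about 71%.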

Underlying Technical Differences

The root of these models' strengths lies in their different architectural choices:

GPT-Image-2's Autoregressive Path

- Based on the GPT-5 autoregressive architecture, it essentially "paints piece by piece."

- It natively integrates O-Series reasoning, so it first interprets the prompt, then plans the layout, and only then generates the image.

- It has an incredibly strong grasp of semantic structure, which is the technical foundation for its 99%+ text accuracy.

Nano Banana 2's Flash Diffusion Path

- Based on the Gemini 3.1 Flash Image diffusion model.

- It pursues high-speed iteration + photorealistic textures, making it naturally suited for concept exploration.

- It leverages Gemini's world knowledge and web search capabilities to enhance realism.

💡 Technical Advice: If you need structural precision + readable text (posters, infographics, UI), the autoregressive advantage of GPT-Image-2 is a better fit. If you need rapid image output + photorealism (concept drafts, social media, realistic photography), the Flash diffusion of Nano Banana 2 is more appropriate.

Dimension 2: Image Editing Capabilities—GPT-Image-2 Scores Again

Image editing (Inpainting) is a core capability provided by both models, but the gap is equally stark on the LMArena specialized editing leaderboard.

Arena Image Editing Elo Rankings

| Editing Type | GPT-Image-2 | Nano Banana 2 | Gap |

|---|---|---|---|

| Single-Image Edit | 1513 | ~1065 | +448 |

| Multi-Image Edit | 1464 | ~1050 | +414 |

GPT-Image-2 is the triple crown winner in text-to-image, single-image editing, and multi-image editing, a first in the history of AI image models.

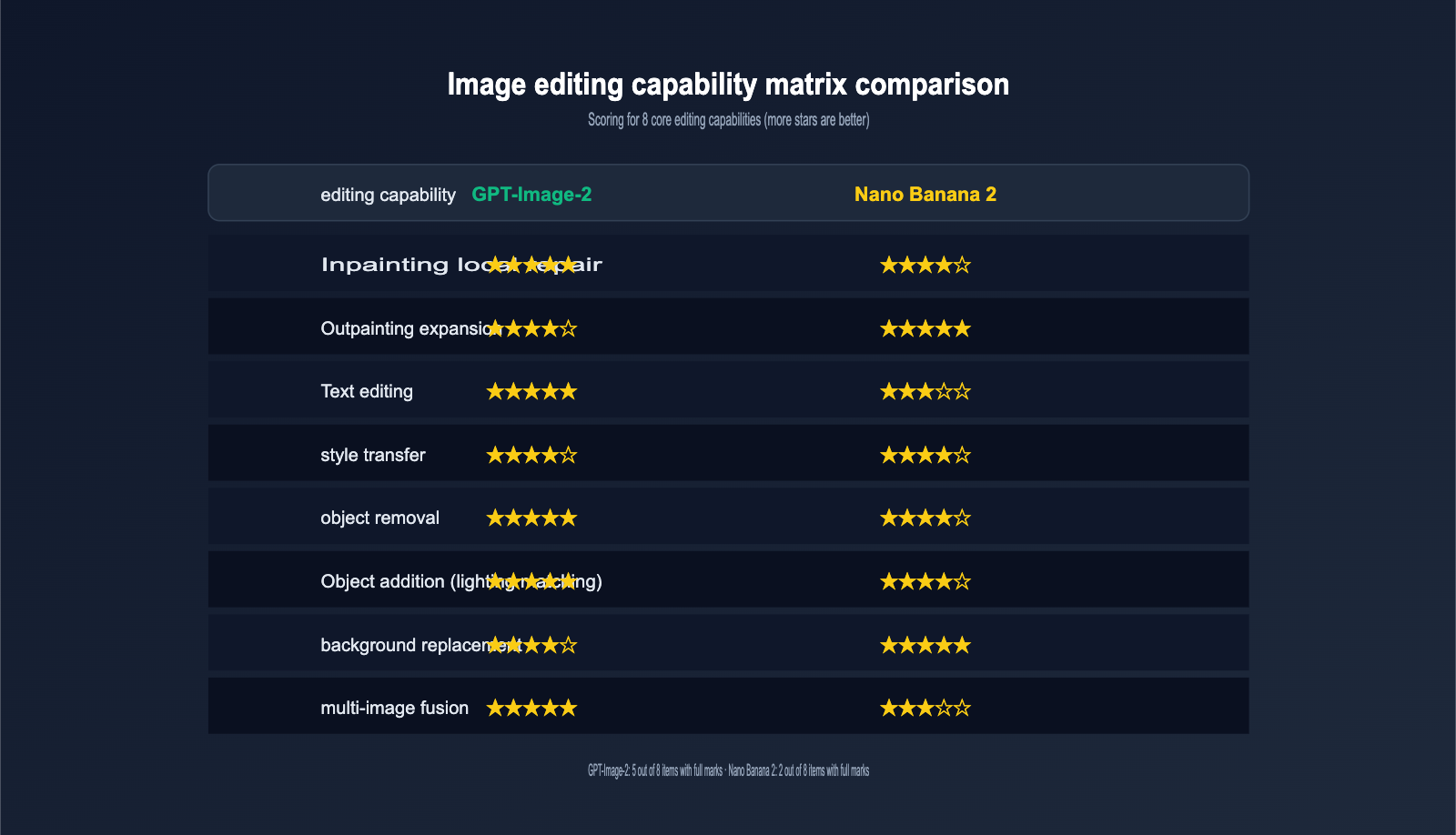

Detailed Editing Capability Comparison

| Editing Capability | GPT-Image-2 | Nano Banana 2 |

|---|---|---|

| Inpainting | ✅ Precise background retention | ✅ Natural blending |

| Outpainting | ✅ Supports 3:1 ultra-wide | ✅ Supports 8:1 extreme wide |

| Text Editing (Correcting text in images) | ✅ 99% accuracy | ✅ ~90% accuracy |

| Style Transfer | ✅ Reference image fusion | ✅ Reference image fusion |

| Object Removal | ✅ Fine-tuned cleanup | ✅ Natural filling |

| Object Addition | ✅ Auto-lighting matching | ✅ Auto-lighting matching |

| Background Replacement | ✅ Precise edges | ✅ Precise edges |

| Multi-Image Composition | ✅ Up to 8 inputs | ✅ Multiple references |

Typical Editing Scenario Tests

Scenario 1: E-commerce Product Image Text Change (Changing "V1.0" to "V2.0" on a box)

- GPT-Image-2: Replaces text precisely; fonts, colors, and reflections are perfectly preserved, and inpainting seams are invisible.

- Nano Banana 2: Can complete the task, but the font occasionally drifts, requiring 2-3 retries.

Scenario 2: Poster Outpainting (Expanding a 9:16 portrait poster to 21:9 landscape)

- GPT-Image-2: Expands up to 3:1 with natural composition.

- Nano Banana 2: Can expand to an extreme 8:1 wide screen, though repeating elements may appear on the far left or right.

Scenario 3: Multi-Image Composition (Combining "Character A" + "Background B" + "Outfit C" into one image)

- GPT-Image-2: With a 1464 Elo in multi-image editing, its fusion quality and detail retention are top-tier in the industry.

- Nano Banana 2: Fusion quality is slightly inferior, but it's 2-3 times faster, making it perfect for quick drafts.

🎯 Scenario Recommendation: Choose GPT-Image-2 for brand e-commerce / high-quality retouching; choose Nano Banana 2 for social content / rapid iteration. In actual production, a common workflow is to "use Nano Banana 2 for quick initial drafts, and GPT-Image-2 for the final high-end polish."

Dimension 3: Generation Speed—Nano Banana 2 is the King of Flash

Speed is the core selling point of Nano Banana 2, and it's the true meaning behind the "Flash" in its name.

Generation Latency by Resolution

| Resolution | GPT-Image-2 (Instant) | Nano Banana 2 | Speed Ratio |

|---|---|---|---|

| 512×512 | 2s | 1-2s | 1.0-1.5x |

| 1024×1024 | 3s | 2-4s | 1.0-1.2x |

| 2K (2048×2048) | 5-8s | 3-5s | 1.3-1.6x |

| 4K (4096×4096) | 10-15s | 5-8s | 1.7-2.0x |

| Inpainting (Single Image Editing) | 4-6s | 2-3s | 1.5-2.0x |

Conclusion: For 2K and 4K high-resolution image generation, Nano Banana 2 is roughly 30-100% faster (speed ratios of 1.3-2.0x in the table above). This matters most for teams that mass-produce large images (e-commerce, content factories, and asset libraries).

Concurrency and Throughput

While Nano Banana 2 can only generate one image per request, its Flash architecture responds so quickly that its batch concurrency capability is actually excellent:

- GPT-Image-2: Up to 8 images per request, with relatively strict concurrency limits.

- Nano Banana 2: 1 image per request, but you can use the Batch API for massive concurrency at 50% of the unit price.

For content farms / SaaS products that need to produce thousands of images daily, the Nano Banana 2 Batch API often delivers 3-5 times the cost-effectiveness.

```python
# Nano Banana 2 batch concurrency example
import asyncio

from openai import AsyncOpenAI

client = AsyncOpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1",  # APIYI unified gateway, supports both models
)


async def gen_one(prompt: str):
    # One request produces one image
    resp = await client.images.generate(
        model="gemini-3.1-flash-image",
        prompt=prompt,
        size="1024x1024",
        n=1,
    )
    return resp.data[0].url


async def batch_run(prompts: list[str]):
    # Start every request at once; gather() returns results in prompt order
    tasks = [gen_one(p) for p in prompts]
    return await asyncio.gather(*tasks)


# Run the prompts concurrently (e.g. 50 of them);
# theoretical wall time is close to single-image latency
prompts = ["...prompt 1...", "...prompt 2..."]  # extend with your own prompts
results = asyncio.run(batch_run(prompts))
```

💡 Concurrency Tip: In high-concurrency scenarios for Flash models, the connection pool reuse capability of the API proxy service directly determines your success rate. For production environments, we recommend using an API gateway with sub-second response times and connection pool reuse to keep the failure rate of long-tail requests below 0.1%.
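
Firing every request at once, as in the example above, can run into rate limits on larger batches. The following is a minimal sketch of one common way to keep long-tail failures down: cap the number of in-flight requests with a semaphore and retry failed calls with exponential backoff. The names `with_retry` and `run_bounded` and all default values are illustrative assumptions, not part of any SDK. `gen_one` stands for any coroutine that generates one image, such as the one defined above.

```python
import asyncio
import random
from typing import Awaitable, Callable, TypeVar

T = TypeVar("T")


async def with_retry(
    call: Callable[[], Awaitable[T]],
    max_attempts: int = 3,
    base_delay: float = 0.5,
) -> T:
    """Await call(); on failure, retry with exponential backoff plus jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be >= 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return await call()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # base_delay, 2x, 4x, ... scaled by a random factor so retries spread out
            delay = base_delay * 2 ** (attempt - 1) * random.uniform(0.5, 1.5)
            await asyncio.sleep(delay)


async def run_bounded(
    prompts: list[str],
    gen_one: Callable[[str], Awaitable[T]],
    max_in_flight: int = 8,
    max_attempts: int = 3,
    base_delay: float = 0.5,
) -> list:
    """Generate one image per prompt with at most max_in_flight requests at a time."""
    sem = asyncio.Semaphore(max_in_flight)

    async def worker(prompt: str):
        async with sem:
            return await with_retry(lambda: gen_one(prompt), max_attempts, base_delay)

    # return_exceptions=True keeps one bad prompt from cancelling the whole batch;
    # failed entries come back as exception objects in the result list
    return await asyncio.gather(*(worker(p) for p in prompts), return_exceptions=True)
```

A reasonable starting point is to set `max_in_flight` a little below your account's concurrency limit and to log any exception objects left in the result list for a later re-run.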

Dimension 4: Text Rendering Capability—The Absolute Edge of GPT-Image-2

Text rendering is the "final exam" for image models, and for years, most models have failed this test. GPT-Image-2 is the first commercial model to break the 99% accuracy threshold.

First-Generation Accuracy by Language

| Language | GPT-Image-2 | Nano Banana 2 | Gap |

|---|---|---|---|

| English | 99.5%+ | 96% | +3.5pp |

| Chinese (Simplified/Traditional) | 98%+ | 90% | +8pp |

| Japanese (Kanji/Kana) | 97%+ | 85% | +12pp |

| Korean (Hangul) | 96%+ | 82% | +14pp |

| Arabic (RTL) | 95%+ | 75% | +20pp |

Key Differences:

- English scenarios: GPT-Image-2 has a slight lead; the difference is negligible for daily use.

- Chinese scenarios: The gap widens to 8pp, which is noticeable for posters and infographics.

- Other non-Latin scripts (Japanese/Korean/Arabic): GPT-Image-2 leads by 12-20pp, a clear advantage.

Selection Guide for Typical Text Scenarios

| Scenario | Recommendation | Reason |

|---|---|---|

| English Marketing Posters | Either | Gap <4pp |

| Chinese Social Media Cards | GPT-Image-2 | Stable character morphology |

| Multilingual Ads | GPT-Image-2 | Consistently high accuracy |

| Japanese Anime Covers | GPT-Image-2 | Stable Kana and Kanji |

| Arabic Ads | GPT-Image-2 | RTL language remains intact |

| Brand LOGO Overlay | GPT-Image-2 | Reproducible fonts |

| Text-free Art | Nano Banana 2 | Faster speed |

🎯 Text-based Selection Tip: If your image output contains any readable text, especially in CJK or RTL scripts, make GPT-Image-2 your default. Nano Banana 2 is faster, but every image with incorrect text has to be regenerated and re-checked, which erodes its speed and price advantage over time.
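
The re-run argument can be made concrete with a little arithmetic. The sketch below estimates the expected API spend per image with correct text, assuming each attempt succeeds independently with the first-generation accuracy from the table above, so the expected number of attempts is 1 / accuracy. That independence assumption and the function name are ours. Prices are the Standard 1K figures quoted in this article.

```python
def cost_per_usable_image(unit_price: float, first_try_accuracy: float) -> float:
    """Expected API spend until one image has correct text.

    Assumes independent attempts, so expected attempts = 1 / accuracy.
    """
    if not 0 < first_try_accuracy <= 1:
        raise ValueError("first_try_accuracy must be in (0, 1]")
    return unit_price / first_try_accuracy


# Arabic (RTL) text at the Standard 1K prices quoted in this article
print(round(cost_per_usable_image(0.04, 0.95), 4))   # GPT-Image-2   -> 0.0421
print(round(cost_per_usable_image(0.067, 0.75), 4))  # Nano Banana 2 -> 0.0893
```

This covers API spend only. The time someone spends spotting and rejecting images with broken text is not modeled, and in practice that is often the larger cost.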

Dimension 5: Realism and Stylistic Expression—The Photographic Feel of Nano Banana 2

While GPT-Image-2 leads the rankings overall, Nano Banana 2’s Flash Diffusion architecture still holds a unique advantage when it comes to authentic photographic textures, cinematic lighting, and skin detail.

Realism Comparison Matrix

| Realism Dimension | GPT-Image-2 | Nano Banana 2 |

|---|---|---|

| Skin Texture | Slightly digital/illustrative | Natural pore detail |

| Lighting Realism | Excellent | Cinematic |

| Depth of Field (Bokeh) | Good | DSLR-like |

| Material Detail (Metal/Fabric) | Fine | Extremely fine |

| Outdoor Natural Light | Standard | Excellent |

| Indoor Lighting | Standard | Cinematic |

| Emotional Expression | Rational | Emotive |

| Artistic Stylization | Diverse | Realism-oriented |

Ideal Realism Use Cases for Nano Banana 2

- 📷 E-commerce Model Photography Replacement: Clothing, footwear, accessories, and beauty products.

- 🏨 Hotel/Real Estate Exterior & Interior Shots

- 🍽️ Food Photography Styles

- 🎬 Movie Posters / Trailer Key Visuals

- 🌅 Travel Landscapes / Nature Photography

- 👥 Lifestyle Portraits (Non-retouched artistic photos)

Ideal Creative Use Cases for GPT-Image-2

- 🎨 Illustration / Artistic Rendering

- 🖥️ UI Prototypes / Mockups

- 📊 Infographics / Data Visualization

- 📝 Posters + Typography

- 🎭 Comic Storyboarding

- 🧩 Precise Multi-object Layouts

Dimension 6: Aspect Ratio and Canvas—Nano Banana 2 Goes to Extremes

For ultra-wide banners, vertical information feeds, and long e-commerce detail images, the flexibility of the aspect ratio directly determines usability.

| Aspect Ratio Needs | GPT-Image-2 Support | Nano Banana 2 Support |

|---|---|---|

| Square 1:1 | ✅ | ✅ |

| Widescreen 16:9 | ✅ | ✅ |

| Vertical 9:16 | ✅ | ✅ |

| Cinematic 21:9 | ✅ | ✅ |

| Ultra-wide 3:1 | ✅ (Limit) | ✅ |

| Extreme-wide 4:1 | ❌ | ✅ |

| Super-wide 8:1 | ❌ | ✅ |

| Vertical Long 1:4 | ❌ | ✅ |

Nano Banana 2’s 4:1 / 8:1 extreme wide-screen support is currently unique in the industry, making it perfect for:

- Ultra-wide website header banners

- Extra-long composite images for product detail pages

- Horizontally unfolding timelines / flowcharts

- Giant posters for film or music festivals

💡 Aspect Ratio Advice: Both models handle standard marketing materials just fine. However, when you need ultra-wide (4:1 or higher) or extra-long (1:4 or higher) formats, Nano Banana 2 is currently your only choice. GPT-Image-2 requires post-generation stitching or outpainting for these requirements, which makes the workflow significantly more complex.
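
As a rough illustration of how this check could be automated before a request is routed, the sketch below compares a requested canvas against the widest and tallest ratios listed in the tables above. It assumes every ratio between those bounds is accepted, which may not hold if an API only allows a fixed list of sizes. The `LIMITS` table and the function name are illustrative, not taken from either vendor's documentation.

```python
from fractions import Fraction

# (widest, tallest) width:height ratios as listed in this article's tables.
# Treating them as continuous bounds is an assumption.
LIMITS = {
    "gpt-image-2": (Fraction(3, 1), Fraction(1, 3)),
    "gemini-3.1-flash-image": (Fraction(8, 1), Fraction(1, 4)),
}


def models_supporting(width: int, height: int) -> list[str]:
    """Return the model IDs whose listed limits cover a width:height canvas."""
    ratio = Fraction(width, height)
    return [
        model
        for model, (widest, tallest) in LIMITS.items()
        if tallest <= ratio <= widest
    ]


print(models_supporting(16, 9))  # ['gpt-image-2', 'gemini-3.1-flash-image']
print(models_supporting(4, 1))   # ['gemini-3.1-flash-image']
print(models_supporting(1, 4))   # ['gemini-3.1-flash-image']
```

An empty list means neither model covers the canvas directly, so the job would need stitching or outpainting.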

Dimension 7: API Pricing and Cost Optimization

The pricing strategies for these two models are completely different. Understanding them can help you cut your API costs by 30-50%.

Official Pricing Comparison (Per Image)

| Tier / Resolution | GPT-Image-2 | Nano Banana 2 | Cheaper Option |

|---|---|---|---|

| Low / 1024×1024 | $0.006 | $0.045 | GPT-Image-2 |

| Standard / 1024×1024 | ~$0.04 | $0.067 | GPT-Image-2 |

| High / 1024×1024 | $0.211 | $0.067 | Nano Banana 2 |

| High / 2K | $0.28 | $0.120 | Nano Banana 2 |

| High / 4K | $0.41 | $0.151 | Nano Banana 2 |

| Batch / 1K | N/A | $0.034 | Nano Banana 2 |

| Batch / 4K | N/A | $0.076 | Nano Banana 2 |

Two Typical Cost Models

Model A: GPT-Image-2 — "Quality-Tiered Pricing"

- Low-quality tier is extremely cheap ($0.006), perfect for bulk drafts.

- High-quality tier is quite expensive ($0.211+), use with caution for single high-end images.

- No Batch discounts available.

Model B: Nano Banana 2 — "Resolution-Tiered + Batch Discount"

- Prices remain stable across tiers between $0.045 and $0.151.

- Batch API offers a 50% discount across all tiers.

- Highly cost-effective for large-scale 4K production.

Monthly Cost Comparison Example (10,000 Images/Month)

| Scenario | GPT-Image-2 Monthly Cost | Nano Banana 2 Monthly Cost | Savings |

|---|---|---|---|

| Low-quality draft (1K) | $60 (Low) | $340 (Batch) | GPT saves 82% |

| Standard output (1K) | $400 | $340 (Batch) | NB2 saves 15% |

| High-quality 1K | $2110 | $340 (Batch) | NB2 saves 84% |

| High-quality 4K | $4100 | $760 (Batch) | NB2 saves 81% |

🎯 Cost Optimization Tip: Choose GPT-Image-2 Low for low-quality drafts, and Nano Banana 2 Batch for high-quality, large-scale production. A hybrid scheduling approach is the optimal solution. Through APIYI (apiyi.com), you can use a single API key to invoke both models and switch based on your business needs, without having to manage separate balances for OpenAI and Google.
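
The monthly figures in the table above follow directly from the per-image prices, and the same arithmetic is easy to rerun for your own volume. This is a small sketch with the prices copied from this article; the dictionary and function names are illustrative, and the GPT-Image-2 Standard price is the approximate value quoted above.

```python
# USD per image, copied from the pricing table in this article
GPT_IMAGE_2 = {"low_1k": 0.006, "standard_1k": 0.04, "high_1k": 0.211, "high_4k": 0.41}
NB2_BATCH = {"1k": 0.034, "4k": 0.076}


def monthly_cost(unit_price: float, images: int = 10_000) -> float:
    """Total spend for a month at a flat per-image price."""
    return round(unit_price * images, 2)


def savings_pct(cheaper: float, pricier: float) -> int:
    """Percentage saved by picking the cheaper option, rounded to a whole percent."""
    return round((pricier - cheaper) / pricier * 100)


scenarios = [("low_1k", "1k"), ("standard_1k", "1k"), ("high_1k", "1k"), ("high_4k", "4k")]
for gpt_tier, nb2_tier in scenarios:
    gpt = monthly_cost(GPT_IMAGE_2[gpt_tier])
    nb2 = monthly_cost(NB2_BATCH[nb2_tier])
    winner = "GPT-Image-2" if gpt < nb2 else "Nano Banana 2 Batch"
    print(f"{gpt_tier}: ${gpt:.0f} vs ${nb2:.0f}, {winner} saves "
          f"{savings_pct(min(gpt, nb2), max(gpt, nb2))}%")
```

Changing the `images` argument shows how the absolute gap scales with volume; the percentage savings stay the same because both price lists are flat per image.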

Dimension 8: Compliance, Watermarking, and Content Safety

The two providers have very different approaches to content safety, which directly impacts enterprise compliance.

| Compliance Dimension | GPT-Image-2 | Nano Banana 2 |

|---|---|---|

| Visible Watermark | None | None |

| Invisible Watermark | C2PA Metadata | SynthID (Google's watermarking technology) |

| Moderation Strictness | High (prone to 400 errors) | Medium |

| Celebrities/Public Figures | Strictly restricted | Strictly restricted |

| Trademarks/Brand Logos | Relatively strict | Medium |

| Child Content | Strictly restricted | Strictly restricted |

| NSFW / Violence | Strictly prohibited | Strictly prohibited |

| Historical Figures | Relatively lenient | Relatively lenient |

Moderation Trigger Test

Testing with the same set of prompts shows:

- GPT-Image-2: When prompts include combinations like "woman, fashion, swimsuit," the probability of triggering a `moderation_blocked` 400 error is approximately 8%.

- Nano Banana 2: The same prompts have a trigger rate of about 3%, so they are more likely to pass moderation.

This means that for businesses in fashion, beauty, fitness, and medical aesthetics, Nano Banana 2 has a higher approval rate, though you should still maintain careful internal content review.

💡 Compliance Advice: For enterprise-level scenarios, we strongly recommend keeping the official invisible watermarks (C2PA or SynthID). If you find that GPT-Image-2 frequently returns 400 moderation errors, consider switching those specific scenarios to Nano Banana 2, or refer to the prompt rewriting guides in the APIYI (apiyi.com) documentation.
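
The "switch those specific scenarios" advice can be sketched as a simple fallback chain. The example below is deliberately SDK-agnostic: `ModerationBlocked` is a hypothetical exception that your own wrapper would raise when a request comes back as a 400 moderation rejection, and `generate` is any function you supply that takes `(model, prompt)` and returns an image URL. How the gateway actually reports a rejection is not confirmed here.

```python
from typing import Callable, Sequence


class ModerationBlocked(Exception):
    """Hypothetical error standing in for a 400 moderation rejection."""


def generate_with_fallback(
    prompt: str,
    generate: Callable[[str, str], str],
    models: Sequence[str] = ("gpt-image-2", "gemini-3.1-flash-image"),
) -> tuple[str, str]:
    """Try each model in order; return (model_used, result) from the first that accepts."""
    last_error = None
    for model in models:
        try:
            return model, generate(model, prompt)
        except ModerationBlocked as err:
            last_error = err  # remember the rejection and try the next model
    raise ModerationBlocked("all models rejected the prompt") from last_error
```

Only moderation rejections trigger the fallback; other errors (timeouts, authentication failures) propagate unchanged so they are not masked. As noted above, a fallback does not replace your own internal content review.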

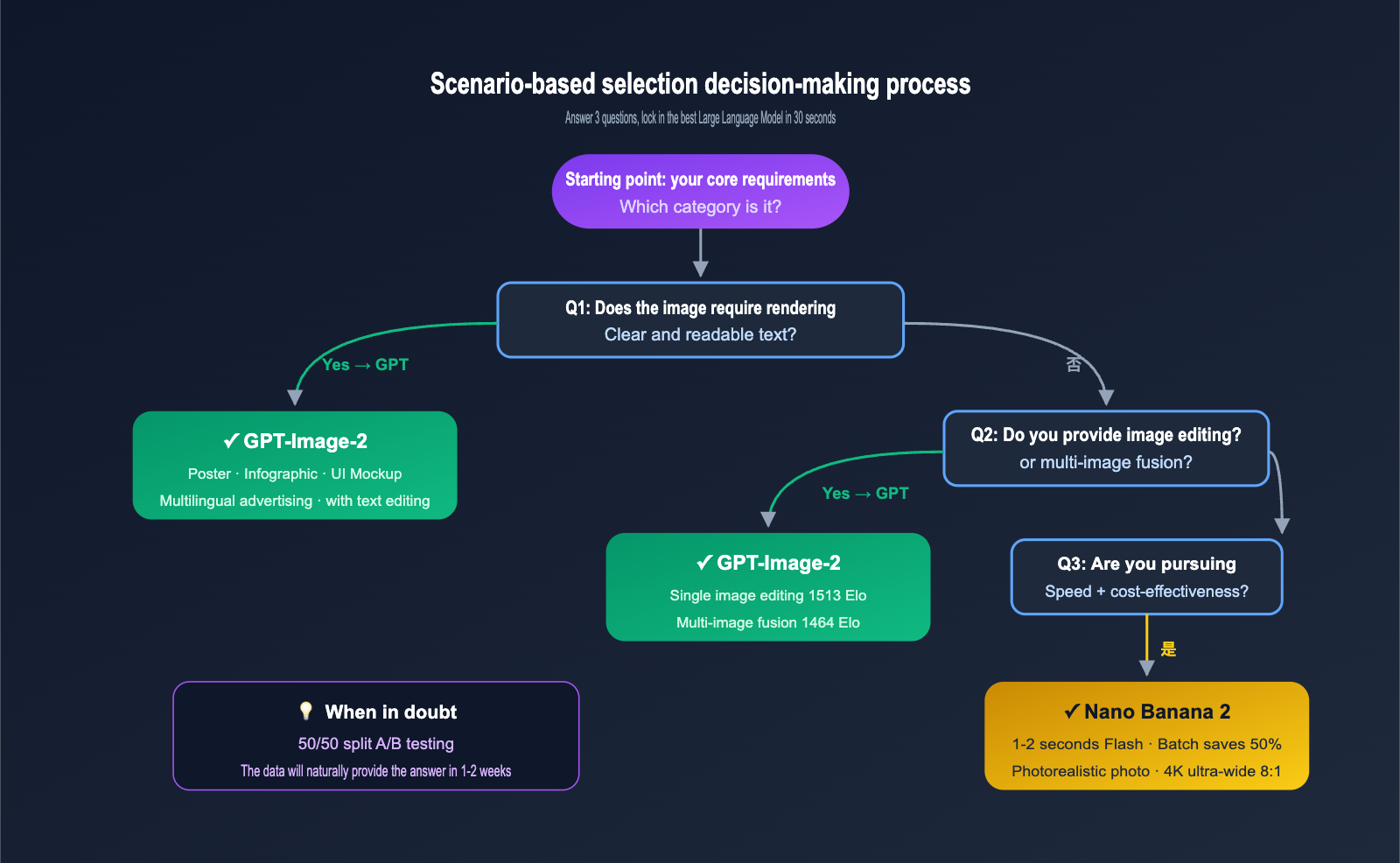

Scenario-Based Selection Decision Matrix

Based on the 8 dimensions mentioned above, here are our model recommendations for common business scenarios.

| Business Scenario | Primary Choice | Alternative | Core Reason |

|---|---|---|---|

| Marketing posters with text | GPT-Image-2 | NB2 Refined | 99% text accuracy |

| E-commerce product copy editing | GPT-Image-2 | – | 1513 Elo for single-image editing |

| E-commerce models / Fashion | Nano Banana 2 | NB Pro | Realism + Speed |

| Daily social media posts | Nano Banana 2 Batch | – | Low cost + Fast |

| Infographics / Data visualization | GPT-Image-2 | – | Reasoning + Text |

| 4K Ultra-wide banners (8:1) | Nano Banana 2 | – | Exclusive aspect ratio support |

| Multi-image composition | GPT-Image-2 | – | 1464 Elo for multi-image editing |

| Real-time AI editor | Nano Banana 2 | GPT Instant | 1-2 second response |

| Brand VI visual systems | GPT-Image-2 | – | Stable LOGO and text |

| Artistic stylization | Varies | – | Determined by A/B testing |

| Large-scale concept exploration | Nano Banana 2 Batch | – | 50% discount |

| High-quality 4K refinement | Nano Banana 2 | – | Lower unit price |

Three Hybrid Routing Strategies

Strategy A: Text + Structure Priority (Brand operations, advertising, B2B SaaS)

- 90% traffic → GPT-Image-2 (text-to-image + editing)

- 10% traffic → Nano Banana 2 (large-scale realism, ultra-wide aspect ratios)

Strategy B: Speed + Cost Priority (consumer-facing AI tools, content factories, creative exploration)

- 80% traffic → Nano Banana 2 Batch (fast batch processing)

- 20% traffic → GPT-Image-2 (final refinement + text inclusion)

Strategy C: Dual-Track A/B Testing (New products, data-driven teams)

- 50/50 traffic split, tracking user click-through rates, download rates, and re-editing rates.

- Decide the primary model based on data; scene preferences usually emerge within 1-2 weeks.
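
The traffic splits above can be implemented with a small weighted router. The sketch below is one possible shape, assuming the model IDs used elsewhere in this article; the `STRATEGIES` table and `pick_model` function are illustrative names. For Strategy B it uses the regular Nano Banana 2 model ID, because how batch mode is selected depends on the gateway.

```python
import random

# Traffic weights per strategy, taken from the splits described above
STRATEGIES = {
    "A": {"gpt-image-2": 0.9, "gemini-3.1-flash-image": 0.1},
    "B": {"gemini-3.1-flash-image": 0.8, "gpt-image-2": 0.2},
    "C": {"gpt-image-2": 0.5, "gemini-3.1-flash-image": 0.5},
}


def pick_model(strategy: str, rng: random.Random) -> str:
    """Pick a model ID for one request according to the strategy's weights."""
    weights = STRATEGIES[strategy]
    return rng.choices(list(weights), weights=list(weights.values()), k=1)[0]


# Usage: one shared generator; seed it only if you need reproducible routing
rng = random.Random()
model = pick_model("A", rng)
```

For Strategy C, it helps to log the chosen model alongside each request ID so that click-through, download, and re-edit rates can later be attributed to the right model.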

🎯 Engineering Tip: All three strategies require switching models under the same SDK. We recommend using an OpenAI-compatible API proxy service (like APIYI, apiyi.com) and pointing the `base_url` to a unified gateway. You can then switch models using the `model` field, eliminating the need to maintain separate API keys for OpenAI and Google AI Studio.

Quick Start: Calling Two Models with the Same Code

Unified Python Calling Template

```python
from openai import OpenAI

client = OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1",  # APIYI unified gateway
)


def generate(model: str, prompt: str, size="1024x1024", quality="high"):
    """Unified text-to-image interface for seamless model switching"""
    resp = client.images.generate(
        model=model,
        prompt=prompt,
        size=size,
        quality=quality,
        n=1,
    )
    return resp.data[0].url


# Compare two models with the same prompt
prompt = "A modern tech startup poster with text 'Launch 2026', minimalist style"

url_gpt = generate("gpt-image-2", prompt)
url_nb2 = generate("gemini-3.1-flash-image", prompt)

print(f"GPT-Image-2: {url_gpt}")
print(f"Nano Banana 2: {url_nb2}")
```
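
The template above reads `resp.data[0].url`. In the OpenAI SDK, each image entry can carry either a `url` or a `b64_json` payload, and which one a given model or gateway fills in is not confirmed by this article. The following is a minimal sketch of a helper that saves an image in either case; `save_image` is an illustrative name, not an SDK function.

```python
import base64
from pathlib import Path
from urllib.request import urlopen


def save_image(item, path: str) -> Path:
    """Write one `resp.data[i]` entry to disk, whether it holds b64_json or url."""
    b64 = getattr(item, "b64_json", None)
    url = getattr(item, "url", None)
    if b64:
        data = base64.b64decode(b64)  # inline payload: decode locally
    elif url:
        with urlopen(url) as response:  # hosted image: download it
            data = response.read()
    else:
        raise ValueError("response item has neither b64_json nor url")
    out = Path(path)
    out.write_bytes(data)
    return out
```

With the template above, `generate()` would need to return `resp.data[0]` rather than only its `.url`, for example `save_image(resp.data[0], "gpt_image_2.png")`.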

Image Editing (Inpainting) Example

```python
import base64
from pathlib import Path

# Reuses the `client` created in the unified calling template above


def load_image_b64(path: str) -> str:
    # Base64-encode an image file; not needed by edit_image() below
    return base64.b64encode(Path(path).read_bytes()).decode()


def edit_image(model: str, image_path: str, mask_path: str, prompt: str):
    """Perform local editing (inpainting) on an existing image"""
    # Context managers close both files even if the request fails
    with open(image_path, "rb") as image, open(mask_path, "rb") as mask:
        resp = client.images.edit(
            model=model,
            image=image,
            mask=mask,
            prompt=prompt,
            size="1024x1024",
            n=1,
        )
    return resp.data[0].url


# Edit copy on the same product image using both models
edit_prompt = "Change the text on the box from 'V1.0' to 'V2.0', keep style"

url_gpt_edit = edit_image("gpt-image-2", "product.png", "mask.png", edit_prompt)
url_nb2_edit = edit_image("gemini-3.1-flash-image", "product.png", "mask.png", edit_prompt)
```

Node.js Version

```javascript
import OpenAI from "openai";

const client = new OpenAI({
  apiKey: process.env.APIYI_KEY,
  baseURL: "https://vip.apiyi.com/v1",
});

async function compareModels(prompt) {
  // Send the same prompt to both models in parallel
  const [gpt, nb2] = await Promise.all([
    client.images.generate({ model: "gpt-image-2", prompt, size: "1024x1024" }),
    client.images.generate({ model: "gemini-3.1-flash-image", prompt, size: "1024x1024" }),
  ]);
  return { gpt: gpt.data[0].url, nb2: nb2.data[0].url };
}

// Top-level await requires an ES module (e.g. a .mjs file)
const result = await compareModels("A cyberpunk city at night, neon signs");
console.log(result);
```

💡 Integration Tip: Both models share the standard OpenAI SDK. Switching models only requires changing the `model` string, with no changes needed to the parameter structure. For teams with A/B testing requirements, this is the shortest path to reducing switching costs to zero.

FAQ

1. Are Nano Banana 2 and Nano Banana Pro the same thing?

No, they aren't. Nano Banana 2 = Gemini 3.1 Flash Image (Flash version, speed-optimized); Nano Banana Pro = Gemini 3 Pro Image (Pro version, quality-optimized). They serve different purposes:

- Need highest quality + 14 reference images: Choose Nano Banana Pro.

- Need fastest speed + lowest batch cost: Choose Nano Banana 2.

- Not sure which to pick? Start by running tests with Nano Banana 2; upgrade to Pro if the quality isn't quite there.

2. Is GPT-Image-2 really superior to Nano Banana 2 in image editing?

GPT-Image-2 holds a significant lead on the LMArena Single-Image Editing (1513 vs 1065) and Multi-Image Editing (1464 vs 1050) leaderboards. However, in terms of actual batch editing speed, Nano Banana 2 is still 50-100% faster. So, if you're chasing ultimate editing quality, go with GPT-Image-2; if you need fast batch editing, choose Nano Banana 2.

3. Why is the text-to-image Elo of Nano Banana 2 only 1080, yet it feels so powerful to use?

Arena Elo is based on blind test relative preference, and general users tend to prefer the structural precision of GPT-Image-2. However, in professional designer workflows, the rapid iteration capability of Nano Banana 2 is often more valuable than "getting it right on the first try." An Elo score isn't the same as "how good it feels to use."

4. How can I reliably call these two APIs from within China?

Official API access can be unstable for users in China. We recommend using the optimized domestic routes provided by APIYI (apiyi.com). It is compatible with the standard OpenAI SDK, covers both gpt-image-2 and gemini-3.1-flash-image, offers sub-second latency, and provides enterprise-grade SLA.

5. Are the Inpainting interfaces for both models consistent?

Yes, both are compatible with the standard OpenAI client.images.edit(image, mask, prompt) interface, and the parameter structure is identical. When calling via an API proxy service, you can run the same code against both models to compare outputs without modifying any request bodies.

6. How do I use the 50% discount for the Nano Banana 2 Batch API?

The Batch API is suited to non-real-time scenarios: requests are queued and processed in batches within 24 hours. To use it, specify batch mode in the endpoint or model name, for example: gemini-3.1-flash-image-batch. When accessing via APIYI (apiyi.com), the batch discount is applied automatically, with no manual application required.

7. What should I do if I encounter a GPT-Image-2 moderation 400 error?

Common causes include prompts involving celebrities, trademarks, violence, or sensitive keywords. Here are three ways to handle it:

- Rewrite the prompt to avoid sensitive keywords.

- Switch the same prompt to Nano Banana 2 for testing (as they have slightly different moderation policies).

- Consult the dedicated documentation on moderation troubleshooting at APIYI (apiyi.com).

8. Will there be a Nano Banana 3 or GPT-Image-3 in the future?

Based on the iteration cycles of Google and OpenAI, both companies are expected to release next-generation models in the second half of 2026. Our advice is: don't wait. Start using these two now and standardize your API integration (using the OpenAI SDK compatible format) so that switching to future models will be as easy as possible.

Summary: The "Dual-Model Division of Labor" Era for Text-to-Image and Image Editing

After a systematic comparison across 8 dimensions, we can draw three clear conclusions:

- GPT-Image-2 is the all-around champion for text-to-image and image editing, ranking first across all three Arena leaderboards. It has established a generational advantage in text rendering, structural reasoning, and multi-image fusion, making it ideal for branding, UI, infographics, and high-end editing.

- Nano Banana 2 is the king of Flash speed and cost-effectiveness, with significant advantages in large-image generation speed, ultra-wide aspect ratios, and batch costs. It is perfect for content factories, social media, real-time editing, and realistic photography.

- A dual-model division of labor is the optimal solution for 2026; no single model can "do it all." Routing tasks based on the specific scenario ensures the lowest cost and highest quality output.

For teams looking to get started quickly with zero migration or learning costs, we recommend using the APIYI (apiyi.com) platform for unified access. With one API key, one set of standard OpenAI SDKs, and one base_url, you can seamlessly switch between gpt-image-2 and gemini-3.1-flash-image based on your business needs, while enjoying stable domestic access and bulk discounts.

🎯 Final Recommendation: If your team hasn't integrated either model yet, register an account at APIYI (apiyi.com). Run 30 comparison tests with the same code (10 text-to-image, 10 single-image edits, 10 multi-image fusions). Let the data speak for itself—you'll have your primary model locked in within 30 minutes.

Author: APIYI Technical Team | apiyi.com

Published: 2026-04-24

Technical Support: Visit APIYI (apiyi.com) for the latest AI model API services. We support unified access to major providers like OpenAI, Google, and Anthropic, covering text-to-image, image editing, video generation, and text chat.