description: A comprehensive guide to Little Red Book's open-source FireRed Image Edit 1.1, featuring 5 core capabilities, benchmark data, and API integration details.

Author's Note: This is a comprehensive breakdown of the open-source FireRed Image Edit 1.1 image editing model from Little Red Book (Xiaohongshu). We'll cover its 5 core capabilities, benchmark data, technical architecture, and how to integrate it via API. On the reported benchmarks, this open-source model now scores ahead of Alibaba's Qwen-Image-Edit.

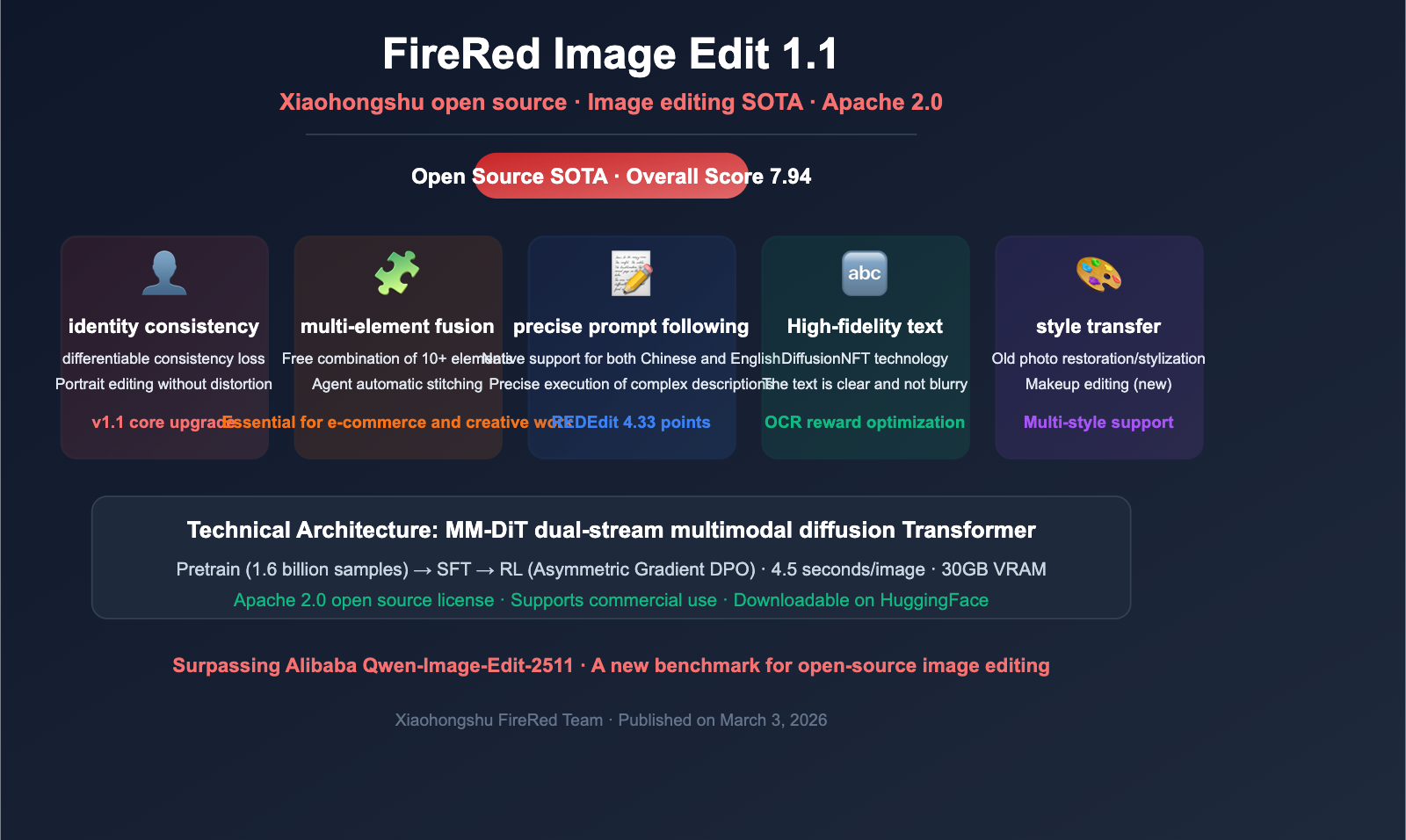

On March 3, 2026, the Little Red Book FireRed team released FireRed-Image-Edit 1.1, a foundational image editing model built on the Diffusion Transformer architecture. The model achieves open-source SOTA results on three major benchmarks: ImgEdit, GEdit, and REDEdit. Its GEdit overall score of 7.94 edges out Alibaba's Qwen-Image-Edit-2511 (7.88), making it the strongest open-source image editing model by these benchmarks at the time of writing.

Core Value: After reading this article, you'll understand the 5 core capabilities of FireRed Image Edit 1.1, its architectural innovations, and the current options for integrating it via API.

FireRed Image Edit 1.1 Key Highlights

| Feature | Description | Advantage |
|---|---|---|
| Open-source SOTA | ImgEdit score 4.56, GEdit score 7.94 | Surpasses Qwen-Image-Edit |
| Face Consistency | Differentiable consistency loss, high-fidelity facial features | Portrait editing without distortion |
| Multi-element Fusion | Supports 10+ element combinations | Intelligent auto-cropping and stitching |
| Bilingual | Evaluated on 1,673 Chinese-English edit pairs (REDEdit-Bench) | Native Chinese instruction support |
| Apache 2.0 | Fully open-source license | Free for commercial use |

What is FireRed Image Edit 1.1?

FireRed-Image-Edit is a foundational image editing model developed by the Little Red Book FireRed team. Unlike common text-to-image models, it specializes in image editing—modifying images precisely according to natural language instructions while maintaining the integrity of the original core content.

You can upload up to 3 reference images and describe your desired effects in natural language (Chinese or English). The model intelligently fuses elements, styles, and faces from the reference images into your output.

Key improvements in version 1.1 compared to 1.0:

- Significantly Optimized Face Consistency: Maintains more accurate facial features when changing backgrounds or transferring styles.

- Enhanced Multi-element Fusion: Handles complex multi-image combination scenarios much better.

- Stylized Text References: Supports a wider variety of fonts and layout styles.

- Portrait Makeup Effects: Added high-precision makeup editing capabilities.

5 Key Capabilities of FireRed Image Edit 1.1

Capability 1: Identity Consistency

This is the most significant upgrade in version 1.1. Through an innovative Differentiable Consistency Loss mechanism, the model can precisely preserve facial features, expressions, and personal characteristics when editing portraits.

Use Cases:

- Changing the background of a photo while keeping the face unchanged

- Applying various artistic styles while retaining identity information

- Compositing characters into different scenes while maintaining consistent appearance

Traditional image editing models often suffer from "facial distortion" during style transfer, making the person look like someone else. FireRed 1.1 addresses this by minimizing identity differences throughout the entire generation process.
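The exact form of FireRed's differentiable consistency loss is not given in the source. A common way to express an identity term, sketched below under that caveat, is the cosine distance between face embeddings of the source portrait and the edited result. The face encoder is assumed, and `identity_loss` is a hypothetical name.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def identity_loss(source_emb: Sequence[float], edited_emb: Sequence[float]) -> float:
    """Hypothetical identity-consistency loss in [0, 2]; 0 means the two faces match.

    Assumption: both embeddings come from a face-recognition encoder. In training
    that encoder would be a differentiable network, so this term could be
    back-propagated into the image generator; plain floats only show the arithmetic.
    """
    return 1.0 - cosine_similarity(source_emb, edited_emb)


# Parallel embeddings (same identity) give a loss near 0;
# orthogonal embeddings (a different-looking face) give 1.0.
print(identity_loss([0.2, 0.9, 0.4], [0.4, 1.8, 0.8]))
print(identity_loss([1.0, 0.0], [0.0, 1.0]))
```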

Capability 2: Multi-Element Fusion

FireRed 1.1 supports the free combination of over 10 visual elements, paired with Agent-driven automatic cropping and stitching features:

| Fusion Type | Description | Typical Scenario |
|---|---|---|
| Character + Background | Place a person in a new scene | Replacing backgrounds for fashion models |
| Character + Clothing | Virtual try-on effects | E-commerce clothing display |
| Multi-character Combo | Combine characters from different images | Creative compositing for posters |
| Style + Content | Apply reference image style to content image | Artistic style transfer |
| Text + Image | Naturally integrate text into images | Social media cover design |
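The source does not explain how the agent crops and stitches elements. One plausible reading, since a request takes at most 3 reference images, is that many element crops get packed into a few composite canvases. The helper below is a purely hypothetical layout sketch under that assumption, not FireRed's agent: it scales every crop to a shared strip height, keeps its aspect ratio, and deals the crops round-robin across at most 3 canvases.

```python
from dataclasses import dataclass


@dataclass
class Canvas:
    height: int
    width: int
    x_offsets: list[int]    # left edge of each placed crop
    element_ids: list[int]  # index of the input crop at each offset


def stitch_layout(crops: list[tuple[int, int]], max_canvases: int = 3,
                  strip_height: int = 512) -> list[Canvas]:
    """Hypothetical packing of (width, height) crops into at most `max_canvases` strips."""
    if not crops:
        return []
    n_canvases = min(max_canvases, len(crops))
    canvases = [Canvas(strip_height, 0, [], []) for _ in range(n_canvases)]
    for idx, (w, h) in enumerate(crops):
        if w <= 0 or h <= 0:
            raise ValueError("crop dimensions must be positive")
        canvas = canvases[idx % n_canvases]        # round-robin keeps canvases balanced
        scaled_w = round(w * strip_height / h)     # preserve aspect ratio at strip height
        canvas.x_offsets.append(canvas.width)      # place crop at the current right edge
        canvas.element_ids.append(idx)
        canvas.width += scaled_w
    return canvases
```

A real pipeline would also have to isolate each element (for example with a detection or segmentation model) and render the pixels; this sketch only computes where each crop would go.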

Capability 3: Instruction Following

The model utilizes Stochastic Instruction Alignment technology, coupled with dynamic prompt re-indexing, to ensure the output stays highly consistent with user instructions.

On the instruction-following dimension of REDEdit-Bench, FireRed 1.1 reports the following scores:

- Chinese instruction score: 4.33

- English instruction score: 4.26

This means the model can handle not only simple commands like "change the background to the beach" but also complex descriptions like "keep the person unchanged, replace the background with a tropical beach at sunset, and add soft, warm-toned lighting effects."

Capability 4: High-Fidelity Text Editing

Through DiffusionNFT technology and layout-aware OCR reward mechanisms, FireRed 1.1 can accurately preserve and edit text content within images. This matters in practice because many image editing models blur or distort text when editing images that contain it.
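The source gives no details of the layout-aware OCR reward. As a rough illustration of the general idea only, a text-fidelity reward can be derived from the normalized edit distance between the text the edit was supposed to contain and the text an OCR engine reads back from the output. The OCR step and the layout-aware region matching are assumed and not shown, and `ocr_text_reward` is a hypothetical name.

```python
def levenshtein(a: str, b: str) -> int:
    """Dynamic-programming edit distance (insertions, deletions, substitutions)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # delete ca
                            curr[j - 1] + 1,      # insert cb
                            prev[j - 1] + cost))  # substitute ca -> cb
        prev = curr
    return prev[-1]


def ocr_text_reward(target_text: str, ocr_text: str) -> float:
    """Hypothetical reward in [0, 1]; 1.0 when OCR reads back exactly the target text."""
    longest = max(len(target_text), len(ocr_text))
    if longest == 0:
        return 1.0
    return 1.0 - levenshtein(target_text, ocr_text) / longest
```

Because the distance operates on Unicode characters, the same function handles Chinese and English text.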

Capability 5: Old Photo Restoration and Style Transfer

FireRed 1.1 excels in old photo restoration and cross-style transfer:

- Old Photo Restoration: Automatically fixes common old photo issues like scratches, color degradation, and blur.

- Style Transfer: Converts photos into various artistic styles such as oil painting, watercolor, and anime.

- Makeup Editing: The 1.1 update adds refined makeup adjustment capabilities.

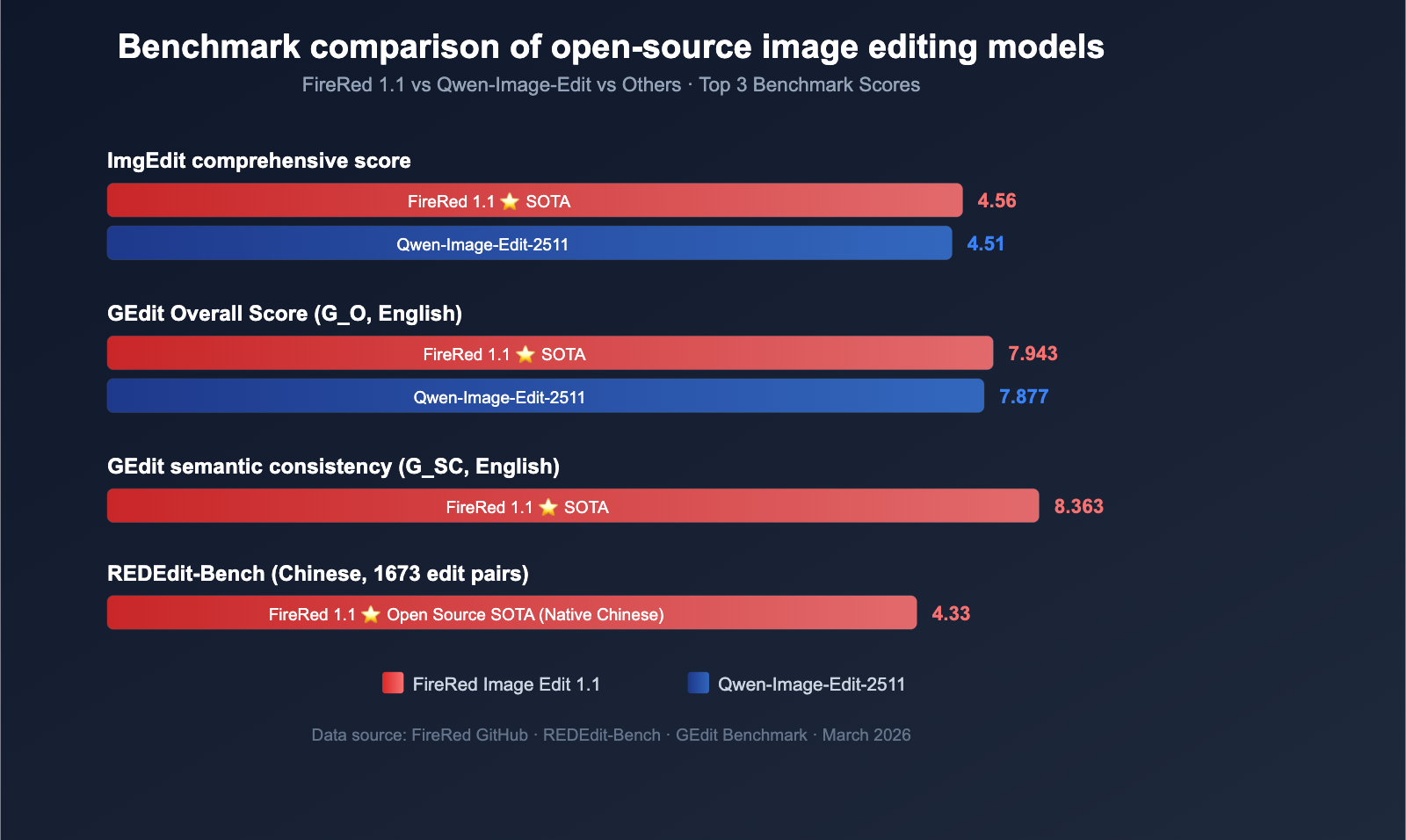

FireRed Image Edit 1.1 Benchmark Results

Leading Across Three Major Benchmarks

| Benchmark | FireRed 1.1 | Qwen-Image-Edit | Comparison |
|---|---|---|---|
| ImgEdit (Overall) | 4.56 | 4.51 | ✅ FireRed wins |
| GEdit (Overall G_O) | 7.94 (EN) / 7.89 (CN) | 7.88 | ✅ FireRed wins |
| REDEdit (Chinese) | 4.33 | Not reported | Open-source SOTA |
| REDEdit (English) | 4.26 | Not reported | Open-source SOTA |
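A quick check on the size of the lead, using only the scores in the table above: FireRed is ahead by about 0.05 points (roughly 1.1%) on ImgEdit and 0.06 points (roughly 0.8%) on GEdit, so both margins are narrow. The snippet does nothing but arithmetic on the reported numbers.

```python
# Scores as reported in the benchmark table (FireRed 1.1 vs Qwen-Image-Edit).
reported = {
    "ImgEdit (Overall)": (4.56, 4.51),
    "GEdit (Overall G_O, EN)": (7.94, 7.88),
}


def margin(fire_red: float, baseline: float) -> tuple[float, float]:
    """Absolute and relative (%) lead of FireRed over the baseline."""
    absolute = fire_red - baseline
    return absolute, 100.0 * absolute / baseline


for name, (fr, qwen) in reported.items():
    abs_gap, rel_gap = margin(fr, qwen)
    print(f"{name}: +{abs_gap:.2f} points ({rel_gap:.2f}% relative)")
```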

GEdit Dimensional Breakdown

| Dimension | English Score | Chinese Score | Meaning |
|---|---|---|---|
| G_SC (Semantic Consistency) | 8.363 | 8.287 | Semantic match between the edit result and the instruction |
| G_PQ (Perceptual Quality) | 8.245 | 8.227 | Visual quality of the generated image |
| G_O (Overall Score) | 7.943 | 7.887 | Overall score combining G_SC and G_PQ |
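G_O (7.943) is lower than both sub-scores and also lower than the geometric mean of the two averages, √(8.363 × 8.245) ≈ 8.30. That pattern is consistent with the overall score being computed per sample as √(SC · PQ) and then averaged, as in the VIEScore protocol that GEdit-Bench's evaluation is assumed here to follow; by the Cauchy-Schwarz inequality, such an average can never exceed √(mean SC · mean PQ). The sketch assumes this definition (verify it against the GEdit-Bench evaluation code before relying on it), and its per-sample scores are made up.

```python
import math


def gedit_style_scores(samples: list[tuple[float, float]]) -> dict[str, float]:
    """Aggregate per-sample (SC, PQ) judge scores.

    Assumption: overall O is sqrt(SC * PQ) per sample, then averaged (VIEScore-style).
    "O_bound" is sqrt(mean SC * mean PQ), which the averaged O can never exceed.
    """
    if not samples:
        raise ValueError("need at least one sample")
    n = len(samples)
    mean_sc = sum(sc for sc, _ in samples) / n
    mean_pq = sum(pq for _, pq in samples) / n
    mean_o = sum(math.sqrt(sc * pq) for sc, pq in samples) / n
    return {"SC": mean_sc, "PQ": mean_pq, "O": mean_o,
            "O_bound": math.sqrt(mean_sc * mean_pq)}


# Illustrative (not real) per-sample scores on a 0-10 scale; the uneven
# third sample pulls O below O_bound.
demo = [(9.0, 8.5), (8.5, 8.0), (2.0, 8.0), (9.5, 9.0)]
print(gedit_style_scores(demo))
```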

REDEdit-Bench is a benchmark developed in-house by the FireRed team. It covers 15 categories and 1,673 Chinese-English bilingual edit pairs, which the team says makes it more aligned with real-world user editing needs than existing benchmarks.

🎯 Performance Tip: FireRed 1.1 shows its strongest advantages in face consistency and instruction following, making it particularly suitable for editing scenarios where character features must be preserved. APIYI (apiyi.com) plans to integrate this model in the future; users with specific needs are welcome to contact us for early access.

FireRed Image Edit 1.1 Technical Architecture

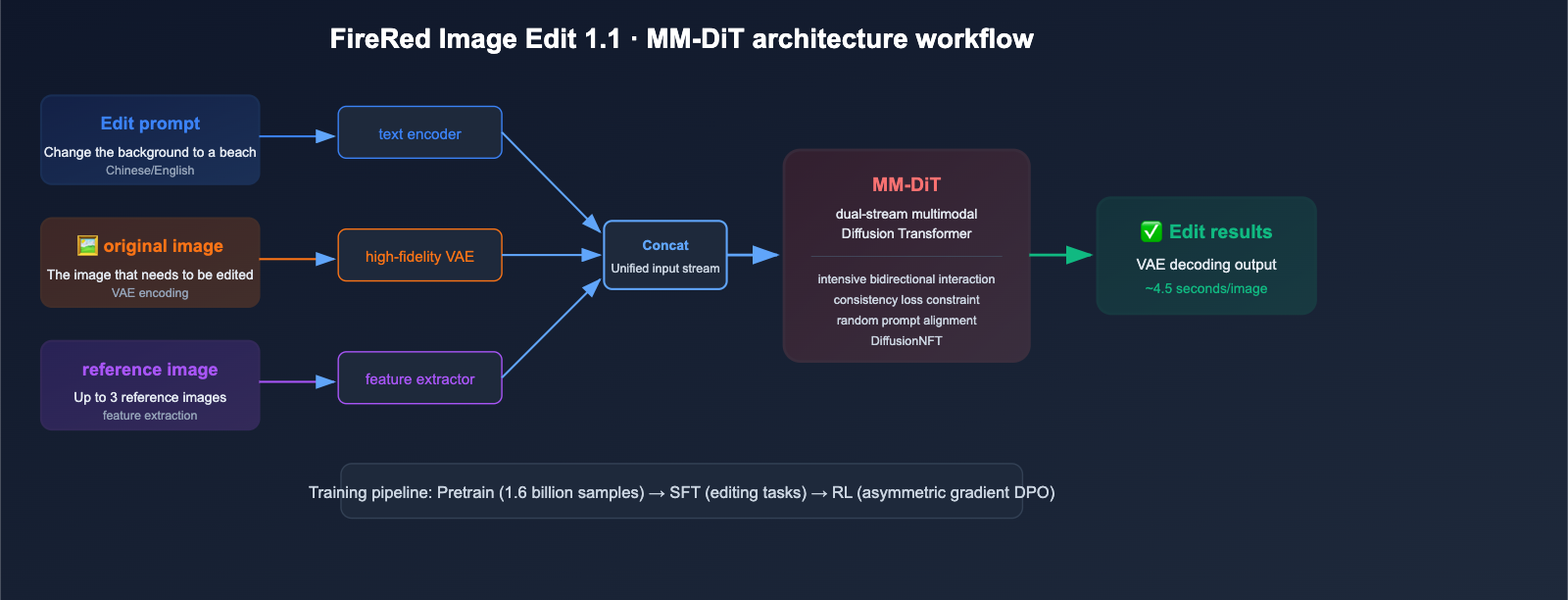

Core Architecture: MM-DiT Double-Stream Multimodal Diffusion Transformer

The core generation engine of FireRed 1.1 is the Double-Stream Multimodal Diffusion Transformer (MM-DiT):

- Text Embedding: User editing instructions are converted into semantic vectors via a text encoder.

- Image Latent Tokens: The original image is encoded into a latent space representation using a high-fidelity VAE.

- Reference Image Features: Visual features of reference images (up to 3) are extracted.

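- Unified Input Stream: The three inputs are concatenated into a single token sequence and fed into the MM-DiT for dense bidirectional interaction: in joint attention, every text and image token can attend to every other. In a double-stream design, text and image tokens keep separate weights inside each block while sharing this attention.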

- Generation Output: The model generates the latent representation of the edited image, which is then decoded back into the final image by the VAE.
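To make the unified input concrete, here is a back-of-the-envelope sketch of the sequence length, which matters because joint attention cost grows with the square of that length. The factors are assumptions, not FireRed specifications: 8x VAE downsampling and 2x2 patches (common in SD3/FLUX-style MM-DiTs), reference images tokenized the same way as the source, and 256 text tokens as a placeholder. Many editing DiTs also append the noisy latent of the image being generated; the source does not say whether FireRed does, so the sketch leaves it out.

```python
VAE_DOWNSAMPLE = 8  # assumed spatial compression of the VAE
PATCH_SIZE = 2      # assumed patchify size inside the DiT


def image_tokens(width: int, height: int) -> int:
    """Latent tokens for one image after VAE encoding and patchifying."""
    step = VAE_DOWNSAMPLE * PATCH_SIZE
    return (width // step) * (height // step)


def unified_sequence_length(text_tokens: int, source_size: tuple[int, int],
                            reference_sizes: list[tuple[int, int]]) -> int:
    """Text tokens + source-image latent tokens + reference-image tokens."""
    if len(reference_sizes) > 3:
        raise ValueError("FireRed 1.1 accepts at most 3 reference images")
    total = text_tokens + image_tokens(*source_size)
    total += sum(image_tokens(w, h) for w, h in reference_sizes)
    return total


# Joint attention compares every token with every other, so cost ~ length ** 2.
for n_refs in range(4):
    length = unified_sequence_length(256, (1024, 1024), [(1024, 1024)] * n_refs)
    print(n_refs, length, length ** 2)
```

Under these assumed factors, a 1024x1024 source with three 1024x1024 references gives a sequence about 3.8 times as long as with no references, which means roughly 15 times the attention cost.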

Training Pipeline: Pretrain → SFT → RL

FireRed 1.1 uses a full three-stage training process:

- Pretraining: Based on a massive corpus of 1.6 billion samples, including over 100 million high-quality samples.

- Supervised Fine-Tuning (SFT): Fine-tuned specifically for editing tasks.

- Reinforcement Learning (RL): Further enhances editing quality using DPO with asymmetric gradient optimization.
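For readers new to preference optimization on diffusion models, the scalar sketch below implements the standard Diffusion-DPO objective from Wallace et al., not FireRed's variant: the source does not say what the asymmetric gradient modification changes, so it is not modeled. The inputs are squared denoising errors ||ε − ε_θ(x_t, t)||² of the model being trained and of a frozen reference model, on a preferred ("winner") and a rejected ("loser") edit; constant timestep weights are folded into `beta`, whose default value is an arbitrary placeholder.

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    if x > 0:
        return x + math.log1p(math.exp(-x))
    return math.log1p(math.exp(x))


def diffusion_dpo_loss(err_w: float, err_ref_w: float,
                       err_l: float, err_ref_l: float, beta: float = 1.0) -> float:
    """Standard Diffusion-DPO loss for one preference pair.

    err_*     : squared denoising error of the model being trained
    err_ref_* : squared denoising error of the frozen reference model
    The loss falls when the model improves on the winner (relative to the
    reference) more than it improves on the loser.
    """
    delta_w = err_w - err_ref_w  # negative: better than the reference on the winner
    delta_l = err_l - err_ref_l  # positive: worse than the reference on the loser
    z = -beta * (delta_w - delta_l)
    return softplus(-z)  # equals -log(sigmoid(z))
```

With identical errors everywhere the loss is log 2; it drops toward 0 as the model favors the winner and rises as it favors the loser.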

Key Technical Innovations

| Technology | Purpose | Effect |
|---|---|---|
| Differentiable Consistency Loss | Identity preservation | Faces stay undistorted in portrait editing |
| Stochastic Instruction Alignment | Instruction understanding | Precise execution of complex descriptions |
| Multi-Condition Aware Bucket Sampling | Training efficiency | Supports variable-resolution batch processing |
| DiffusionNFT | Text editing | Sharp, legible text within images |
| Asymmetric Gradient DPO | Quality optimization | Alignment with human preferences |
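The table names Multi-Condition Aware Bucket Sampling without further detail. The general idea of bucketed sampling is to group training samples whose tensors share a shape, so each batch can be stacked without padding. The sketch below is a generic illustration that assumes the bucket key is (width, height, number of condition images); FireRed's actual bucketing criteria are not described in the source, and `Sample` and `make_batches` are hypothetical names.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    sample_id: str
    width: int
    height: int
    num_conditions: int  # number of condition/reference images attached to the edit


def make_batches(samples: list[Sample], batch_size: int) -> list[list[Sample]]:
    """Group samples by (resolution, condition count), then split each bucket into batches.

    Every batch contains samples with identical tensor shapes; leftovers form a
    smaller final batch within their bucket.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    buckets: dict[tuple[int, int, int], list[Sample]] = defaultdict(list)
    for s in samples:
        buckets[(s.width, s.height, s.num_conditions)].append(s)
    batches = []
    for key in sorted(buckets):
        items = buckets[key]
        for start in range(0, len(items), batch_size):
            batches.append(items[start:start + batch_size])
    return batches
```

Production pipelines typically also snap resolutions to a fixed set of aspect-ratio buckets and shuffle the batches; both are omitted to keep the grouping logic visible.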

💡 Developer Perspective: According to the FireRed team, the editing capabilities of FireRed 1.1 can be transferred to other T2I foundation models, which makes it not just an editing model but a reusable framework for adding editing capabilities.


FireRed Image Edit 1.1 API Integration Guide

Available API Platforms

FireRed Image Edit 1.1 is currently available via several third-party platforms:

| Platform | Estimated Pricing | Features |
|---|---|---|
| Replicate | ~$0.036/call | Pay-per-call, easy to use |
| fal.ai | Usage-based | Serverless deployment, fast response |
| WaveSpeedAI | Usage-based | Focused on AI image model acceleration |
| HuggingFace Spaces | Free trial | Online demo, no coding required |
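Each platform defines its own request schema, so check the model page before integrating. As a hedged sketch of what an edit request generally has to carry (one instruction, a source image, and up to 3 references), the helper below builds a JSON body with the Python standard library. The field names `prompt`, `image`, and `reference_images` are hypothetical placeholders, not the documented schema of Replicate, fal.ai, WaveSpeedAI, or any other provider.

```python
import base64
import json
from typing import Optional

MAX_REFERENCES = 3  # from the model description; a platform may enforce its own limit


def to_data_uri(image_bytes: bytes, mime: str = "image/png") -> str:
    """Encode raw image bytes as a base64 data URI."""
    return f"data:{mime};base64," + base64.b64encode(image_bytes).decode("ascii")


def build_edit_payload(instruction: str, source_image: bytes,
                       references: Optional[list[bytes]] = None) -> str:
    """Build a JSON body for a hypothetical image-edit endpoint.

    The field names are placeholders, not any provider's documented schema.
    """
    references = references or []
    if not instruction.strip():
        raise ValueError("instruction must not be empty")
    if len(references) > MAX_REFERENCES:
        raise ValueError(f"at most {MAX_REFERENCES} reference images are supported")
    payload = {
        "prompt": instruction,  # natural-language instruction, Chinese or English
        "image": to_data_uri(source_image),
        "reference_images": [to_data_uri(r) for r in references],
    }
    # ensure_ascii=False keeps Chinese instructions readable in the JSON body
    return json.dumps(payload, ensure_ascii=False)


# Usage (file name is a placeholder):
# with open("portrait.png", "rb") as f:
#     body = build_edit_payload(
#         "keep the person unchanged, replace the background with a tropical beach at sunset",
#         f.read(),
#     )
```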

Local Deployment Requirements

If you need to deploy FireRed 1.1 locally:

- VRAM Requirements: At least 30 GB (A100 or H100 recommended)

- Inference Speed: Approximately 4.5 seconds per image

- License: Apache 2.0, supports commercial use

- Model Source: HuggingFace (FireRedTeam/FireRed-Image-Edit-1.1)
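The deployment figures above, together with Replicate's ~$0.036 per call from the platform table, allow a quick break-even estimate. At about 4.5 seconds per image, one fully busy GPU produces 800 images per hour, so self-hosting only beats the per-call price if the GPU costs less than roughly 800 × $0.036 = $28.80 per hour. The sketch assumes the reported speed holds on your hardware and ignores storage, engineering time, and idle capacity, all of which lower the real break-even point; the $3/hour rental rate in the example is a hypothetical input.

```python
SECONDS_PER_IMAGE = 4.5     # reported inference time
API_PRICE_PER_CALL = 0.036  # approximate Replicate price (USD)


def local_cost_per_image(gpu_price_per_hour: float, utilization: float = 1.0) -> float:
    """USD per edited image on a self-hosted GPU at the given utilization (0, 1]."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    images_per_hour = 3600 / SECONDS_PER_IMAGE * utilization
    return gpu_price_per_hour / images_per_hour


def break_even_gpu_price(utilization: float = 1.0) -> float:
    """Hourly GPU price at which self-hosting costs the same per image as the API."""
    return API_PRICE_PER_CALL * 3600 / SECONDS_PER_IMAGE * utilization


# Hypothetical $3/hour GPU rental at 50% utilization vs the per-call API price.
print(local_cost_per_image(3.0, utilization=0.5), API_PRICE_PER_CALL)
print(break_even_gpu_price())
```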

APIYI Integration Status

FireRed Image Edit 1.1 is not yet live on the APIYI platform, but it is currently under technical evaluation and integration preparation.

🔔 Integration Notice: APIYI (apiyi.com) is currently evaluating the integration of the FireRed Image Edit 1.1 model. If you have image editing API needs, please contact the APIYI team to learn about the progress or to request early testing access. Once it goes live on the platform, you'll be able to use a unified API interface for model invocation, eliminating the need for self-deployment.

FireRed Image Edit 1.1 Use Cases

E-commerce and Content Creation

- Product Photo Editing: Swapping product backgrounds, adjusting lighting, and adding scenes

- Virtual Try-on: Realistic virtual garment rendering to lower photography costs

- Social Media Covers: Rapid generation of consistent visual styles for covers

- Photo Restoration: Repairing old photos and enhancing overall image quality

Design and Creativity

- Style Transfer: Converting photos into various artistic styles

- Creative Compositing: Combining multiple elements to generate creative posters

- Brand Assets: Batch processing images for a consistent brand visual identity

Positioning Differences Compared to Other Image Models

| Model | Positioning | Key Advantage | Best For |
|---|---|---|---|
| FireRed Image Edit 1.1 | Image editing | Face consistency, instruction following | Precise editing of existing images |
| Imagen 4 (Google) | Text-to-image | High-quality generation | Generating new images from scratch |
| DALL-E 3 | Text-to-image | Text rendering | Creative image generation |
| Stable Diffusion 3 | Text-to-image + editing | Open-source ecosystem | Flexible customization |

FireRed 1.1's core differentiator is that it precisely edits existing images rather than generating new ones from scratch. This gives it a unique edge in scenarios such as e-commerce and content creation, where authentic assets need secondary processing.

🚀 Pro Tip: If your requirement is "precise modifications based on existing images" (swapping backgrounds, changing styles, adding elements, etc.), FireRed is currently the strongest open-source option on the benchmarks above. If you need text-to-image capabilities, you can use models like Imagen or DALL-E via the APIYI (apiyi.com) platform and mix and match them according to your project's needs.

FAQ

Q1: Is FireRed Image Edit 1.1 free for commercial use?

Yes. FireRed Image Edit 1.1 is released under the Apache 2.0 license, which allows free use, modification, and distribution, including for commercial purposes. You can download the model weights from HuggingFace for local deployment or use the model through third-party API platforms on a pay-per-use basis.

Q2: What are the differences between FireRed 1.1 and 1.0, and which one should I use?

We recommend using version 1.1. It builds on 1.0 with significant improvements in face consistency, multi-element fusion, stylized text, and makeup effects, and it reaches a GEdit overall score of 7.94, higher than version 1.0's score.

Q3: What hardware is required for local deployment?

FireRed 1.1 requires at least 30GB of VRAM; we recommend using NVIDIA A100 (40/80GB) or H100 GPUs. If you don't have sufficient GPU resources, we suggest using it via API. On Replicate, a single model invocation costs approximately $0.036. Once it becomes available on the APIYI (apiyi.com) platform, you'll also be able to call it directly via API.

Q4: When will APIYI support FireRed Image Edit?

FireRed Image Edit 1.1 is currently in the technical evaluation phase for the APIYI platform. If you have specific needs for an image editing API, please reach out to the APIYI (apiyi.com) team. Your feedback will help us accelerate the evaluation and integration process.

Summary

Key highlights of FireRed Image Edit 1.1:

- Open-Source SOTA: Achieves a GEdit overall score of 7.94 and an ImgEdit score of 4.56, narrowly outperforming Qwen-Image-Edit-2511 on both.

- Leading Face Consistency: A differentiable consistency loss reduces identity drift (the "face swapping" effect) during portrait editing.

- Native Chinese Support: Developed by the Xiaohongshu team, it delivers excellent performance with both Chinese and English prompts.

- Fully Open-Source & Commercial-Ready: Released under the Apache 2.0 license and available for direct download on HuggingFace.

- Efficient Inference: Runs on GPUs with at least 30 GB of VRAM, at about 4.5 seconds per image.

For developers and enterprises that need precise image editing, FireRed 1.1 is currently the top-scoring open-source option on the benchmarks covered here.

APIYI (apiyi.com) is actively evaluating the integration of FireRed Image Edit 1.1. If you have any requirements, please feel free to contact us for more information. Our platform already supports unified model invocation for Gemini, Claude, GPT, and more; the addition of image editing models will further enhance our multimodal API matrix.

📚 Reference Materials

- FireRed-Image-Edit GitHub Repository: Official open-source code and documentation.
  - Link: github.com/FireRedTeam/FireRed-Image-Edit
  - Note: Includes complete source code, model weight download links, and usage examples.

- FireRed-Image-Edit 1.1 HuggingFace: Model weights download.
  - Link: huggingface.co/FireRedTeam/FireRed-Image-Edit-1.1
  - Note: You can download the model weights directly for local deployment.

- FireRed-Image-Edit 1.0 Technical Report: Academic paper.
  - Link: arxiv.org/abs/2602.13344
  - Note: Provides a detailed breakdown of the architectural design and training methodology.

- REDEdit-Bench Benchmark: Evaluation methodology.
  - Link: github.com/FireRedTeam/FireRed-Image-Edit
  - Note: Includes an evaluation standard consisting of 15 categories and 1,673 bilingual edit pairs.

Author: APIYI Technical Team

Tech Discussion: Feel free to share your AI image editing experiences in the comments. For more AI model news, visit the APIYI documentation center at docs.apiyi.com.