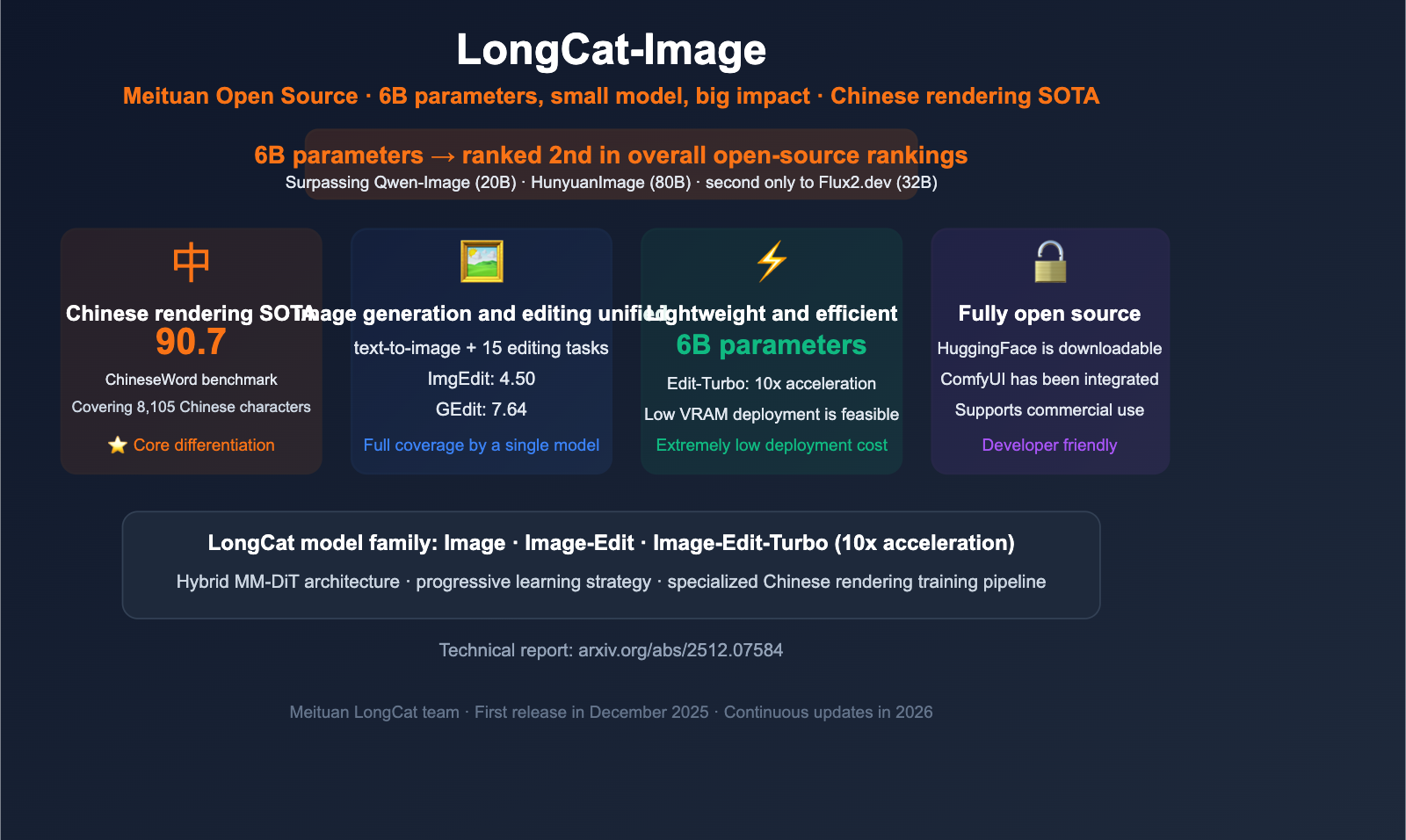

Author's Note: A comprehensive analysis of LongCat-Image, the open-source image generation and editing model from Meituan. With only 6B parameters, it outperforms several 20B-80B models and can render all 8,105 standard Chinese characters. This article also covers benchmark data and API access options.

In the AI image generation world, bigger models usually mean better results. Meituan's LongCat team challenges that convention with LongCat-Image. With only 6B parameters, the model outperforms competitors several times its size, such as Qwen-Image (20B) and HunyuanImage-3.0 (80B), on multiple benchmarks. It currently ranks second overall among open-source models on T2I-CoreBench, trailing only the 32B Flux2.dev.

Key Takeaways: By the end of this article, you’ll understand the four core advantages of LongCat-Image, its technical architecture, and its unique value in Chinese-language scenarios.

LongCat-Image Core Highlights

| Feature | Description | Advantage |
|---|---|---|
| Punching Above Its Weight | 6B parameters outperform 20B-80B models | Extremely low deployment costs |
| SOTA Chinese Rendering | ChineseWord score of 90.7, covering 8,105 characters | Top choice for Chinese scenarios |
| Unified Generation + Editing | Single model supports T2I and 15 editing tasks | No need to switch between models |
| Fully Open Source | Available on HuggingFace, ComfyUI support | Flexible deployment |

What is LongCat-Image?

LongCat-Image is an open-source bilingual (Chinese-English) image foundation model developed by Meituan's LongCat team. It is built on the Diffusion Transformer architecture. It pairs a hybrid MM-DiT (Multi-Modal Diffusion Transformer) backbone with a unified multimodal context encoder, aiming to balance generation quality against inference efficiency.

LongCat-Image addresses four core pain points in current image generation models:

- Multilingual Text Rendering: Many models produce "garbled text" when generating Chinese; LongCat is specifically optimized for Chinese character rendering.

- Photorealism: Through innovative data strategies and training frameworks, the realism of the generated images reaches commercial-grade standards.

- Deployment Efficiency: 6B parameters mean lower GPU requirements and faster inference speeds.

- Developer-Friendly: It's completely open-source and supports ComfyUI workflow integration.

The model family includes:

| Model | Function | Release Date |
|---|---|---|
| LongCat-Image | Text-to-image (T2I) | 2025-12 |
| LongCat-Image-Edit | Image editing (15 tasks) | 2025-12 |
| LongCat-Image-Edit-Turbo | Accelerated editing version (10x speed) | 2026-02 |

4 Key Advantages of LongCat-Image

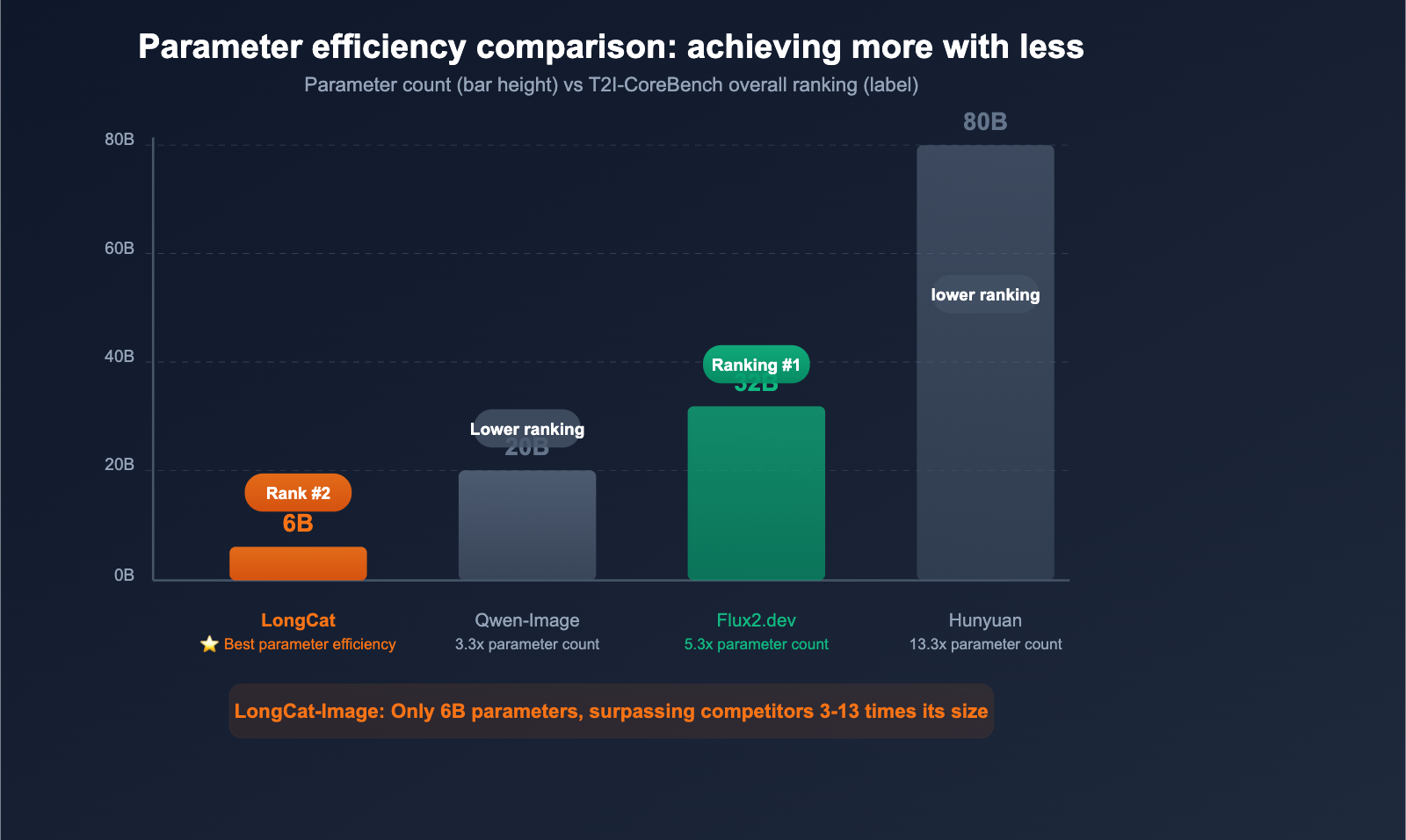

Advantage 1: Punching Above Its Weight with 6B Parameters

The most impressive feature of LongCat-Image is its parameter efficiency. In the T2I-CoreBench comprehensive evaluation:

| Model | Parameter Count | Overall Ranking (Open Source) | Size vs. LongCat-Image |
|---|---|---|---|
| Flux2.dev | 32B | #1 | 5.3x larger |
| LongCat-Image | 6B | #2 | ⭐ Price-Performance King |
| Qwen-Image | 20B | Below LongCat-Image | 3.3x larger |
| HunyuanImage-3.0 | 80B | Below LongCat-Image | 13.3x larger |
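The size comparison in this table is simply each model's parameter count divided by LongCat-Image's 6B. The short sketch below reproduces those ratios from the listed counts. The dictionary and helper name are illustrative only.

```python
# Parameter counts in billions, as listed in the T2I-CoreBench comparison table.
PARAMS_B = {
    "Flux2.dev": 32,
    "LongCat-Image": 6,
    "Qwen-Image": 20,
    "HunyuanImage-3.0": 80,
}


def size_ratio(model: str, baseline: str = "LongCat-Image") -> float:
    """How many times larger `model` is than `baseline`, rounded to one decimal."""
    return round(PARAMS_B[model] / PARAMS_B[baseline], 1)


if __name__ == "__main__":
    for name in PARAMS_B:
        if name != "LongCat-Image":
            print(f"{name}: {size_ratio(name)}x larger")
```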

The practical benefits of having only 6B parameters:

- Lower VRAM requirements: The model weights need roughly 5x less memory than those of a 32B model

- Faster inference: Fewer parameters mean less computation in each forward pass

- Lower deployment costs: Can run on lower-spec GPUs

- Edge deployment potential: Opens the door to future deployment on mobile and edge devices
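The VRAM claim follows from a simple fact: weight storage scales linearly with parameter count. Here is a back-of-the-envelope sketch. It assumes bf16 weights (2 bytes per parameter) and counts only the backbone's weights. Activations, the text encoder, the VAE, and framework overhead are ignored, so real VRAM use will be higher. The helper name and precision table are illustrative, not taken from the LongCat documentation.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp8": 1}


def weight_memory_gib(params_billion: float, precision: str = "bf16") -> float:
    """Approximate memory for the model weights alone, in GiB.

    Excludes activations, text encoder, VAE and framework overhead (assumption:
    weights dominate the comparison between two backbones).
    """
    total_bytes = params_billion * 1e9 * BYTES_PER_PARAM[precision]
    return total_bytes / 2**30


if __name__ == "__main__":
    for name, size in [("LongCat-Image", 6), ("Flux2.dev", 32)]:
        print(f"{name} ({size}B) @ bf16: ~{weight_memory_gib(size):.1f} GiB of weights")
    ratio = weight_memory_gib(32) / weight_memory_gib(6)
    print(f"Weight-memory ratio, 32B vs 6B: {ratio:.2f}x")
```

Because the ratio depends only on parameter count, it stays around 5.3x at any precision. Quantizing both models to fp8 halves both numbers but leaves the gap unchanged.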

Advantage 2: Leading the Way in Chinese Text Rendering

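This is arguably LongCat-Image's most distinctive capability. It scored 90.7 on the ChineseWord benchmark and covers all 8,105 characters in the Table of General Standard Chinese Characters (通用规范汉字表). That list is larger than GB2312, which contains 6,763 Chinese characters.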

Why does this matter? Many image generation models, including Midjourney, DALL-E, and Stable Diffusion, struggle with Chinese text, producing:

- Gibberish: Shapes that resemble Chinese characters but are not real characters

- Blurriness: Strokes that are unclear or illegible

- Misalignment: Chaotic placement and layout

LongCat-Image addresses these issues through a specialized training strategy, keeping titles, price tags, and UI text clear and readable within generated images. This is essential for e-commerce, social media, and advertising design.
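One simple way to sanity-check Chinese text rendering in your own outputs is to OCR the generated image and compare the result with the text you asked for. The sketch below assumes you already have the OCR string from an OCR tool of your choice (not shown). The metric is a hypothetical character recall for illustration. It is not the ChineseWord benchmark's official scoring protocol, and the function names are made up.

```python
from collections import Counter


def char_recall(target: str, ocr_text: str) -> float:
    """Fraction of target characters (counted with multiplicity) found in the OCR output.

    Whitespace is ignored and character order is not checked.
    """
    target_chars = Counter(ch for ch in target if not ch.isspace())
    ocr_chars = Counter(ch for ch in ocr_text if not ch.isspace())
    if not target_chars:
        return 1.0
    matched = sum((target_chars & ocr_chars).values())  # per-character min counts
    return matched / sum(target_chars.values())


def missing_chars(target: str, ocr_text: str) -> str:
    """Characters requested in the prompt that the OCR did not find."""
    diff = Counter(ch for ch in target if not ch.isspace()) - Counter(ocr_text)
    return "".join(diff.elements())


if __name__ == "__main__":
    target = "限时特价 99元"   # text requested in the poster prompt
    ocr = "限时特价9元"        # hypothetical OCR result with one digit dropped
    print(f"recall = {char_recall(target, ocr):.3f}")
    print("missing:", missing_chars(target, ocr))
```

Order-insensitive counting keeps the sketch short. A stricter check could use an edit distance so that swapped or misplaced characters are also penalized.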

Practical Application Examples:

- E-commerce Posters: Promotional images containing product names and prices in Chinese

- Social Media Covers: WeChat Channels and Xiaohongshu covers with Chinese titles

- Branding Materials: Brand promotional graphics with Chinese slogans

- UI Prototypes: Interface designs featuring Chinese labels

Advantage 3: Unified Architecture for Generation and Editing

LongCat-Image features a unified architecture that supports both text-to-image and image editing, with no need to switch models:

Text-to-Image (T2I) Capabilities:

- GenEval score: 0.87

- DPG-Bench score: 86.8

- Photorealistic quality that rivals closed-source commercial models

Image Editing Capabilities (15 Tasks):

- ImgEdit-Bench score: 4.50

- GEdit-Bench score: 7.60 (Chinese) / 7.64 (English)

- Supports background replacement, style transfer, object addition/removal, color adjustment, and more

Edit-Turbo Accelerated Version (Released Feb 2026):

- Achieves 10x speedup through model distillation

- Retains over 95% of the editing quality of the original version

- Ideal for production environments requiring rapid responses
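Even with a unified architecture, the family ships as separate checkpoints (see the model family table). Client code therefore still picks one per request. The sketch below shows minimal routing logic. It assumes a hypothetical request type. The model names come from the family table, but the class, function, and `latency_sensitive` flag are illustrative only.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ImageRequest:
    prompt: str
    source_image: Optional[bytes] = None  # present => this is an edit request
    latency_sensitive: bool = False       # e.g. interactive UI or large batch jobs


def pick_variant(req: ImageRequest) -> str:
    """Route a request to a LongCat-Image family checkpoint (hypothetical helper)."""
    if req.source_image is None:
        return "LongCat-Image"             # text-to-image
    if req.latency_sensitive:
        return "LongCat-Image-Edit-Turbo"  # distilled, ~10x faster editing
    return "LongCat-Image-Edit"            # full-quality editing


if __name__ == "__main__":
    print(pick_variant(ImageRequest("A red lantern poster titled 新年快乐")))
    print(pick_variant(ImageRequest("Replace the background with a beach", b"<png bytes>")))
    print(pick_variant(ImageRequest("Make it watercolor", b"<png bytes>", latency_sensitive=True)))
```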

🎯 Use Case Advice: If your application needs both image generation and editing, LongCat-Image's unified architecture can simplify your tech stack. APIYI (apiyi.com) has not integrated LongCat-Image yet. If you need it, contact us and we will evaluate adding it. For image generation, we currently focus on the Nano Banana Pro/2 series (Gemini image models), whose stability has been fully verified.

Advantage 4: Fully Open Source and Developer-Friendly

The open-source ecosystem for LongCat-Image is quite comprehensive:

| Resource | Description |
|---|---|
| GitHub Repository | github.com/meituan-longcat/LongCat-Image |
| HuggingFace Model | meituan-longcat/LongCat-Image |
| ComfyUI Support | Integrated as of March 2026, supports visual workflows |
| Technical Report | arxiv.org/abs/2512.07584 |

The open-source license allows for commercial use, enabling developers to:

- Download model weights directly for local deployment

- Build custom image workflows using ComfyUI

- Call the model via API on platforms like WaveSpeedAI or fal.ai

- Fine-tune the model to suit specific business scenarios
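For local deployment, you can fetch the weights from the HuggingFace repository listed in the resource table using `snapshot_download` from the `huggingface_hub` library. The minimal sketch below assumes `huggingface_hub` is installed and enough disk space is available. The helper names and the local directory are illustrative. Loading and running the model afterwards is not shown; follow the repository's own usage examples for that.

```python
from pathlib import Path

REPO_ID = "meituan-longcat/LongCat-Image"  # HuggingFace repo from the resource table


def model_page_url(repo_id: str = REPO_ID) -> str:
    """Browser URL of the model card."""
    return f"https://huggingface.co/{repo_id}"


def download_weights(local_dir: str = "./LongCat-Image") -> Path:
    """Download every file in the repo into `local_dir` and return that path.

    huggingface_hub is imported lazily so the rest of this snippet runs without it.
    """
    from huggingface_hub import snapshot_download

    return Path(snapshot_download(repo_id=REPO_ID, local_dir=local_dir))


if __name__ == "__main__":
    print("Model card:", model_page_url())
    # Uncomment to start the (multi-GB) download:
    # print("Saved to:", download_weights())
```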

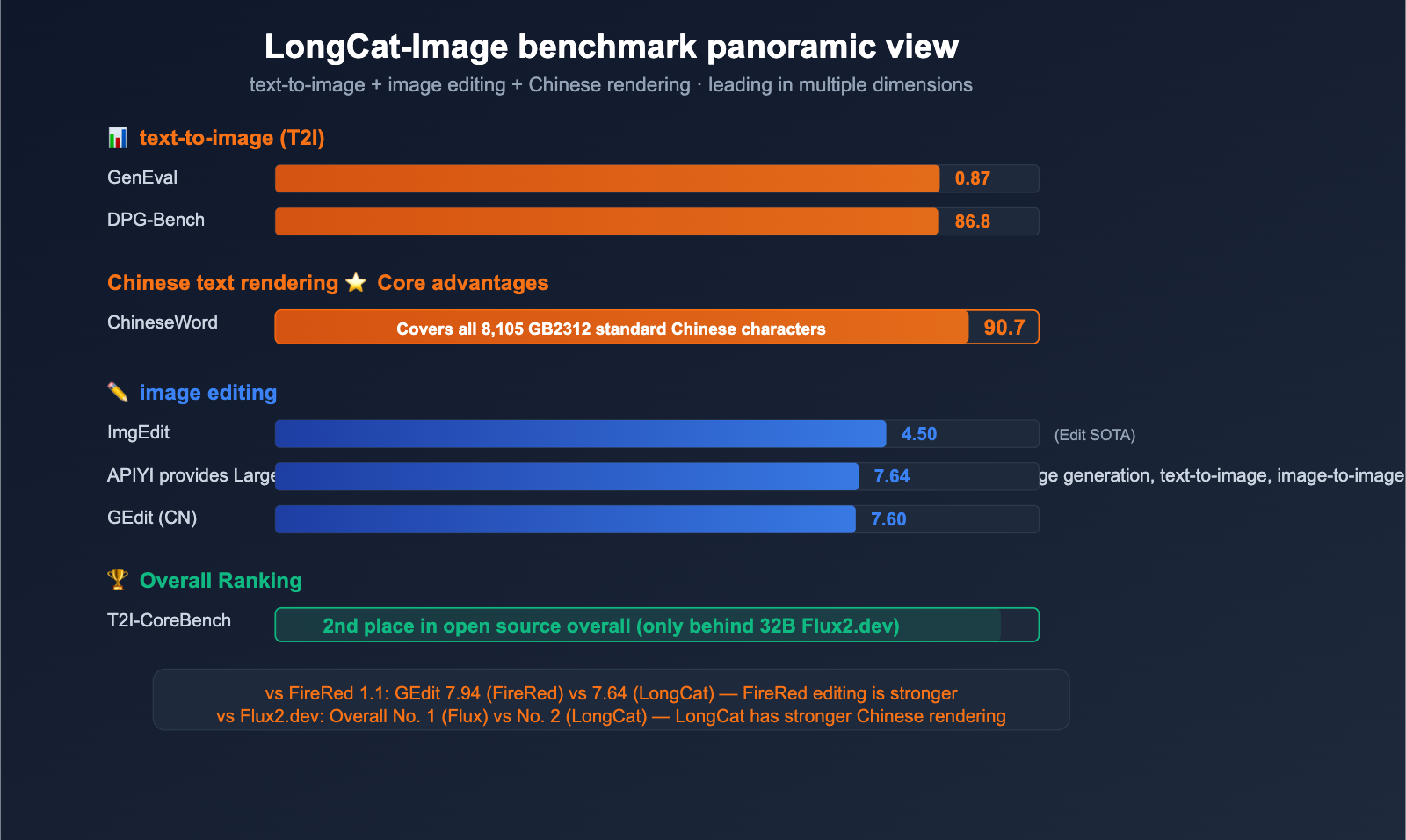

Comprehensive Analysis of LongCat-Image Benchmarks

Text-to-Image (T2I) Benchmarks

| Benchmark | LongCat-Image | What It Measures |
|---|---|---|
| GenEval | 0.87 | Compositional prompt following (objects, counts, colors, positions) |
| DPG-Bench | 86.8 | Alignment with dense, detailed prompts |
| ChineseWord | 90.7 | Accuracy of Chinese text rendering |
| T2I-CoreBench | 2nd among open-source models | Overall ranking |

Image Editing Benchmarks

| Benchmark | LongCat-Image-Edit | Description |
|---|---|---|
| ImgEdit-Bench | 4.50 | Overall editing quality |
| GEdit-Bench (Chinese) | 7.60 | Editing via Chinese instructions |
| GEdit-Bench (English) | 7.64 | Editing via English instructions |

Positioning Comparison with Other Models

| Model | Parameter Count | Core Strength | Chinese Rendering | Open Source |
|---|---|---|---|---|
| LongCat-Image | 6B | Chinese Rendering + Lightweight | ⭐⭐⭐⭐⭐ 90.7 | ✅ |
| FireRed Image Edit 1.1 | N/A | Identity Consistency + Editing | ⭐⭐⭐ | ✅ |
| Gemini Nano Banana Pro | N/A | Multi-turn Chat + Search | ⭐⭐ | ❌ |
| Flux2.dev | 32B | Best Overall Generation | ⭐⭐⭐ | ✅ |

💡 Recommendation: If your primary requirement is Chinese text rendering (for e-commerce, social media, etc.), LongCat-Image is currently the best choice. If you prioritize identity consistency in image editing, consider FireRed Image Edit 1.1. For the most stable commercial image generation API, the Nano Banana Pro/2 series, now available on the APIYI (apiyi.com) platform, is a proven and reliable solution.

LongCat-Image Technical Architecture

Hybrid MM-DiT Architecture

The core of LongCat-Image is the hybrid MM-DiT (Multi-Modal Diffusion Transformer):

- Unified Multimodal Context Encoder: Encodes text prompts, source images, and reference images into one shared context.

- Progressive Learning Strategy: Builds capabilities step by step, from simple tasks to complex ones.

- Dedicated Chinese Text Training: A specialized pipeline optimized for 8,105 standard Chinese characters.
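The article does not describe LongCat-Image's block-level design. What follows is only a toy illustration of the general MM-DiT idea as introduced with Stable Diffusion 3. Text and image tokens keep separate projection weights but attend to each other in one joint attention over the concatenated sequence. The single head, dimensions, random weights, and function names are all made up for illustration. This is not LongCat-Image's actual implementation.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def project(tokens: np.ndarray, w: dict) -> tuple:
    """Per-modality Q/K/V projections: each modality has its own weights."""
    return tokens @ w["q"], tokens @ w["k"], tokens @ w["v"]


def joint_attention(text: np.ndarray, image: np.ndarray, w_text: dict, w_image: dict):
    """Single-head joint attention over the concatenated [text; image] sequence."""
    qt, kt, vt = project(text, w_text)
    qi, ki, vi = project(image, w_image)
    q = np.concatenate([qt, qi], axis=0)
    k = np.concatenate([kt, ki], axis=0)
    v = np.concatenate([vt, vi], axis=0)
    attn = softmax(q @ k.T / np.sqrt(q.shape[-1]))  # every token attends to every token
    out = attn @ v
    n_text = text.shape[0]
    return out[:n_text], out[n_text:], attn  # split back into the two streams


def random_weights(rng: np.random.Generator, d_in: int, d_head: int) -> dict:
    return {name: rng.standard_normal((d_in, d_head)) for name in ("q", "k", "v")}


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    d_model, d_head = 16, 8
    text = rng.standard_normal((5, d_model))    # e.g. 5 prompt tokens
    image = rng.standard_normal((12, d_model))  # e.g. 12 latent image patches
    t_out, i_out, attn = joint_attention(
        text, image,
        random_weights(rng, d_model, d_head),
        random_weights(rng, d_model, d_head),
    )
    print(t_out.shape, i_out.shape, attn.shape)
```

Concatenating the two streams lets prompt tokens condition every image patch directly inside attention, rather than through a separate cross-attention layer.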

Training Data Strategy

The model was trained on a large, carefully curated dataset:

- Strategic Data Filtering: Data selection focused on photorealism and Chinese text rendering.

- Progressive Training: Phased training from basic generation to fine-grained editing.

- Quality-First: Strict data cleaning and quality filtration workflows.

Edit-Turbo Distillation Acceleration

The Edit-Turbo version, released in February 2026, achieves a 10x speedup through model distillation:

- Original Edit: Full quality, slower inference.

- Edit-Turbo: Over 95% of the original quality at 10x the speed.

- Use Cases: Real-time editing, batch processing, and latency-sensitive applications.
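Here is a back-of-the-envelope estimate of what a 10x speedup means for a batch job. The 20-second baseline latency, batch size, and GPU count are hypothetical placeholders, not measured LongCat figures. Substitute your own measurements.

```python
import math


def batch_hours(num_images: int, seconds_per_image: float, workers: int = 1) -> float:
    """Wall-clock hours for a batch, assuming each worker handles one image at a time
    and work is split evenly (no scheduling or I/O overhead)."""
    rounds = math.ceil(num_images / workers)
    return rounds * seconds_per_image / 3600


if __name__ == "__main__":
    BASELINE_S = 20.0  # hypothetical per-image latency of the original Edit model
    SPEEDUP = 10       # Edit-Turbo speedup reported in this article
    n, gpus = 10_000, 4
    print(f"Edit:       {batch_hours(n, BASELINE_S, gpus):.1f} h")
    print(f"Edit-Turbo: {batch_hours(n, BASELINE_S / SPEEDUP, gpus):.1f} h")
```

Under these placeholder numbers the batch drops from roughly 13.9 hours to about 1.4 hours. The quality cost is the small drop noted above.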

LongCat-Image API Integration and Deployment

Third-Party API Platforms

| Platform | Supported Models | Features |
|---|---|---|
| WaveSpeedAI | T2I + Edit | AI image model acceleration platform |
| fal.ai | T2I + Edit | Serverless deployment |
| Replicate | T2I + Edit | Pay-per-use billing |
| ComfyUI | T2I + Edit + Turbo | Local visual workflow |

Local Deployment

- Recommended GPU: NVIDIA A100 (40GB) or H100

- Model Source: HuggingFace (meituan-longcat/LongCat-Image)

- ComfyUI Integration: Supported since March 2026, ready to use out of the box

APIYI Platform Notes

LongCat-Image is not currently available on the APIYI platform.

🔔 Integration Notice: APIYI (apiyi.com) primarily offers the Nano Banana Pro/2 series (Google Gemini image models) for image generation, which is our most reliable and high-performance solution. If you have a specific API requirement for LongCat-Image (especially for Chinese text rendering), feel free to reach out to the APIYI team; we'd be happy to evaluate its integration based on client demand.

LongCat-Image Use Cases

Best Scenarios for LongCat-Image

- Chinese E-commerce Assets: Generating posters that include Chinese product names, prices, and promotional copy.

- Chinese Social Media Content: Covers for platforms like Little Red Book (Xiaohongshu), WeChat Official Accounts, and Douyin that require text.

- Chinese Branding: Design drafts featuring Chinese slogans and brand names.

- Chinese UI Prototypes: Application prototypes featuring Chinese interface elements.

Scenarios Where Other Models Are Recommended

- Pure English Content Generation: Flux2.dev or DALL-E 3 might perform better.

- Precise Portrait Editing: FireRed Image Edit 1.1 offers better face consistency.

- Stable Commercial API Needs: The Nano Banana Pro/2 series is already verified and running on the APIYI platform.

- Conversational Image Generation: Gemini 3.1 Flash Image supports multi-turn interactions.
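The scenario recommendations in this section reduce to a small lookup table. The scenario keys and helper are illustrative; the mapping only restates this article's suggestions and is not an external ranking.

```python
# Scenario -> suggested model, restating this article's recommendations.
RECOMMENDATIONS = {
    "chinese_text": "LongCat-Image",  # e-commerce, social covers, branding, UI
    "english_only": "Flux2.dev or DALL-E 3",
    "portrait_editing": "FireRed Image Edit 1.1",
    "stable_commercial_api": "Nano Banana Pro/2 (via APIYI)",
    "conversational": "Gemini 3.1 Flash Image",
}


def recommend(scenario: str) -> str:
    """Return the suggested model for a scenario key (keys are hypothetical)."""
    try:
        return RECOMMENDATIONS[scenario]
    except KeyError:
        known = ", ".join(sorted(RECOMMENDATIONS))
        raise ValueError(f"unknown scenario {scenario!r}; expected one of: {known}") from None


if __name__ == "__main__":
    for key in RECOMMENDATIONS:
        print(f"{key:>22}: {recommend(key)}")
```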

🚀 Quick Start: If you need a stable and reliable image generation API right now, we recommend using the Nano Banana Pro/2 series via APIYI (apiyi.com). It’s the most mature image generation solution on the APIYI platform, featuring unified interface access and stability verified by a large user base.

FAQ

Q1: What is the difference between LongCat-Image and FireRed Image Edit 1.1?

They serve different purposes. LongCat-Image is a unified model for "generation + editing," with its core strengths lying in Chinese text rendering (ChineseWord 90.7) and parameter efficiency (6B). FireRed Image Edit 1.1 focuses specifically on image editing, with its core strength being face consistency (preserving identity during portrait editing). If your use case is primarily Chinese content generation, go with LongCat; if you need precise portrait editing, choose FireRed.

Q2: Can a 6B parameter model really outperform an 80B one?

On several benchmarks, yes. LongCat-Image ranks 2nd among open-source models on T2I-CoreBench, ahead of Qwen-Image (20B) and HunyuanImage-3.0 (80B). The Meituan team credits this to its data strategy, architectural design, and training methodology. That said, larger models may still hold an edge in some extreme scenarios.

Q3: When will APIYI support LongCat-Image?

There is no set timeline yet. For image generation, APIYI (apiyi.com) currently prioritizes the Nano Banana Pro/2 series, our most refined and stable solution. If you have a specific need for LongCat-Image (especially for Chinese text rendering), please reach out so we can evaluate adding it.

Q4: How does LongCat-Image-Edit-Turbo differ from the original version?

Edit-Turbo is a distilled acceleration version released in February 2026. Its inference speed is 10x faster than the original while maintaining over 95% of the original editing quality. It's ideal for production environments where responsiveness is key. Both versions are already supported in ComfyUI.

Summary

Key highlights of Meituan's LongCat-Image:

- Punching Above Its Weight: With 6B parameters, it ranks 2nd among open-source models in T2I-CoreBench, outperforming several 20B-80B models.

- King of Chinese Rendering: It scores 90.7 on ChineseWord and covers all 8,105 standard Chinese characters, making it the top choice for Chinese-language scenarios.

- Unified Generation and Editing: A single model supports both text-to-image and 15 types of editing tasks, with the Edit-Turbo version offering a 10x speed boost.

- Fully Open Source: Available for download on HuggingFace, integrated with ComfyUI, and licensed under Apache 2.0.

For Chinese content generation (e-commerce, social media, brand design), LongCat-Image's Chinese text rendering capability is a unique competitive advantage.

APIYI (apiyi.com) currently provides the Nano Banana Pro/2 series for image generation, which remains our most mature and stable solution. If you need LongCat-Image access, please contact our team for an assessment.

📚 References

- LongCat-Image GitHub Repository: Official code and documentation
  - Link: github.com/meituan-longcat/LongCat-Image
  - Description: Full source code, model weight downloads, and usage examples

- LongCat-Image HuggingFace: Model weight downloads
  - Link: huggingface.co/meituan-longcat/LongCat-Image
  - Description: Direct download of model weights for local deployment

- LongCat-Image Technical Report: Academic paper
  - Link: arxiv.org/abs/2512.07584
  - Description: Complete architectural design, training strategies, and evaluation data

- LongCat AI Official Website: Meituan's LongCat model family
  - Link: longcatai.org
  - Description: Introductions to the full range of LongCat models (Image, Video, Next, etc.)

Author: APIYI Technical Team

Technical Community: Feel free to share your AI image generation requirements in the comments. For more model news, visit the APIYI documentation center at docs.apiyi.com