In the early hours of April 7, 2026, a name no one had ever heard of—HappyHorse-1.0—suddenly appeared on the Artificial Analysis Video Arena leaderboard. It snatched three first-place spots and one second-place spot across four core categories, leaving the previous "ruler," ByteDance's Seedance 2.0, trailing by a full 60 Elo points. Yet, within 72 hours, the model vanished from the leaderboard, and to this day, no team has stepped forward to claim it. This article provides a quick breakdown of what this development means for the AI video generation landscape.

Core Value: Spend 3 minutes getting up to speed on the key facts, capability breakthroughs, and theories behind HappyHorse-1.0, and what it signals for the current AI video generation race.

Quick Look: HappyHorse-1.0 Key Facts

HappyHorse-1.0 was a video generation model that arrived on the Artificial Analysis Video Arena via an "anonymous submission." It had no public GitHub, no official HuggingFace weights, and no accessible API, yet it placed first on three of the four core leaderboards and second on the fourth, ahead of public models such as ByteDance's Seedance 2.0, Google Veo, and OpenAI's Sora.

| Feature | Details |
|---|---|
| Model Name | HappyHorse-1.0 (including V2 variant) |
| Appearance Date | Early morning, April 7, 2026 |
| Platform | Artificial Analysis Video Arena |
| Submission Method | Anonymous |
| Model Scale | ~15B parameters |
| Architecture | 40-layer single-stream Self-Attention Transformer |
| Multimodal | Joint pre-training for text / video / audio |
| Public Status | ❌ No source code · No weights · No API |
| Origin Theory | Alibaba Tongyi Lab (Wan 2.7?) |
| Current Status | Removed from the leaderboard |

💡 Quick Take: HappyHorse-1.0 wasn't a "product," but rather an "anonymous capability stress test." This "submit anonymously, then reveal later" release pattern has appeared multiple times over the past year on LMArena, Image Arena, and Code Arena, and almost every time, a top-tier model lab has been behind it. If you're looking to compare and experience currently available video generation models, you can quickly access the leading candidates via the APIYI (apiyi.com) platform.

HappyHorse-1.0 Arena Ranking Performance

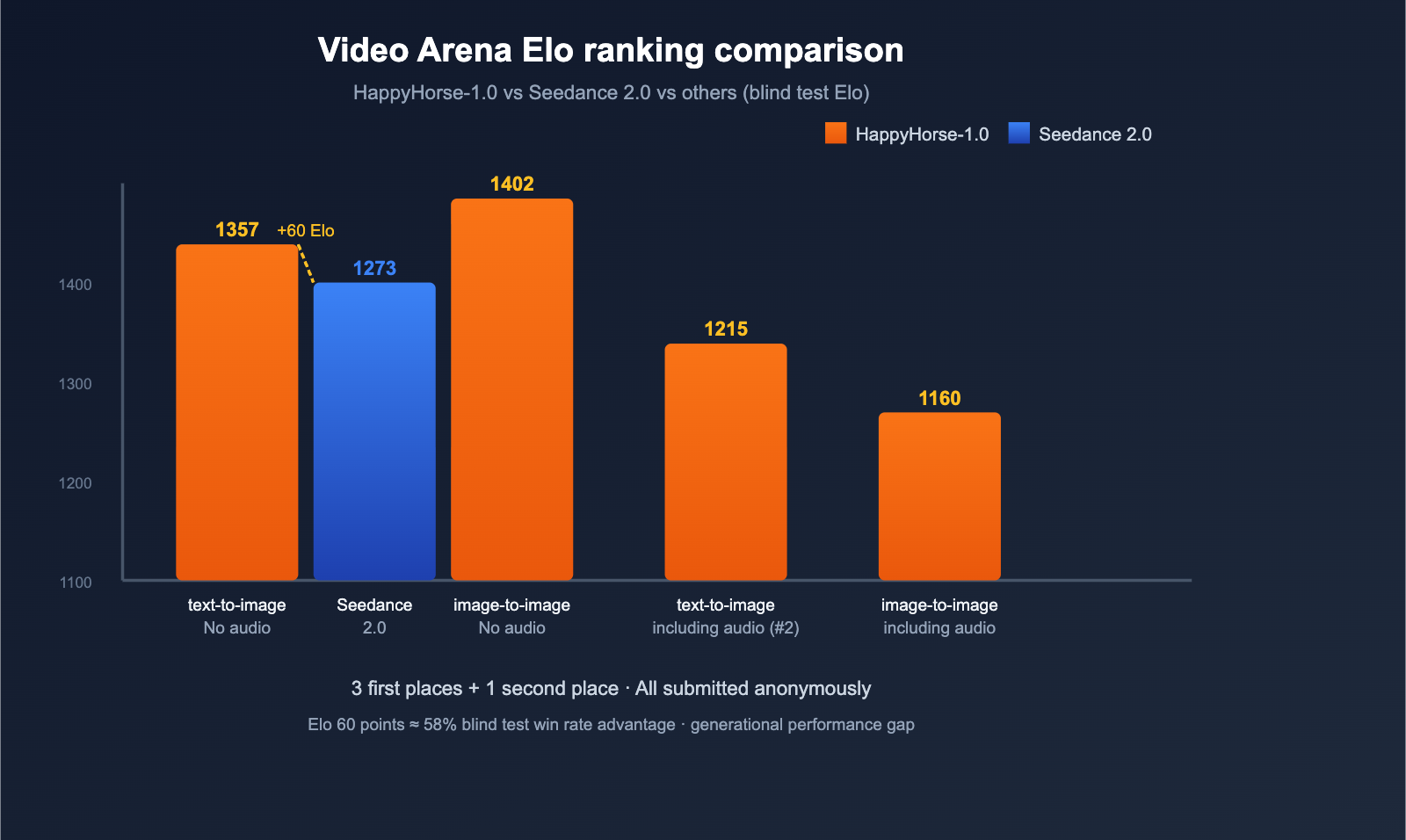

Artificial Analysis is currently one of the most authoritative blind-test leaderboards in the AI video generation field. It uses an Elo rating + user blind-voting system, which helps avoid biased claims from vendors. Here’s how HappyHorse-1.0 performed when it hit the leaderboard on April 7th:

| Category | HappyHorse-1.0 Elo | Rank | Previous Leader |
|---|---|---|---|
| Text-to-Video (No Audio) | 1333-1357 | 🥇 #1 | Seedance 2.0 (1273) |
| Image-to-Video (No Audio) | 1392-1402 | 🥇 #1 | — |
| Text-to-Video (With Audio) | 1205-1215 | 🥈 #2 | — |
| Image-to-Video (With Audio) | 1160 | 🥇 #1 | — |

The most impressive result is in the Text-to-Video (No Audio) category: HappyHorse-1.0 debuted at least 60 Elo points above the previous leader, ByteDance's Seedance 2.0 (1333-1357 versus 1273). Under the standard Elo formula, a 60-point gap corresponds to an expected win rate of roughly 58% in head-to-head blind votes, which is a significant, arguably generational, leap.
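To make the Elo arithmetic concrete, here is a minimal sketch of how a rating gap maps to an expected win rate. It assumes the conventional 400-point logistic Elo scale; Artificial Analysis may compute its ratings differently, so treat the function name `expected_win_rate` and the resulting percentages as illustrative rather than as the leaderboard's own method.

```python
def expected_win_rate(elo_gap: float) -> float:
    """Expected score of the higher-rated model on the standard Elo curve.

    Assumes the conventional 400-point logistic scale (an assumption; the
    leaderboard's exact rating method is not described in this article).
    """
    return 1.0 / (1.0 + 10.0 ** (-elo_gap / 400.0))


# Text-to-Video (No Audio) figures quoted in the table above.
happyhorse_low, happyhorse_high = 1333, 1357
seedance = 1273

for label, rating in (("low end", happyhorse_low), ("high end", happyhorse_high)):
    gap = rating - seedance
    rate = expected_win_rate(gap)
    print(f"{label}: gap {gap} Elo -> expected win rate {rate:.1%}")
```

Under that assumption, the reported 1333-1357 range implies HappyHorse-1.0 would be preferred in somewhere between roughly 58% and 62% of blind pairings against Seedance 2.0.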

Speculating on the Technical Architecture of HappyHorse-1.0

While there isn't an official paper yet, the release page for HappyHorse-1.0 and third-party analyses from sources like WaveSpeedAI have revealed quite a few architectural details. None of these details has been independently verified. If they hold true, and if the promised open release actually follows, it would mark the open-source community's first successful "end-to-end audio-video joint pre-training."

Single-Stream Unified Transformer

HappyHorse-1.0 uses a 40-layer Self-Attention Transformer that packs text tokens, reference image latents, noisy video tokens, and noisy audio tokens into a single sequence for joint denoising. This is a major departure from the current mainstream "dual-tower + Cross-Attention" approach (like Sora or Seedance):

- First and last 4 layers: Use modality-specific projections to handle inputs and outputs.

- Middle 32 layers: Parameters are fully shared, treating all modalities equally.

- No Cross-Attention: All modalities interact within the same attention mechanism.

This "all-Self-Attention single-stream" path resembles the unified-sequence direction that multimodal models such as GPT-4o and Gemini 2.x are reported to be exploring, but this would be the first time it is paired with a promised open release and top-tier performance in video generation.
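The single-stream idea described above can be sketched in a few lines of plain Python. This is a toy illustration, not HappyHorse-1.0's implementation: the hidden width, token counts, input widths, and helper names (`project`, `self_attention`, `layer_plan`) are all hypothetical, learned query/key/value weights are omitted, and only one attention pass is run.

```python
import math
import random

D_MODEL = 8  # toy shared width (hypothetical; the real hidden size is not public)


def project(tokens, weight):
    """Modality-specific linear projection of each token into the shared width."""
    return [[sum(x * w for x, w in zip(tok, row)) for row in weight] for tok in tokens]


def self_attention(seq):
    """One head of plain self-attention over the packed sequence.

    Queries, keys and values are the tokens themselves (no learned weights),
    so this only shows that every token attends to every other token.
    """
    scale = 1.0 / math.sqrt(len(seq[0]))
    out = []
    for q in seq:
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in seq]
        peak = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(s - peak) for s in scores]
        total = sum(weights)
        out.append([sum(w / total * v[i] for w, v in zip(weights, seq))
                    for i in range(len(q))])
    return out


def layer_plan(num_layers=40, edge=4):
    """First and last `edge` layers are modality-specific; the rest are shared."""
    return ["modality-specific" if i < edge or i >= num_layers - edge else "shared"
            for i in range(num_layers)]


rng = random.Random(0)


def rand_matrix(rows, cols):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


# Hypothetical (token count, input width) per modality.
inputs = {"text": (3, 4), "image": (2, 6), "video": (5, 6), "audio": (4, 5)}

packed, segments = [], []
for name, (count, width) in inputs.items():
    tokens = rand_matrix(count, width)
    weight = rand_matrix(D_MODEL, width)  # one projection matrix per modality
    packed.extend(project(tokens, weight))
    segments.extend([name] * count)

mixed = self_attention(packed)  # a single pass over all modalities at once
plan = layer_plan()
print(len(packed), "tokens in one sequence;", plan.count("shared"), "shared layers")
```

The point of the sketch is the data flow: each modality gets its own projection at the edges, after which text, image, video, and audio tokens sit in one sequence and interact through ordinary self-attention, with no separate cross-attention path.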

Joint Audio-Video Generation

HappyHorse-1.0 claims to support single-inference generation of video + dialogue + ambient sound + Foley effects, covering 6 languages (Chinese, English, Japanese, Korean, German, and French). Secondary sites also claim it supports Cantonese and "ultra-low WER lip-syncing."

If these metrics are accurate, HappyHorse-1.0's lead in "end-to-end audio-video pre-training" might be even wider than what the leaderboard suggests.

Inference Speed

| Configuration | Time |
|---|---|
| 5s 256p video | ~2s |
| 5s 1080p video (H100) | ~38s |

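If these numbers are real, they are aggressive for a 15B-parameter video model. The 256p setting would run faster than real time (5 seconds of video in about 2 seconds), while 1080p on an H100 would need roughly 7.6 seconds of compute per second of output, still short of real-time generation but closer than most models of this size.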
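A quick way to read the speed table is as a real-time factor: seconds of compute per second of generated video. The sketch below uses only the two reported figures; the function name `real_time_factor` is introduced here for illustration, and the figures themselves remain unverified claims.

```python
def real_time_factor(clip_seconds: float, generation_seconds: float) -> float:
    """Seconds of compute per second of output; below 1.0 is faster than real time."""
    return generation_seconds / clip_seconds


# (clip length, reported generation time) from the table above, in seconds.
reported = {
    "5s 256p": (5.0, 2.0),
    "5s 1080p (H100)": (5.0, 38.0),
}

for name, (clip, gen) in reported.items():
    rtf = real_time_factor(clip, gen)
    if rtf < 1.0:
        verdict = "faster than real time"
    else:
        verdict = f"{rtf:.1f}x slower than real time"
    print(f"{name}: RTF {rtf:.2f} ({verdict})")
```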

Speculating on the Origins of HappyHorse-1.0

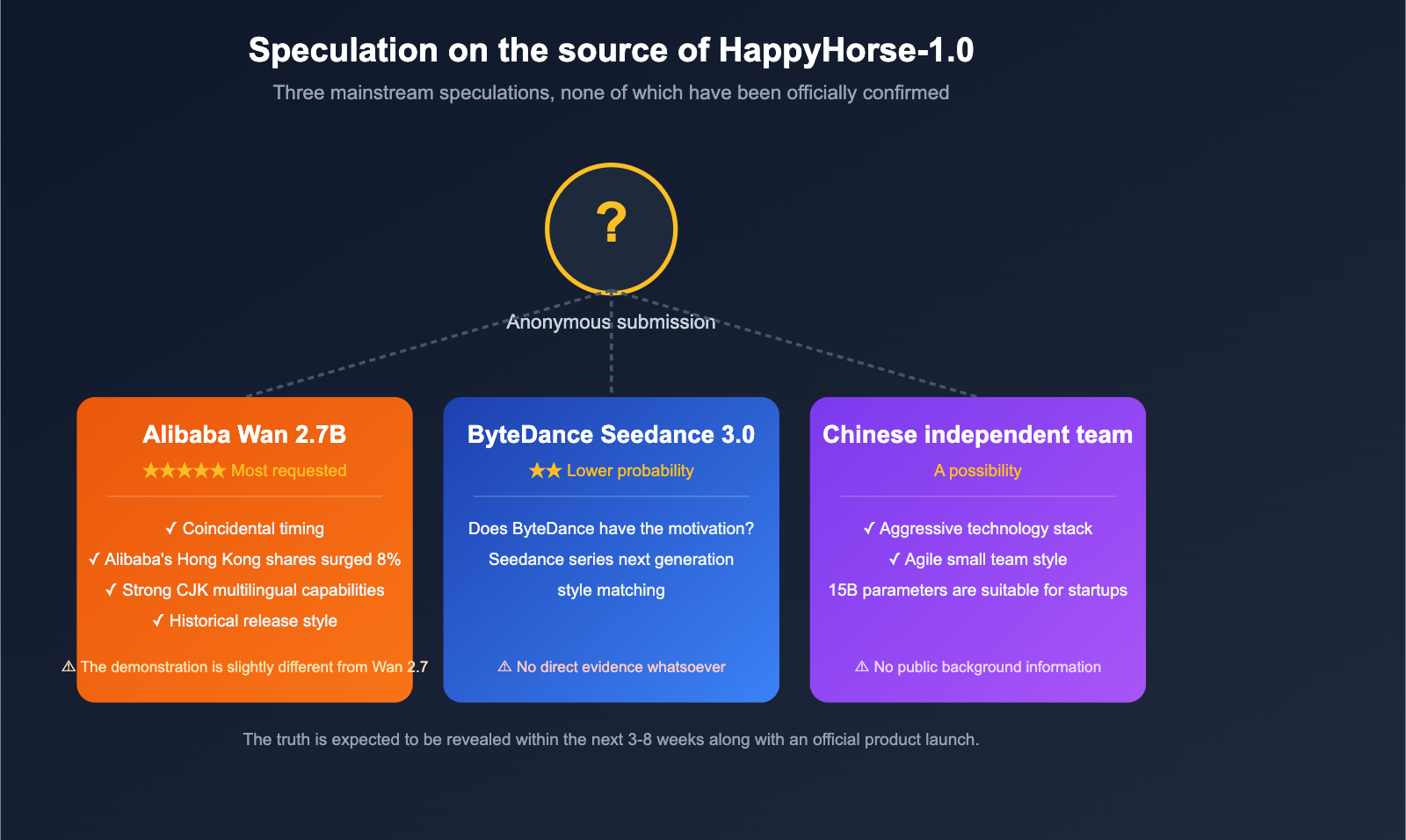

Because the submission was anonymous, Artificial Analysis had to label the model as "pseudonymous" on their leaderboard. However, the community has been busy piecing together clues to form a few main theories.

Theory 1: Alibaba Tongyi Lab (Wan 2.7)

This is currently the most popular theory in the community, and there are four main reasons why:

- Timing: HappyHorse-1.0 hit the top of the charts on April 7th. Since Alibaba's Tongyi Lab released Wan 2.6 in early April, the industry was already expecting the next iteration, Wan 2.7, to drop soon.

- Market Reaction: Alibaba's stock on the Hong Kong exchange rose nearly 8% after HappyHorse appeared on the leaderboard, suggesting the market clearly linked the two.

- CJK Language Proficiency: The model shows exceptional support for Chinese, Japanese, and Korean, which is a hallmark of teams from East Asia.

- Anonymous Testing Style: Alibaba has previously used a "soft launch/internal testing followed by official release" strategy for their Qwen and Wan series.

However, the company hasn't confirmed anything, and the official Wan 2.7 released by Alibaba in April didn't perfectly match the performance seen in some of HappyHorse's public demos. So, this remains unverified.

Theory 2: ByteDance / Other Tech Giants

Some speculate that HappyHorse might be a next-gen variant of ByteDance's Seedance series. Since it outperformed Seedance 2.0, some think ByteDance might have been using an anonymous handle to stress-test Seedance 3.0. As of now, there’s zero evidence to back this up.

Theory 3: A New Independent Chinese Team

Others guess that HappyHorse comes from a brand-new, under-the-radar Chinese startup. The technical stack—"15B + full Self-Attention + joint audio-video processing"—is quite aggressive, which feels more like the work of a nimble, unencumbered small team.

🎯 Keep it real: Until an official entity steps forward, any claims that "it's Alibaba," "it's ByteDance," or "it's some unicorn" are just guesses. What really matters isn't "who it is," but rather "how high the performance ceiling has been pushed," and how we can use currently available video models to build real-world applications. If you need to integrate mainstream video models for product validation right now, you can use the APIYI (apiyi.com) API proxy service to access multiple models in one place.

The 72 Hours When HappyHorse-1.0 Mysteriously Vanished

The most dramatic part of the story is the "disappearance" of HappyHorse-1.0. Less than 72 hours after topping the Artificial Analysis leaderboard on the morning of April 7th, the model was suddenly pulled, leaving behind only:

- A few screenshots circulating on social media.

- An encrypted landing page (claiming "GitHub / HuggingFace coming soon," though the links were dead).

- An official statement from Artificial Analysis confirming it had been "withdrawn."

This "climb the leaderboard → vanish" script has played out on LMArena before: early anonymous versions of GPT-4 Turbo, previews of Claude 3.5 Sonnet, and early variants of Gemini 2.0 Flash all followed a similar path. Almost every time an anonymous model disappears like this, an official product launch follows within 3 to 8 weeks.

The industry consensus on HappyHorse is pretty clear: this was likely a top-tier team gathering real-world user preference data to prepare for an official release.

The Impact of HappyHorse-1.0 on the AI Video Generation Industry

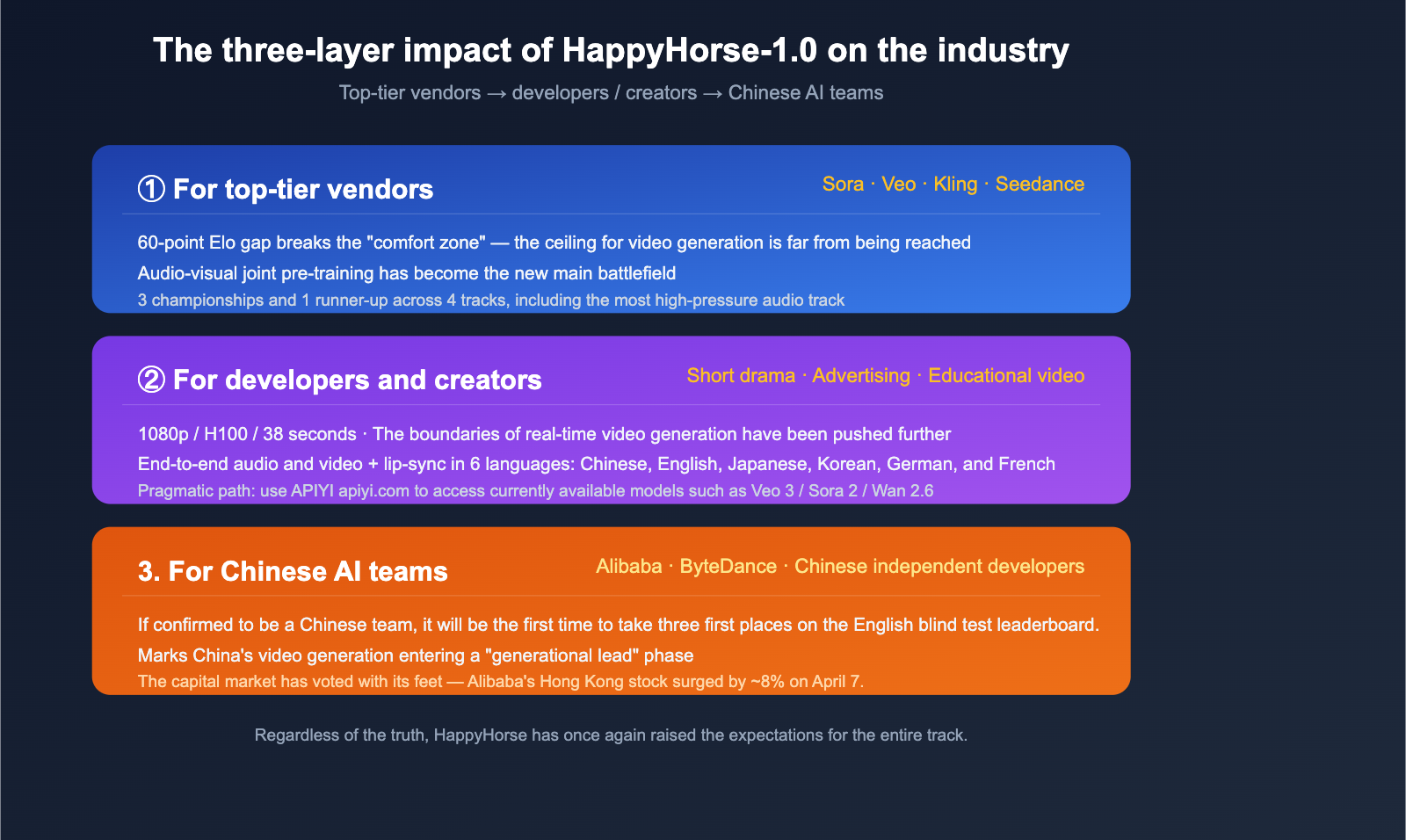

Even if HappyHorse-1.0 never sees a public release, it has already made a tangible impact on the AI video generation landscape.

Impact on Leading Players

- Disrupting the "Comfort Zone" of Seedance 2.0, Veo 3, and Sora 2: A 60-point Elo gap proves that we're nowhere near the performance ceiling for video generation.

- Audio-Video Co-generation as the New Battlefield: HappyHorse took first place in 3 out of 4 categories, with the audio-video joint category being the most competitive. This will force all major players to accelerate their audio-video pretraining roadmaps.

- Pressure on Open/Semi-Open Models: HappyHorse's "Everything is open" claim (even if not yet fully realized) puts significant pressure on closed-source products like Sora.

Impact on Developers and Creators

- Resetting Capability Expectations: A reported ~38 seconds to generate a 5-second 1080p clip on a single H100 resets expectations for cost and latency, and makes "real-time video generation" feel much less like science fiction.

- Multilingual + Lip-Syncing: End-to-end audio-video generation across six languages (Chinese, English, Japanese, Korean, German, and French) is a massive win for short-form dramas, advertising, and educational content.

- The Pragmatic Path Remains: Until HappyHorse is truly available, mastering existing models like Veo 3, Sora 2, Kling 2, and Wan 2.6 is still the best way to build real-world products. You can access these easily through the APIYI API proxy service at apiyi.com.

Impact on Chinese AI Teams

If HappyHorse is confirmed to be the work of Alibaba or another Chinese team, it would mark the first time a Chinese AI video generation model has taken three top spots on a "region-agnostic" English blind-test leaderboard like Artificial Analysis. This achievement carries weight far beyond the model itself.

HappyHorse-1.0 FAQ

Q1: Can I use HappyHorse-1.0 right now?

No. It only appeared briefly in the Artificial Analysis blind-test Arena for about 72 hours. There is no public API, no downloadable weights, and no accessible GitHub repository. If you need to invoke top-tier video generation models immediately, you can access currently available models like Veo 3, Sora 2, Kling 2, and Wan 2.6 via APIYI (apiyi.com).

Q2: Is HappyHorse-1.0 actually Alibaba’s Wan 2.7?

There is no official confirmation. The community cites four circumstantial reasons (timing, Alibaba's stock surge, CJK multilingual capabilities, and Alibaba's historical release style). However, the demos shown after Wan 2.7's official release in April don't perfectly align with HappyHorse. The most accurate stance is that it is plausible, and the most popular theory, but unconfirmed.

Q3: What does its 60-point Elo lead actually mean?

In the Elo rating system, a 60-point lead roughly corresponds to a 58% win rate in blind tests. This is a significant gap, though not insurmountable. For context, the shifts between Sora, Veo, Kling, and Seedance over the past year have typically stayed within a 20-40 point range. HappyHorse entering with a 60-point lead is truly a generational leap.

Q4: How radical is its “15B parameters + full Self-Attention single-stream” architecture?

It's extremely radical. Most current mainstream video models use a "dual-tower + Cross-Attention" or "DiT + cross-modal projection" hybrid approach. HappyHorse packs audio, video, and text into a single 40-layer Transformer with shared parameters. This is the same technical path that GPT-4o and Gemini 2.x are exploring for multimodal tasks, but it's the first time it has been validated with top-tier performance in video generation.

Q5: Why did it disappear after 72 hours on the leaderboard?

This is a common "data collection pattern" for anonymous models. Early versions of GPT-4 Turbo, the preview of Claude 3.5 Sonnet, and early variants of Gemini 2.0 Flash all followed the same process: launch → collect real-world preference data → withdraw → official release a few weeks later. Expect HappyHorse to return under an official name within 3-8 weeks.
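For readers who want the "3-8 weeks" estimate as calendar dates, here is a small sketch. It assumes the withdrawal happened at the 72-hour upper bound (April 10, 2026), which the article does not state exactly, so the resulting window is a rough guide and not a prediction from any vendor.

```python
from datetime import date, timedelta

first_seen = date(2026, 4, 7)

# Assumption: withdrawal at the 72-hour upper bound mentioned in the article.
latest_withdrawal = first_seen + timedelta(days=3)

# The 3-to-8-week pattern described above, counted from the withdrawal date.
window_start = latest_withdrawal + timedelta(weeks=3)
window_end = latest_withdrawal + timedelta(weeks=8)

print(f"Withdrawn by:       {latest_withdrawal.isoformat()}")
print(f"Possible return in: {window_start.isoformat()} to {window_end.isoformat()}")
```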

Q6: What video models can I use to replace HappyHorse for now?

Currently, the most capable models available include: Google Veo 3 (top-tier general quality), OpenAI Sora 2 (strong cinematography), Kling 2 (excellent portrait/action), ByteDance's Seedance 2.0 (strong in Chinese contexts), and Alibaba's Wan 2.6 (open-source and easy to deploy). If you need to use a unified interface to call these models for comparative testing, you can use the APIYI (apiyi.com) platform to integrate them all at once, avoiding the hassle of switching between multiple account systems.

Summary

HappyHorse-1.0 was the most dramatic "anonymous drop" in the AI video generation space in April 2026. It signifies three major shifts:

- The ceiling for video generation has been raised again: With a 60-point Elo lead and 3 wins and 1 runner-up across 4 categories, it’s clear that the "strongest video model" we see today is nowhere near its limit.

- Joint audio-video generation is the new main battlefield: HappyHorse placed first in image-to-video with audio and second in text-to-video with audio, and that showing will push the entire industry to prioritize end-to-end audio-video pretraining.

- "Anonymous → Retracted → Official Release" is becoming the standard launch paradigm for flagship models: We expect a familiar vendor to officially claim it within the next 3 to 8 weeks.

🚀 Actionable Advice: Before HappyHorse is fully available, the most practical move isn't to guess its origins, but to master the top-tier video models already at your disposal. Models like Veo 3, Sora 2, Kling 2, Seedance 2.0, and Wan 2.6 can easily cover 90% of real-world production scenarios. We recommend using APIYI (apiyi.com) to access mainstream video models through a single platform. With pay-as-you-go billing and the ability to switch models on the fly, you'll be ready to migrate seamlessly the moment HappyHorse or Wan 2.7 officially opens up.

Author: APIYI Team — Dedicated to providing developers with stable access to mainstream AI Large Language Models. Visit apiyi.com to learn more.

References

- WaveSpeedAI Blog – What Is HappyHorse-1.0
  - Link: wavespeed.ai/blog/posts/what-is-happyhorse-1-0-ai-video-model
  - Description: Third-party in-depth analysis of architecture, parameters, and inference speed.

- Artificial Analysis – Video Arena Leaderboard
  - Link: artificialanalysis.ai/video/leaderboard/text-to-video
  - Description: Original rankings for both Text-to-Video and Image-to-Video categories.

- Phemex News – HappyHorse Tops Rankings
  - Link: phemex.com/news/article/happyhorse10-surpasses-seedance-20-in-ai-video-model-rankings-71750
  - Description: Comparative data between HappyHorse and Seedance 2.0.

- 36Kr – Mysterious Model HappyHorse
  - Link: eu.36kr.com/en/p/3757826958635781
  - Description: Industry interpretation of the anonymous model's debut.

- Futunn – Alibaba Hong Kong Stock Surges Due to HappyHorse
  - Link: news.futunn.com/en/post/71208943
  - Description: Capital market reactions to speculations regarding its origin.

- DEV Community – Who Developed HappyHorse
  - Link: dev.to/calvin_claire_451169e1b82
  - Description: Community discussions and various theories regarding the development team.