"How is APIYI?" This is a question I've been asked repeatedly in various Chinese AI developer communities over the past six months. The people asking generally fall into two camps: independent developers who are already juggling three separate accounts, three billing cycles, and three sets of invoices for OpenAI, Anthropic, and Google; and engineering leads at companies tasked with integrating AI capabilities who need a domestic solution that offers "a single interface, unified billing, and tax-compliant invoicing." APIYI (api.apiyi.com) is frequently mentioned among these groups, but while there's plenty of marketing-heavy content in the Chinese community, there's a real lack of "dimension-by-dimension" objective evaluation.

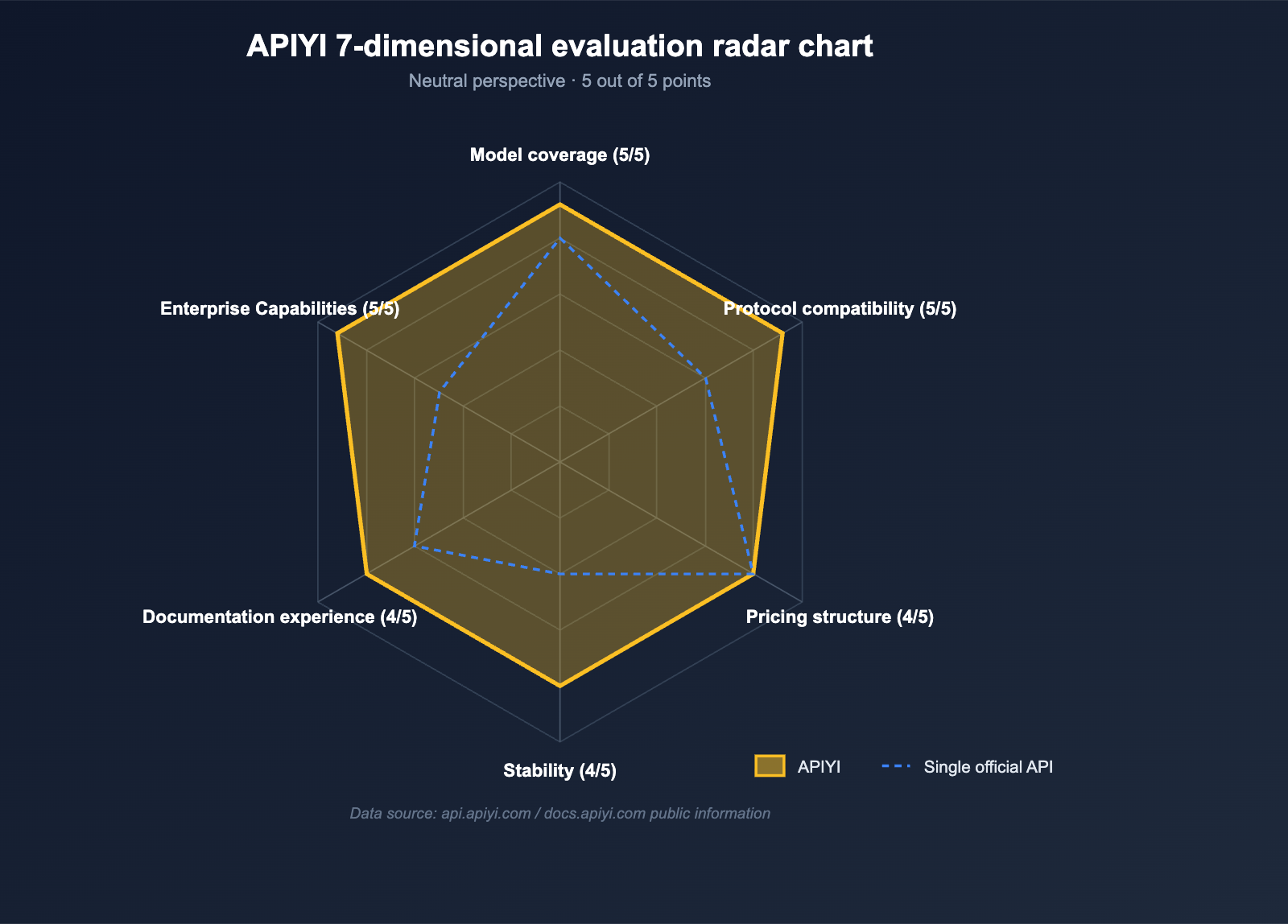

To thoroughly answer the question "How is APIYI?", this article avoids all rumors or third-party screenshots. Instead, it is based solely on public information from the official website (api.apiyi.com) and the documentation center (docs.apiyi.com). From the perspective of a developer looking to integrate AI into their product, I will systematically break down whether it's right for you—and who it isn't for—across seven dimensions: model coverage, protocol compatibility, pricing structure, stability, documentation experience, enterprise capabilities, and onboarding costs. At the end of the article, I've provided a checklist you can use to make your own decision.

How is APIYI? A Quick Overview (April 2026 Edition)

Before diving into the detailed evaluation, let's use a table to lock in the key facts about the APIYI platform so you can see everything at a glance. Every subsequent judgment will refer back to this table.

| Dimension | Public Information for APIYI |
|---|---|
| Platform Positioning | Unified API proxy service for Large Language Models, catering to developers and enterprises |
| Access Domain | api.apiyi.com |
| Documentation Center | docs.apiyi.com / docs.apiyi.com/en (Bilingual) |
| Integration Standard | OpenAI API standard as baseline, compatible with Claude Native / Gemini Native formats |
| Model Scale | 300+ mainstream models (latest data as of April 2026) |
| Major Model Families | OpenAI / Anthropic / Google / xAI Grok / DeepSeek / GLM / Qwen / Kimi, etc. |
| Multimodal Coverage | Full stack: Chat, Embeddings, Vision, image generation, video generation |
| Pricing Highlights | Some models as low as 80% of official pricing; GPT-5.1 savings up to 90% with Prompt Cache hits |
| Registration Bonus | Approximately 3 million test tokens upon registration |
| Billing Method | Billed by token / image / second, unified balance, unified billing |
| Target Audience | Independent developers, startups, and enterprises in mainland China needing unified billing and invoices |

🎯 Quick Decision Advice: Before spending time reading the full article, you can answer two questions first: "Do I need models from three or more providers (for example OpenAI, Anthropic, and Google) at the same time?" and "Do I want to use a single API key for all calls and receive a unified invoice?" If the answer to both is "Yes," then APIYI (apiyi.com) is almost certainly worth adding to your shortlist. If the answer to both is "No," then connecting directly to the official SDKs will be more lightweight for your needs.

Dimension 1: How broad is the model coverage on APIYI?

Model coverage is the cornerstone for determining "what APIYI is like." If an API proxy service only connects to OpenAI, it adds little over calling the official API directly. A truly useful proxy service must provide all the mainstream models that developers actually use and companies are willing to pay for.

Major model families currently supported by APIYI

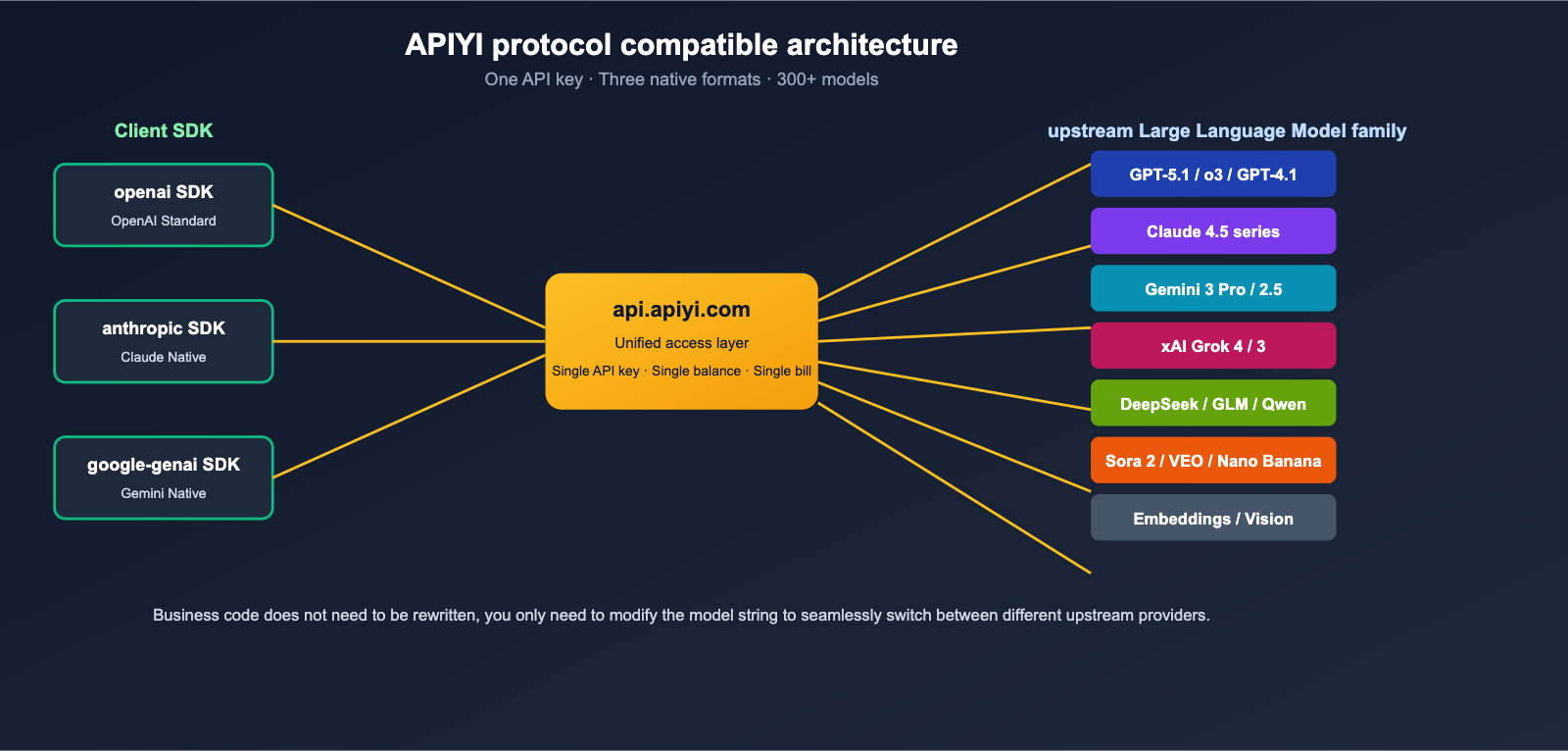

According to the public documentation on docs.apiyi.com, APIYI has integrated the major Large Language Model families and multimodal categories listed below under a single API key management system.

| Category | Representative Models | Use Case |
|---|---|---|
| OpenAI | GPT-5.1 / GPT-5 / o3 / o4-mini / GPT-4.1 / DALL·E 3 | Chat, Reasoning, Image |
| Anthropic | Claude Opus 4.5 / Sonnet 4.5 / Haiku 4.5 | Long text, Code, Agent |
| Google | Gemini 3 Pro Preview / 2.5 Pro / 2.5 Flash | Multimodal, Long context |
| xAI | Grok 4 / Grok 3 series | Real-time search, Social semantics |
| Top Chinese Models | DeepSeek V3.2 / GLM-4.6 / Qwen / Kimi K2 / ERNIE 4.0 | Chinese reasoning, Cost-efficiency |
| Image Generation | Nano Banana Pro / Flux / DALL·E 3 | Text-to-image, Image-to-image |
| Video Generation | Sora 2 / VEO 3.1 | Text-to-video |
| Text Embeddings | Embeddings series | RAG, Knowledge base |
| Vision Understanding | Vision interface | OCR, Multimodal Q&A |

From this table, a clear fact emerges: APIYI isn't just a platform that "proxies OpenAI." It bundles mainstream closed-source models, top-tier open-source models, and multimodal generation into a single interface. For teams building Agents, RAG, content generation, or multilingual customer service, there's almost no need to sign additional contracts with other vendors.

The real value of model coverage

The true value of model coverage isn't about "how many models you can call," but rather: When a specific model has issues or changes its pricing, can you switch to an alternative without modifying your business code? This is especially critical in early 2026—Nano Banana Pro has experienced intermittent performance degradation, Google AI Studio API has faced multiple outages, and Anthropic has previously rate-limited Claude Opus due to capacity issues. No vendor can be assumed to be "stable and available forever."

On APIYI, switching from GPT to Claude or Gemini often requires changing only a single model string. This "one-click switch" capability is, in itself, a quantifiable engineering asset.
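As a rough illustration of what "switching by model string" can look like in application code, here is a minimal fallback sketch. It assumes a `send(model, prompt)` callable that you supply (for example, a thin wrapper around an OpenAI-compatible chat call); `complete_with_fallback` and `fake_send` are hypothetical names used only for this example, and the model identifiers are the ones quoted in this article.

```python
from typing import Callable, Sequence


def complete_with_fallback(
    send: Callable[[str, str], str],
    prompt: str,
    models: Sequence[str],
) -> tuple[str, str]:
    """Try each model in order and return (model_used, reply_text)."""
    errors: list[str] = []
    for model in models:
        try:
            return model, send(model, prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))


def fake_send(model: str, prompt: str) -> str:
    """Stand-in transport that simulates an outage on the first model."""
    if model == "gpt-5.1":
        raise TimeoutError("simulated upstream outage")
    return f"[{model}] reply to: {prompt}"


used, reply = complete_with_fallback(
    fake_send, "ping", ["gpt-5.1", "claude-sonnet-4-5", "gemini-3-pro-preview"]
)
print(used)  # claude-sonnet-4-5
```

In a real integration, `send` would perform the actual API call. Because only the model string varies between attempts, the fallback order can live in configuration rather than in business logic.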

🎯 Selection Advice: If your product requires "GPT-5.1 + Claude Sonnet 4.5 + Gemini 3 Pro" as a triple-redundancy setup, we recommend prioritizing a platform like APIYI (apiyi.com) that has already integrated all three into a single interface, rather than opening separate enterprise accounts with each vendor—the latter would mean three sets of contracts, three sets of reconciliation, and three sets of monitoring.

Dimension 2: How is the protocol compatibility of APIYI?

The second most critical dimension is protocol compatibility. "Can I run my existing code without any changes?" determines the migration cost of adopting APIYI for your team.

Native support for OpenAI / Claude / Gemini formats

The documentation clearly states that APIYI supports three calling styles simultaneously:

- OpenAI API Standard (Baseline): Any code written using the `openai` Python or Node SDK only requires changing the `base_url` to `https://api.apiyi.com/v1` and swapping the API key for your APIYI key to get up and running immediately.

- Claude Native Format: Supports Anthropic's official SDK and native message format, meaning you can directly reuse Agent code written with the `anthropic` library.

- Gemini Native Format: Supports the request body structure of the official Google Gemini API, allowing you to natively utilize features like image-to-image and long context windows for Nano Banana Pro / Gemini 3 Pro.

This "parallel support for three formats" design is rare among proxy services—most similar products simply "translate" all models into the OpenAI format, which often comes at the cost of losing features like Claude's system prompt or Gemini's fileUri.
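To make the difference between the three formats concrete, the sketch below builds the same request (one system prompt, one user message) in each vendor's publicly documented body shape. The helper function names are hypothetical, and the sketch makes no claim about how APIYI routes each format internally. It only shows why squeezing everything into one schema requires translation.

```python
import json


def openai_chat_body(model: str, system: str, user: str) -> dict:
    """OpenAI Chat Completions: the system prompt is a message with role 'system'."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
        ],
    }


def claude_messages_body(model: str, system: str, user: str, max_tokens: int = 1024) -> dict:
    """Anthropic Messages: top-level 'system' field, and 'max_tokens' is required."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": system,
        "messages": [{"role": "user", "content": user}],
    }


def gemini_generate_body(system: str, user: str) -> dict:
    """Gemini generateContent: 'contents' made of 'parts'; the model goes in the URL path."""
    return {
        "systemInstruction": {"parts": [{"text": system}]},
        "contents": [{"role": "user", "parts": [{"text": user}]}],
    }


system_prompt = "You are a concise assistant."
question = "Explain quantum entanglement in one sentence."
bodies = {
    "openai": openai_chat_body("gpt-5.1", system_prompt, question),
    "claude": claude_messages_body("claude-sonnet-4-5", system_prompt, question),
    "gemini": gemini_generate_body(system_prompt, question),
}
for name, body in bodies.items():
    print(name, json.dumps(body)[:80])
```

The system prompt lives in a different place in each body, so a proxy that accepts only one schema has to map fields back and forth. Native passthrough avoids that mapping step.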

One API key to call them all

Another feature repeatedly emphasized in the documentation is "One account, one API key, one standard interface." From a developer's perspective, this means:

- No need to maintain three sets of SDK keys in your code;

- No need to distinguish between three vendors' balances in your monitoring;

- No need to write separate authentication logic for different models.

For early-stage products, one API key to rule them all significantly lowers the marginal cost of integrating new models. From a neutral standpoint, this is a design that benefits any team using multiple models at scale.

Dimension 3: What is the APIYI Pricing Structure Like?

The third core dimension is pricing. The pricing logic for an API proxy service generally falls into two categories: the first is "list prices higher than the official ones" (which you should basically ignore); the second is "usage-based tiered discounts" that bring the effective price below the official one. APIYI falls into the latter category.

Highlights of Public Pricing

According to the public information on the homepage of docs.apiyi.com:

- Image generation: Nano Banana Pro starts at approximately $0.05/image

- Video generation: Sora 2 starts at approximately $0.1/second

- Text tokens: Gemini 3.1 is about $2.00 / million tokens

- Prompt Cache hits: Savings on the GPT-5.1 series can reach up to 90%

- Top-up bonuses: Effective prices can drop to about 80% of official pricing

- New user perks: Get about 3 million test tokens upon registration

- Billing method: Pay-as-you-go, no monthly fees, no minimum consumption

For small and medium-sized teams, the most practical feature is the "significant price reduction after Prompt Cache hits"—for typical scenarios like RAG or Agents where "system prompts are long and user messages are short," the cache hit rate often exceeds 70%, making the actual bill considerably cheaper than paying the "official list price."
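A quick way to sanity-check the cache claim is to compute the blended input price yourself. The sketch below assumes the cache discount applies only to the cached share of input tokens. The $2.00 list price is a made-up placeholder, while the 70% hit rate and 90% discount are the figures quoted above.

```python
def blended_input_price(list_price: float, cached_fraction: float, cache_discount: float) -> float:
    """Average price per million input tokens when part of the input hits the cache.

    list_price      -- uncached price per million input tokens
    cached_fraction -- share of input tokens served from cache, between 0 and 1
    cache_discount  -- price reduction on cached tokens (0.9 means 90% off)
    """
    if not 0 <= cached_fraction <= 1 or not 0 <= cache_discount <= 1:
        raise ValueError("fractions must be between 0 and 1")
    cached_price = list_price * (1 - cache_discount)
    return cached_fraction * cached_price + (1 - cached_fraction) * list_price


list_price = 2.00  # hypothetical list price, USD per million input tokens
price = blended_input_price(list_price, cached_fraction=0.7, cache_discount=0.9)
saving = 1 - price / list_price
print(f"{price:.2f} USD per million input tokens, {saving:.0%} saved")
# 0.74 USD per million input tokens, 63% saved
```

Under these assumptions, a 70% hit rate with a 90% cache discount yields roughly a 63% saving on input tokens, not 90%. Output tokens are not affected by the cache at all.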

How to Truly Evaluate Pricing

To determine "what APIYI is like" in terms of pricing, there are two actionable steps you can take:

- Run a real prompt from your own business using the test tokens provided upon registration, rather than relying on standard community benchmarks;

- Calculate your 30-day usage volume using both official prices and APIYI prices; the difference is the actual savings you'll realize.

These two steps are more effective than listening to any review, because the "input/output token ratio" varies wildly across different businesses, and no general conclusion can replace your own real-world testing.
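The 30-day comparison described above is simple arithmetic. As a minimal sketch, assume you already know your monthly input and output token counts; every price below is a hypothetical placeholder to be replaced with the numbers from the two price lists you are comparing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_m: float   # USD per million input tokens
    output_per_m: float  # USD per million output tokens


def monthly_cost(input_tokens: int, output_tokens: int, price: Price) -> float:
    """Total bill for one month of usage at the given per-million prices."""
    return (
        input_tokens / 1_000_000 * price.input_per_m
        + output_tokens / 1_000_000 * price.output_per_m
    )


# Hypothetical workload: input-heavy RAG traffic over 30 days.
input_tokens, output_tokens = 300_000_000, 20_000_000

official = Price(input_per_m=2.00, output_per_m=8.00)  # placeholder list price
proxy = Price(input_per_m=1.60, output_per_m=6.40)     # placeholder: 80% of list

official_bill = monthly_cost(input_tokens, output_tokens, official)
proxy_bill = monthly_cost(input_tokens, output_tokens, proxy)
print(f"official={official_bill:.2f} proxy={proxy_bill:.2f} "
      f"saved={official_bill - proxy_bill:.2f}")
# official=760.00 proxy=608.00 saved=152.00
```

Rerunning the same calculation with a different input/output split shows how much the ratio shifts the result, which is why a general benchmark cannot stand in for your own traffic.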

🎯 Pricing Evaluation Advice: If you're unsure whether APIYI is cost-effective, we recommend using the test credits provided when you sign up at apiyi.com to run your actual business traffic for a week. Then, use the billing data to calculate your unit cost—this is much more accurate than looking at any price list.

Dimension 4: How is APIYI's Stability and Capacity?

No matter how many models are available or how cheap the prices are, it’s all for nothing if the stability isn't there. Following the Nano Banana Pro "intelligence degradation" incident and the Google AI Studio API outages in early 2026, this has become a top-tier metric for more and more teams.

APIYI's Public Stability Capabilities

The "Why Choose APIYI" section of docs.apiyi.com mentions several key design features:

| Capability | APIYI Public Description |
|---|---|
| High Availability | Multi-node deployment |
| Concurrency | Described as "unlimited concurrency," geared toward multi-industry production environments |
| Monitoring | 24/7 round-the-clock monitoring |
| Upstream Redundancy | Official infrastructure partnerships with major clouds like AWS / Azure / Google Cloud |
| Failover | Ability to switch to backup models under the same interface |

Note that APIYI does not provide a specific SLA percentage commitment in its public documentation. This is something that is very difficult for any API proxy service to "hard guarantee"—because it inherently depends on the stability of upstream providers like OpenAI, Anthropic, or Google. A neutral assessment is: The core of evaluating the stability of an API proxy service isn't looking at a single upstream's SLA, but rather whether it has the ability to "switch to alternatives when an upstream fails." APIYI naturally possesses this through its "one interface + multiple models" design.

Neutral Stability Evaluation Method

If you want to verify "what APIYI is like" for yourself, we recommend conducting a 7-day "passive monitoring" test:

- Send a fixed prompt using a very cheap model (like Gemini Flash) every 5 minutes;

- Record the latency, status code, and error messages for each request;

- After 7 days, calculate the success rate, P95/P99 latency, and the distribution of error types.

This test doesn't require writing complex code, and it will help you obtain real stability data "within your own network environment," which is far more reliable than any official marketing claims.
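The analysis half of this test can be sketched in a few lines. The example below assumes each probe was logged as a status code, a latency, and an optional error label. `Sample`, `percentile`, and `summarize` are hypothetical names, the data is synthetic, and percentiles use the nearest-rank method.

```python
import math
from collections import Counter
from dataclasses import dataclass


@dataclass
class Sample:
    status: int        # HTTP status code; 0 for a network-level failure
    latency_ms: float  # wall-clock time of the request
    error: str = ""    # short error label, empty on success


def percentile(values, pct):
    """Nearest-rank percentile; returns None when there are no values."""
    if not values:
        return None
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(samples):
    """Success rate, P95/P99 latency of successful calls, and error counts."""
    if not samples:
        raise ValueError("no samples recorded")
    latencies, errors = [], Counter()
    for s in samples:
        if 200 <= s.status < 300:
            latencies.append(s.latency_ms)
        else:
            errors[s.error or f"HTTP {s.status}"] += 1
    return {
        "success_rate": len(latencies) / len(samples),
        "p95_ms": percentile(latencies, 95),
        "p99_ms": percentile(latencies, 99),
        "errors": dict(errors),
    }


# Synthetic log standing in for real probes: 98 successes and 2 failures.
samples = [Sample(200, 100.0 + i) for i in range(98)]
samples += [Sample(429, 50.0, "rate_limited"), Sample(0, 30000.0, "timeout")]
report = summarize(samples)
print(report)
```

One probe every 5 minutes for 7 days produces 2,016 samples, which is enough for a meaningful P99. The probe itself only needs to time one small request and append a `Sample` to a log.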

Dimension 5: How is the APIYI Documentation and Onboarding Experience?

Documentation is the "storefront" by which developers judge a platform. A quick way to determine "how good APIYI is" is to spend 30 minutes reading through docs.apiyi.com to see if you can "get your first request running on your own."

Bilingual Documentation Structure

The APIYI documentation center at docs.apiyi.com provides both Chinese and English versions (via the /en path), with a core structure clearly divided into several sections:

- Quick Start: From registration to your first API call.

- Model List: 300+ models categorized by family, complete with descriptions and examples.

- API Reference: Chat, Image, Video, Embeddings, Vision, etc.

- SDK Integration: Official support for OpenAI SDK, Claude SDK, and Gemini SDK.

- Enterprise Capabilities: Invoices, corporate payments, and reconciliation.

- FAQ: Error codes, rate limits, and best practices.

One-Line Migration Example

The migration example provided in the documentation—showing how to "switch from an official SDK to APIYI"—is incredibly concise. Below is an equivalent Python example, assuming you are already using the official OpenAI SDK:

```python
from openai import OpenAI

# Only change two lines: base_url + api_key
client = OpenAI(
    base_url="https://api.apiyi.com/v1",
    api_key="YOUR_APIYI_KEY",
)

# Business logic remains completely unchanged
resp = client.chat.completions.create(
    model="gpt-5.1",
    messages=[
        {"role": "user", "content": "Explain quantum entanglement in one sentence."}
    ],
)

print(resp.choices[0].message.content)
```

When switching to Claude or Gemini models, you only need to change the model field to claude-sonnet-4-5 or gemini-3-pro-preview. There is no need to change the SDK, API key, or base_url.

Neutral Rating of Documentation Experience

From a neutral perspective, APIYI's documentation ranks "significantly above average" among Chinese API proxy services:

- ✅ Complete bilingual support, making it accessible for overseas users.

- ✅ High update frequency for the model list (Gemini 3 Pro, GPT-5.1, Claude 4.5, etc., appear immediately).

- ✅ Provides native formats rather than "translating everything to OpenAI," which is friendly for advanced features.

- ⚠️ Some details (such as specific rate limits for each model or Prompt Cache hit rules) are scattered across multiple pages and require some digging.

The overall conclusion on the documentation experience is: It's sufficient for independent developers, though enterprise-level deep integration might require a bit more searching.

🎯 Onboarding Tip: If you are new to APIYI, we recommend running a minimal OpenAI-compatible request using the "Quick Start" page on docs.apiyi.com, then trying it again by swapping in the model names for Claude and Gemini. You'll have a real feel for the platform within 15 minutes.

Dimension 6: How is APIYI's Enterprise Capability?

The sixth dimension is enterprise capability. While this might be optional for independent developers, for any company that needs to go through a formal procurement process, this is where APIYI clearly distinguishes itself from "connecting directly to OpenAI."

What Mainland Chinese Enterprises Care About Most

The documentation center explicitly mentions key capabilities like corporate payments + invoicing. We've listed the "top enterprise concerns" for comparison:

| Enterprise Concern | APIYI Public Capability |
|---|---|
| Supports RMB settlement | ✅ Supported |
| Can issue formal VAT invoices | ✅ Corporate invoicing supported |
| Supports bank transfers / Corporate WeChat Pay | ✅ Corporate payment methods supported |
| Requires overseas credit card | ❌ Not required |
| Requires foreign phone number | ❌ Not required |
| Multi-account / Team management | ✅ Unified management of multiple models under one account |
| Financial reconciliation | ✅ Unified balance + unified billing |

For a typical SaaS team in mainland China, this set of capabilities means that "the process of integrating GPT-5.1 / Claude 4.5 / Gemini 3 Pro can follow the same procurement workflow as integrating Alibaba Cloud or Tencent Cloud"—something that is nearly impossible to achieve when connecting directly to official APIs.

Neutral Rating of Enterprise Capability

From a neutral perspective, this is a "significant plus" for APIYI. If your team happens to be in the awkward position of "wanting to use cutting-edge models but being blocked by overseas credit card requirements, personal settlement issues, or invoicing needs," this single factor is enough to put APIYI on your final shortlist.

Dimension 7: Ease of Use and Hidden Barriers for APIYI

The final dimension is "ease of use," which also covers some "hidden barriers" that aren't usually mentioned on marketing pages.

Public Onboarding Path

According to the standard process described at docs.apiyi.com:

- Register an account at api.apiyi.com (supports WeChat/Email);

- Create an API key in the console;

- Copy the official code snippet and tweak a couple of lines;

- Run your first request using the ~3 million free tokens provided upon registration;

- Top up your balance or request an invoice based on your usage.

For any developer who has worked with OpenAI or Claude APIs, the entire process is essentially at the level of "getting your first successful call in under 5 minutes."

Hidden Barriers from a Neutral Perspective

To remain neutral, we should also point out a few details that might dampen the experience for some users. These aren't unique to APIYI; they are common traits of almost all AI API proxy services:

| Hidden Barrier | Explanation |
|---|---|
| Inconsistent rate limits across models | You need to check specific RPM/TPM limits on different documentation pages |
| Prompt Cache savings vary by use case | The advertised "90% savings" is a best-case figure that applies to cached tokens; realized savings depend on your hit rate and are often lower |
| Some preview models change with upstream | Preview models like gemini-3-pro-preview require monitoring upstream announcements |
| Potential for minor queuing during peak hours | This is the natural trade-off for any shared proxy service |
| Longer latency for video generation | Latency for Sora 2 / VEO 3.1 is determined by upstream providers, not the proxy |
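For the rate-limit and peak-hour queuing rows above, a common client-side mitigation is retrying with exponential backoff and jitter. The sketch below is a generic pattern, not something taken from the APIYI documentation: `RateLimited` is a hypothetical exception standing in for an HTTP 429 response, and the delay values are arbitrary defaults.

```python
import random
import time


class RateLimited(Exception):
    """Hypothetical error standing in for an HTTP 429 response."""


def with_backoff(call, max_attempts=5, base_delay=0.5, sleep=time.sleep):
    """Retry `call` on RateLimited, doubling the wait each time and adding jitter."""
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            delay = base_delay * 2 ** attempt
            sleep(delay + random.uniform(0, delay / 2))


# Demo: a fake call that is rate-limited twice, then succeeds.
attempts = {"n": 0}


def flaky_call():
    attempts["n"] += 1
    if attempts["n"] <= 2:
        raise RateLimited("429 Too Many Requests")
    return "ok"


waits = []  # record the waits instead of actually sleeping
result = with_backoff(flaky_call, sleep=waits.append)
print(result, len(waits))  # ok 2
```

With the official `openai` Python SDK, the corresponding exception is `openai.RateLimitError`, and the client also accepts a `max_retries` setting, so a hand-written loop like this is mainly useful when you want explicit control over the schedule.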

Once these points are laid out, the answer to "How is APIYI?" becomes clear—it has a very specific "ideal user profile."

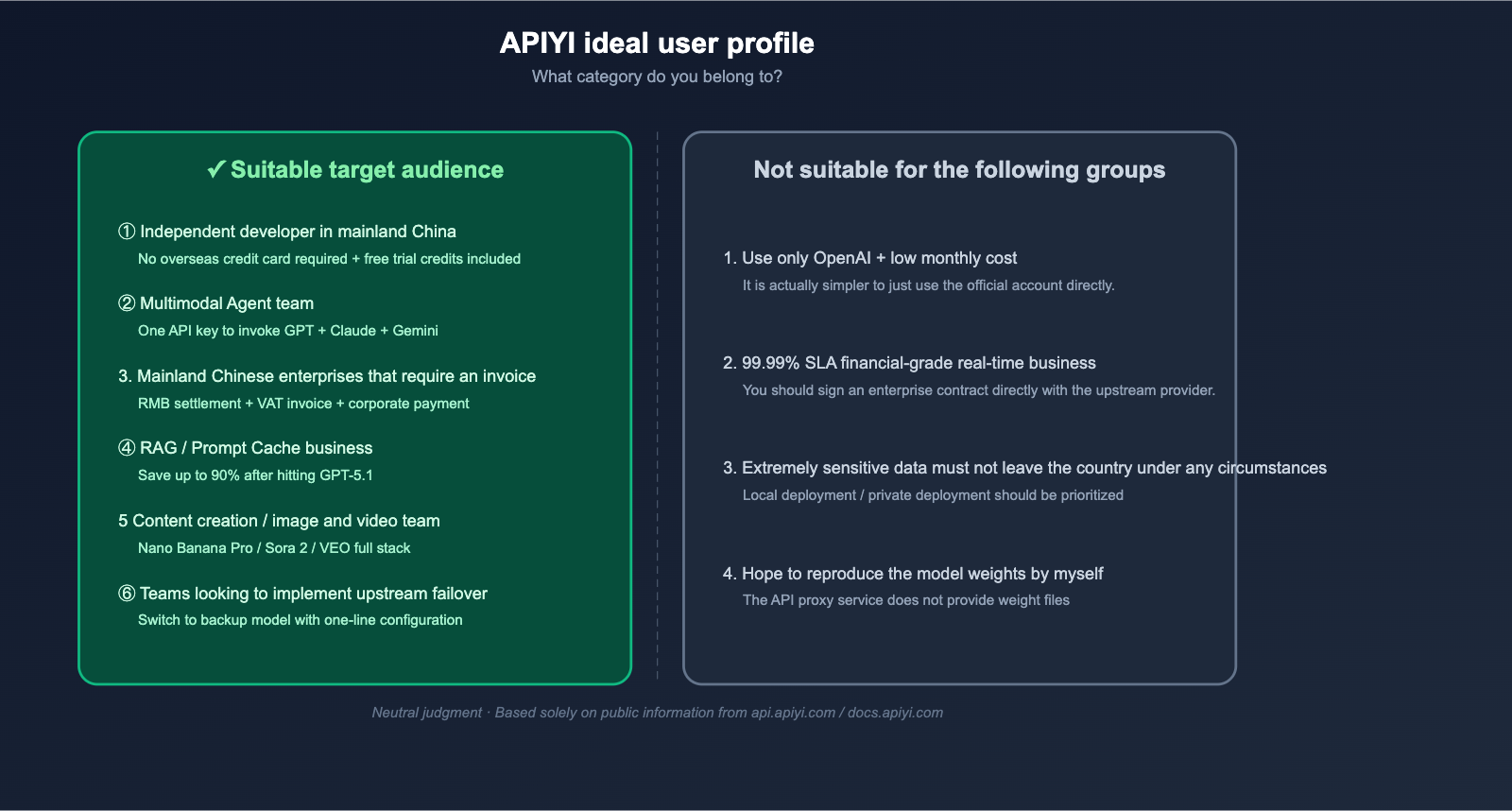

Comprehensive Verdict on APIYI: Who Is It For?

Who It’s For

| User Group | Why It’s a Good Fit |
|---|---|
| Independent developers in Mainland China | No need for overseas credit cards; get test credits upon registration |
| Multi-model Agent teams | Use one API key for GPT + Claude + Gemini without rewriting business logic |
| Mainland Chinese enterprises needing invoices | Supports RMB payments, corporate transfers, and VAT invoices |
| RAG / Prompt Cache users | Significant savings once GPT-5.1 hits are achieved |
| Content creation / Image & Video teams | Full-stack access to Nano Banana Pro / Sora 2 / VEO 3.1 |
| Teams needing "upstream failover pools" | Switch to another provider with a single line of configuration if one goes down |

Who It’s Not For

| User Group | Reason |
|---|---|
| Users only using OpenAI with very low monthly spend | Using an official account directly is simpler |
| Financial-grade real-time services requiring 99.99% SLA | No proxy platform is suitable; sign enterprise contracts directly with upstream providers |
| Users with extremely sensitive data that cannot leave the region | Local deployment or private solutions should be prioritized |
| Users wanting to reproduce model weights | Proxy platforms do not provide model weights |

🎯 Decision Advice: Compare the tables above with your team's current situation. If you hit ≥ 2 items in the "For" column, APIYI (apiyi.com) is almost certainly worth spending an afternoon running a proof-of-concept for your actual business. If you hit items in the "Not For" column, no proxy platform should be your first choice.

APIYI FAQ

Q1: What are the actual differences between using APIYI and the official OpenAI / Claude APIs?

There are four core differences: First, a single API key allows you to access 300+ models, including GPT, Claude, Gemini, Grok, and DeepSeek, with code changes limited only to the model field. Second, you can settle payments in RMB and receive VAT invoices, eliminating the need for overseas credit cards in mainland China. Third, you get unified billing and balance management, which significantly lowers your operational overhead. Fourth, some models offer tiered discounts, and you'll see further price reductions when Prompt Cache is hit. If any of these points matter to you, just head over to apiyi.com and run a quick test to form your own judgment.

Q2: Does APIYI support the official OpenAI SDK? Do I need to rewrite my business code?

Yes, it supports it, and no, you don't need to rewrite your business code. According to the migration guide at docs.apiyi.com, you only need to change the base_url of your OpenAI SDK to https://api.apiyi.com/v1 and replace the api_key with your APIYI key. Your business logic (messages, tools, stream, etc.) remains exactly the same. The same applies to Claude and Gemini; APIYI supports native SDKs for all three, so the migration cost is essentially "two lines of code."

Q3: Are the Claude / Gemini models on APIYI "translated"? Will I lose any features?

Not at all. The documentation clearly states that APIYI supports OpenAI Standard, Claude Native, and Gemini Native formats simultaneously, rather than forcing all models into an OpenAI-style structure. This means advanced features like Claude's system prompt, Gemini's fileUri for image-to-image, and Anthropic's tool_use are preserved natively and aren't "flattened" to the lowest common denominator.

Q4: Is APIYI really cheaper than the official providers?

It depends on how you use it. On paper, APIYI offers prices lower than the official rates for many models (as low as 80% of the official price), with further discounts when Prompt Cache is hit (savings of up to 90% for the GPT-5.1 series). However, no number is meaningful without considering your specific input-to-output token ratio. The most responsible way to judge is to use the ~3 million test tokens provided upon registration to run your actual business workload and perform a precise diff against your official bills.

Q5: Is APIYI suitable for large enterprises? Is there an SLA?

It's suitable for companies in mainland China that need "unified billing and a standard procurement process." While docs.apiyi.com mentions multi-node deployment, 24/7 monitoring, and enterprise payment/invoicing capabilities, it doesn't publicly commit to a specific SLA percentage—which is standard for almost all API proxy services, as availability ultimately depends on upstream providers like OpenAI, Anthropic, and Google. We recommend that large enterprises conduct a 7-day passive monitoring period before deciding to integrate it into their primary production pipeline.

Q6: Is there a risk of "sudden price hikes" or "model deprecation" when using APIYI?

Any proxy service relying on third-party upstreams faces this risk, and APIYI is no exception. A neutral perspective is: As long as you design your business architecture to be "model-agnostic," this risk can be engineered away. For example, use a unified model field to manage your list of available models, prepare backups of the same tier for every critical model, and set price fluctuations as an alert trigger. Once this mechanism is in place, even if a model's price changes or it gets deprecated, you only need to change one line of configuration.
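One possible shape for such a model-agnostic setup is sketched below: business code asks for a tier, the tier maps to an ordered list of model identifiers, and a small check flags price increases. The tier names, the 10% threshold, and the prices are assumptions made for illustration, and the exact model identifier strings should be checked against the platform's model list.

```python
# Ordered candidates per tier; business code refers to tiers, never to model names.
MODEL_TIERS = {
    "reasoning": ["gpt-5.1", "claude-sonnet-4-5", "gemini-3-pro-preview"],
    "cheap": ["gemini-2.5-flash", "claude-haiku-4-5"],  # assumed identifiers
}


def pick_model(tier: str, unavailable: frozenset = frozenset()) -> str:
    """Return the first model in the tier that is not marked unavailable."""
    for model in MODEL_TIERS[tier]:
        if model not in unavailable:
            return model
    raise LookupError(f"no available model in tier {tier!r}")


def price_alerts(baseline: dict, current: dict, threshold: float = 0.10) -> list:
    """Models whose current price exceeds the baseline by more than `threshold`."""
    return sorted(
        model
        for model, old in baseline.items()
        if model in current and current[model] > old * (1 + threshold)
    )


# A deprecated or failing model is skipped without touching business code.
print(pick_model("reasoning", unavailable=frozenset({"gpt-5.1"})))
# claude-sonnet-4-5

# Hypothetical prices in USD per million tokens.
baseline = {"gpt-5.1": 2.00, "claude-sonnet-4-5": 3.00}
current = {"gpt-5.1": 2.50, "claude-sonnet-4-5": 3.10}
print(price_alerts(baseline, current))
# ['gpt-5.1']
```

Keeping `MODEL_TIERS` in configuration means a price change or deprecation becomes an edit to one list rather than a code change.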

Conclusion: A Final Neutral Verdict on APIYI

By looking at these seven dimensions, the question "How is APIYI?" has a relatively clear answer: It is an AI API proxy service for Chinese developers and enterprises that bundles 300+ mainstream models, three native protocols, and mainland China-friendly billing into a single interface. It isn't the "cheapest in every scenario," nor does it offer a "financial-grade 99.99% SLA," but for scenarios where you need "cutting-edge models, engineering convenience, and invoices/RMB settlement," its product design is a strong fit.

If I had to summarize my neutral assessment of APIYI in one sentence: It sacrifices a degree of "extreme deep control" in exchange for "high integration breadth and operational convenience," which happen to be the two things many AI teams in mainland China lack most. In the 2026 landscape, where "models change weekly and upstreams fluctuate monthly," this "breadth-first + engineering-packaged" product form is becoming increasingly valuable.

🎯 Final Recommendation: If you still have doubts about "How is APIYI?" after reading this review, there is only one efficient way to judge: spend 5 minutes registering an account at apiyi.com, copy the minimal code example from docs.apiyi.com, run a real prompt from your business, and use the billing data to verify all the conclusions in this article. No review can replace your own testing, but the dimensions provided here will help you minimize your testing time.

Author: APIYI Team | Focusing on AI Large Language Model deployment and engineering practices. For more model evaluations and troubleshooting, please visit APIYI at apiyi.com.